Grasp Like Humans: Learning Generalizable Multifingered Grasping From Human Proprioceptive Sensorimotor Integration

IF 10.5

Zone 1: Computer Science

Q1 ROBOTICS

Citations: 0

Abstract


Grasp Like Humans: Learning Generalizable Multifingered Grasping From Human Proprioceptive Sensorimotor Integration

Tactile and kinesthetic perceptions are crucial for human dexterous manipulation, enabling reliable grasping of objects via proprioceptive sensorimotor integration. For robotic hands, even though acquiring such tactile and kinesthetic feedback is feasible, establishing a direct mapping from this sensory feedback to motor actions remains challenging. In this article, we propose a novel glove-mediated tactile–kinematic perception–prediction framework for grasp skill transfer from human intuitive and natural operation to robotic execution based on imitation learning, and its effectiveness is validated through generalized grasping tasks, including those involving deformable objects. First, we integrate a data glove to capture tactile and kinesthetic data at the joint level. The glove is adaptable for both human and robotic hands, allowing data collection from natural human hand demonstrations across different scenarios. It ensures consistency in the raw data format, enabling evaluation of grasping for both human and robotic hands. Second, we establish a unified representation of multimodal inputs based on graph structures with polar coordinates. We explicitly integrate the morphological differences into the designed representation, enhancing the compatibility across different demonstrators and robotic hands. Furthermore, we introduce the tactile–kinesthetic spatio-temporal graph networks, which leverage multidimensional subgraph convolutions and attention-based long short-term memory (LSTM) layers to extract spatio-temporal features from graph inputs to predict node-based states for each hand joint. These predictions are then mapped to final commands through a force-position hybrid mapping. Comparative experiments and ablation studies demonstrate that our approach surpasses other methods in grasp success rate, finger coordination, contact force management, and both grasp and computational efficiency, achieving results most akin to human grasping. The robustness of our approach is also validated through multiple randomized experimental setups, and its generalization capability is tested across diverse objects and robotic hands.

求助全文

通过发布文献求助,成功后即可免费获取论文全文。

去求助

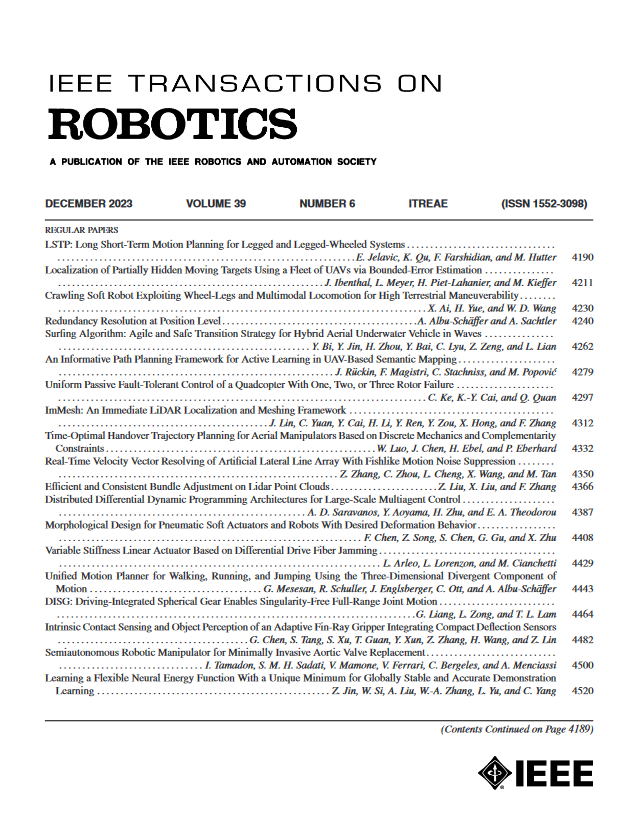

Source journal

IEEE Transactions on Robotics

Engineering & Technology: Robotics

CiteScore

14.90

Self-citation rate

5.10%

Articles published

259

Review time

6.0 months

About the journal:

The IEEE Transactions on Robotics (T-RO) is dedicated to publishing fundamental papers covering all facets of robotics, drawing on interdisciplinary approaches from computer science, control systems, electrical engineering, mathematics, mechanical engineering, and beyond. From industrial applications to service and personal assistants, surgical operations to space, underwater, and remote exploration, robots and intelligent machines play pivotal roles across various domains, including entertainment, safety, search and rescue, military applications, agriculture, and intelligent vehicles.

Special emphasis is placed on intelligent machines and systems designed for unstructured environments, where a significant portion of the environment remains unknown and beyond direct sensing or control.
