BEVPlace++: Fast, Robust, and Lightweight LiDAR Global Localization for Autonomous Ground Vehicles

Impact factor: 10.5

Zone 1, Computer Science

Q1, Robotics

Citations: 0

Abstract

This article introduces BEVPlace++, a novel, fast, and robust light detection and ranging (LiDAR) global localization method for autonomous ground vehicles (AGVs). It applies lightweight convolutional neural networks (CNNs) to bird’s eye view (BEV) image-like representations of LiDAR data, achieving accurate global localization through place recognition followed by 3-degree-of-freedom (3-DoF) pose estimation. Our detailed analyses reveal that CNNs are inherently effective at extracting distinctive features from LiDAR BEV images. Remarkably, keypoints of two BEV images separated by a large translation can be effectively matched using CNN-extracted features. Building on this insight, we design a rotation equivariant module (REM) that obtains distinctive features while enhancing robustness to rotational changes. A rotation equivariant and invariant network (REIN) is then developed by cascading REM and a descriptor generator, NetVLAD, to sequentially generate rotation equivariant local features and rotation invariant global descriptors. The global descriptors are first used to achieve robust place recognition, and the local features are then used for accurate pose estimation. Experimental results on seven public datasets and our AGV platform demonstrate that BEVPlace++, even when trained only with place labels on a small dataset (3000 frames of KITTI), generalizes well to unseen environments, performs consistently across different days and years, and adapts to various types of LiDAR scanners. BEVPlace++ achieves state-of-the-art performance in multiple tasks, including place recognition, loop closure detection, and global localization. In addition, BEVPlace++ is lightweight, runs in real time, and does not require accurate pose supervision, making it highly convenient to deploy.
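The two-stage pipeline described in the abstract (retrieve a place with a global descriptor, then estimate a 3-DoF pose from matched local features) can be illustrated with a minimal NumPy sketch. It is not the BEVPlace++ implementation. The point-density BEV encoding, the grid parameters, and the function names `bev_density_image`, `retrieve`, and `estimate_pose_2d` are hypothetical. The global descriptors (produced by REIN/NetVLAD in the paper) are taken as given arrays, and keypoint correspondences are assumed to be already matched. The pose step uses a standard SVD-based (Kabsch) least-squares rigid alignment in 2D; the paper's own estimator may differ, for example by adding RANSAC to reject outlier matches.

```python
import numpy as np


def bev_density_image(points, resolution=0.4, size=200):
    """Project an (N, 3) LiDAR point cloud onto a size x size BEV grid.

    Each cell counts the points whose (x, y) falls inside it; counts are then
    normalized to [0, 1]. The density encoding, resolution (metres per pixel)
    and grid size are illustrative assumptions, not values from the paper.
    """
    half = size * resolution / 2.0
    xy = np.asarray(points, dtype=np.float64)[:, :2]
    # Keep only points inside the square window centred on the sensor.
    inside = np.all(np.abs(xy) < half, axis=1)
    idx = ((xy[inside] + half) / resolution).astype(int)
    idx = np.clip(idx, 0, size - 1)  # guard against floating-point edge cases
    img = np.zeros((size, size), dtype=np.float64)
    # Row index from y, column index from x; add.at accumulates repeated cells.
    np.add.at(img, (idx[:, 1], idx[:, 0]), 1.0)
    peak = img.max()
    return img / peak if peak > 0 else img


def retrieve(query_desc, database_descs):
    """Place recognition step: index of the most cosine-similar database descriptor."""
    q = query_desc / np.linalg.norm(query_desc)
    db = database_descs / np.linalg.norm(database_descs, axis=1, keepdims=True)
    return int(np.argmax(db @ q))


def estimate_pose_2d(src, dst):
    """3-DoF pose step: least-squares yaw and translation with dst ~= R @ src + t.

    src, dst: (M, 2) arrays of already-matched keypoint coordinates in metres.
    Uses the SVD-based Kabsch solution restricted to proper rotations.
    """
    src_c = src.mean(axis=0)
    dst_c = dst.mean(axis=0)
    H = (src - src_c).T @ (dst - dst_c)      # 2x2 cross-covariance
    U, _, Vt = np.linalg.svd(H)
    d = np.sign(np.linalg.det(Vt.T @ U.T))   # -1 would indicate a reflection
    R = Vt.T @ np.diag([1.0, d]) @ U.T
    t = dst_c - R @ src_c
    yaw = float(np.arctan2(R[1, 0], R[0, 0]))
    return yaw, t


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    src = rng.uniform(-20.0, 20.0, size=(50, 2))
    yaw_true = np.deg2rad(30.0)
    c, s = np.cos(yaw_true), np.sin(yaw_true)
    R_true = np.array([[c, -s], [s, c]])
    dst = src @ R_true.T + np.array([5.0, -3.0])  # noise-free correspondences
    yaw, t = estimate_pose_2d(src, dst)
    # Should print values very close to 30 degrees and [5, -3].
    print(np.rad2deg(yaw), t)
```

Keypoints detected in BEV pixel coordinates would first be converted to metres using the grid resolution before the alignment step.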

Source journal

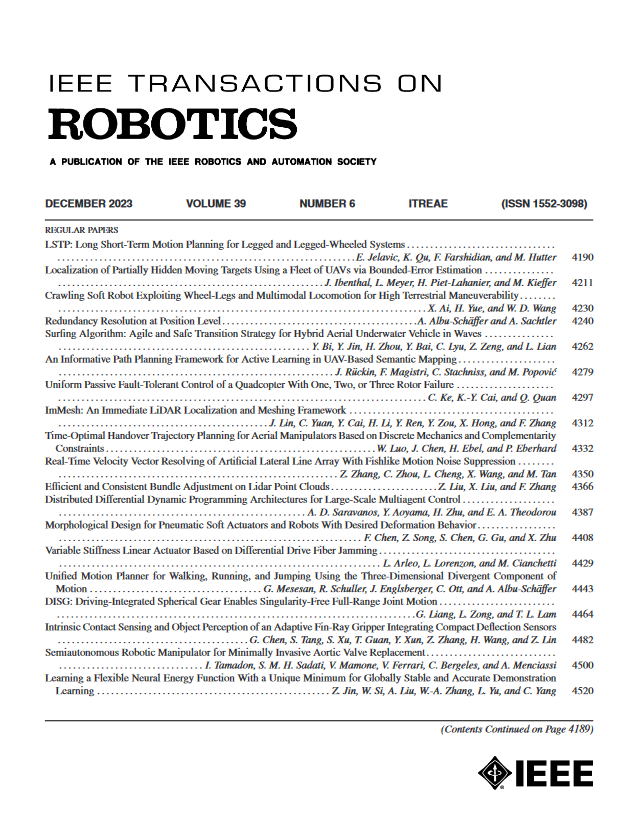

IEEE Transactions on Robotics

Engineering and Technology: Robotics

CiteScore: 14.90

Self-citation rate: 5.10%

Articles published: 259

Review time: 6.0 months

Journal description:

The IEEE Transactions on Robotics (T-RO) is dedicated to publishing fundamental papers covering all facets of robotics, drawing on interdisciplinary approaches from computer science, control systems, electrical engineering, mathematics, mechanical engineering, and beyond. From industrial applications to service and personal assistants, surgical operations to space, underwater, and remote exploration, robots and intelligent machines play pivotal roles across various domains, including entertainment, safety, search and rescue, military applications, agriculture, and intelligent vehicles.

Special emphasis is placed on intelligent machines and systems designed for unstructured environments, where a significant portion of the environment remains unknown and beyond direct sensing or control.
