ESVO2: Direct Visual-Inertial Odometry With Stereo Event Cameras

IF 9.4

Zone 1 (Computer Science)

Q1 ROBOTICS

Citations: 0

Abstract



Event-based visual odometry is a specific branch of visual simultaneous localization and mapping (SLAM) techniques that aims to solve the tracking and mapping subproblems (typically in parallel) by exploiting the special working principles of neuromorphic (i.e., event-based) cameras. Due to the motion-dependent nature of event data, explicit data association (i.e., feature matching) under large-baseline viewpoint changes is difficult to establish, making direct methods a more rational choice. However, state-of-the-art direct methods are limited by the high computational complexity of the mapping subproblem and by the degeneracy of camera pose tracking in certain rotational degrees of freedom (DoF). In this article, we tackle these issues by building an event-based stereo visual-inertial odometry system on top of a direct pipeline known as event-based stereo visual odometry (ESVO). Specifically, to speed up the mapping operation, we propose an efficient strategy for sampling contour points according to the local dynamics of events. Mapping performance is also improved in terms of structure completeness and local smoothness by merging the temporal stereo and static stereo results. To circumvent the degeneracy of camera pose tracking in recovering the pitch and yaw components of general 6-DoF motion, we introduce IMU measurements as motion priors via preintegration. To this end, a compact back-end is proposed for continuously updating the IMU bias and predicting the linear velocity, enabling accurate motion prediction for camera pose tracking. The resulting system scales well with modern high-resolution event cameras and achieves better global positioning accuracy in large-scale outdoor environments. Extensive evaluations on five publicly available datasets featuring different resolutions and scenarios demonstrate the superior performance of the proposed system against five state-of-the-art methods. Compared to ESVO, our new pipeline significantly reduces the camera pose tracking error by 40%–80% in terms of absolute trajectory error and by 20%–80% in terms of relative pose error; at the same time, mapping efficiency improves by a factor of five. We release our pipeline as open-source software for future research in this field.
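The abstract states that IMU measurements enter camera pose tracking as motion priors via preintegration, with a back-end that keeps the IMU bias up to date. The following is a minimal Python sketch of standard discrete-time IMU preintegration between two keyframes, followed by the pose/velocity prediction it enables. It is not ESVO2's implementation. Assumptions: gyroscope and accelerometer samples arrive at a fixed period `dt`, biases are constant over the window, the world frame is z-up with gravity (0, 0, -9.81), and noise covariance propagation and bias Jacobians (which a real back-end needs) are omitted. The names `Preintegrated`, `preintegrate`, `predict`, and the helper functions are hypothetical.

```python
import math
from dataclasses import dataclass, field

# Hypothetical type aliases for this sketch (not taken from ESVO2's code base).
Vec3 = tuple[float, float, float]
Mat3 = list[list[float]]


def identity() -> Mat3:
    return [[1.0 if i == j else 0.0 for j in range(3)] for i in range(3)]


def mat_mul(A: Mat3, B: Mat3) -> Mat3:
    return [[sum(A[i][k] * B[k][j] for k in range(3)) for j in range(3)] for i in range(3)]


def mat_vec(A: Mat3, v: Vec3) -> Vec3:
    return tuple(sum(A[i][k] * v[k] for k in range(3)) for i in range(3))


def so3_exp(w: Vec3) -> Mat3:
    """Rodrigues' formula: maps a rotation vector to a rotation matrix."""
    I = identity()
    K = [[0.0, -w[2], w[1]], [w[2], 0.0, -w[0]], [-w[1], w[0], 0.0]]  # skew(w)
    theta = math.sqrt(sum(c * c for c in w))
    if theta < 1e-9:
        # First-order approximation I + skew(w) for tiny rotations.
        return [[I[i][j] + K[i][j] for j in range(3)] for i in range(3)]
    a = math.sin(theta) / theta
    b = (1.0 - math.cos(theta)) / (theta * theta)
    K2 = mat_mul(K, K)
    return [[I[i][j] + a * K[i][j] + b * K2[i][j] for j in range(3)] for i in range(3)]


@dataclass
class Preintegrated:
    """Relative motion deltas between two keyframes, expressed in the first IMU frame."""
    dR: Mat3 = field(default_factory=identity)
    dv: Vec3 = (0.0, 0.0, 0.0)
    dp: Vec3 = (0.0, 0.0, 0.0)
    dt: float = 0.0


def preintegrate(samples, dt: float, bg: Vec3, ba: Vec3) -> Preintegrated:
    """Integrates (gyro, accel) samples taken every `dt` seconds.

    Assumes constant gyro/accel biases bg/ba over the window (sketch only).
    """
    pre = Preintegrated()
    for gyro, acc in samples:
        w = tuple(gyro[i] - bg[i] for i in range(3))  # bias-corrected angular rate
        a = tuple(acc[i] - ba[i] for i in range(3))   # bias-corrected specific force
        Ra = mat_vec(pre.dR, a)
        # Update position, then velocity, then rotation, so each uses the previous state.
        pre.dp = tuple(pre.dp[i] + pre.dv[i] * dt + 0.5 * Ra[i] * dt * dt for i in range(3))
        pre.dv = tuple(pre.dv[i] + Ra[i] * dt for i in range(3))
        pre.dR = mat_mul(pre.dR, so3_exp(tuple(c * dt for c in w)))
        pre.dt += dt
    return pre


def predict(R_i: Mat3, v_i: Vec3, p_i: Vec3, pre: Preintegrated,
            g: Vec3 = (0.0, 0.0, -9.81)):
    """Predicts world-frame rotation, velocity, and position at the next keyframe."""
    T = pre.dt
    R_dv = mat_vec(R_i, pre.dv)
    R_dp = mat_vec(R_i, pre.dp)
    R_j = mat_mul(R_i, pre.dR)
    v_j = tuple(v_i[k] + g[k] * T + R_dv[k] for k in range(3))
    p_j = tuple(p_i[k] + v_i[k] * T + 0.5 * g[k] * T * T + R_dp[k] for k in range(3))
    return R_j, v_j, p_j
```

As a sanity check, a stationary IMU whose accelerometer reads only the gravity reaction (0, 0, 9.81) should yield a predicted velocity and displacement of approximately zero. The `bg` and `ba` arguments are where continuously updated bias estimates, such as those maintained by the back-end the abstract describes, would be fed in; because the deltas are computed relative to the first keyframe, they can be reused as a motion prior without knowing the absolute pose in advance.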


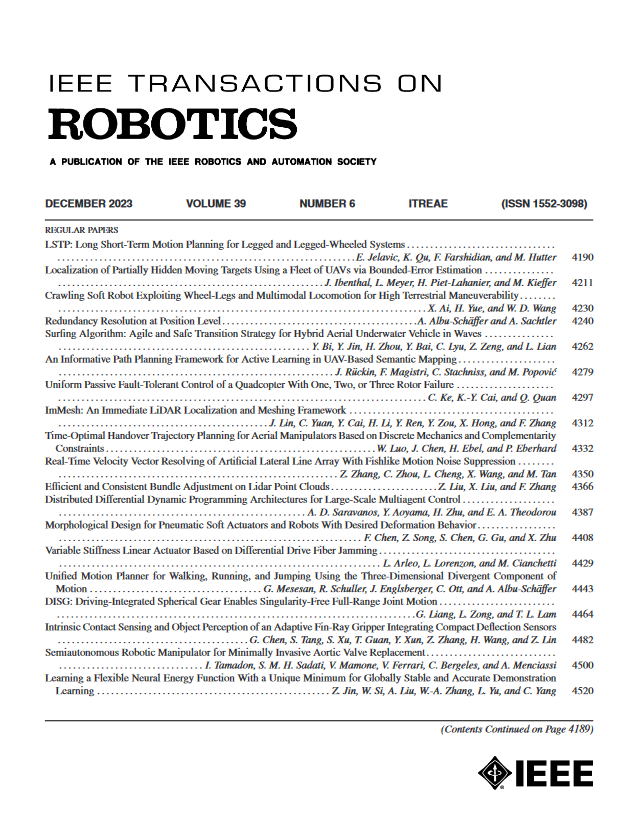

Source journal: IEEE Transactions on Robotics

Category: Engineering & Technology - Robotics

CiteScore: 14.90

Self-citation rate: 5.10%

Articles published: 259

Review time: 6.0 months

Journal description:

The IEEE Transactions on Robotics (T-RO) is dedicated to publishing fundamental papers covering all facets of robotics, drawing on interdisciplinary approaches from computer science, control systems, electrical engineering, mathematics, mechanical engineering, and beyond. From industrial applications to service and personal assistants, surgical operations to space, underwater, and remote exploration, robots and intelligent machines play pivotal roles across various domains, including entertainment, safety, search and rescue, military applications, agriculture, and intelligent vehicles.

Special emphasis is placed on intelligent machines and systems designed for unstructured environments, where a significant portion of the environment remains unknown and beyond direct sensing or control.
