RING#: PR-by-PE Global Localization With Roto-Translation Equivariant Gram Learning

Impact factor: 9.4

Zone 1: Computer Science

Q1 ROBOTICS

Citations: 0

Abstract


RING#: PR-By-PE Global Localization With Roto-Translation Equivariant Gram Learning

Global localization using onboard perception sensors, such as cameras and light detection and ranging (LiDAR) sensors, is crucial in autonomous driving and robotics applications when Global Positioning System (GPS) signals are unreliable. Most approaches achieve global localization by sequential place recognition (PR) and pose estimation (PE). Some methods train separate models for each task, while others employ a single model with dual heads, trained jointly with separate task-specific losses. However, the accuracy of localization depends heavily on the success of PR, which often fails in scenarios with significant changes in viewpoint or environmental appearance. A failed PR in turn renders the final PE ineffective. To address this, we introduce a new paradigm, PR-by-PE localization, which bypasses the need for separate PR by deriving it directly from PE. We propose RING#, an end-to-end PR-by-PE localization network that operates in the bird's-eye-view (BEV) space and is compatible with both vision and LiDAR sensors. RING# incorporates a novel design that learns two equivariant representations from BEV features, enabling globally convergent and computationally efficient PE. Comprehensive experiments on the North Campus Long-Term Vision and LiDAR (NCLT) and Oxford datasets show that RING# outperforms state-of-the-art methods in both vision and LiDAR modalities, validating the effectiveness of the proposed approach.
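The PR-by-PE idea described in the abstract can be illustrated with a toy sketch. This is not the RING# network: it assumes small 2D grids as stand-ins for BEV feature maps, handles translation only, and replaces the learned equivariant representations with a brute-force circular correlation search. The names `shift`, `estimate_pose`, and `localize` are hypothetical and chosen for illustration. The point is only that the place is selected by the best pose-estimation score, with no separate place-recognition step.

```python
from typing import List, Tuple

Grid = List[List[float]]  # toy stand-in for a BEV feature map (assumption)


def shift(grid: Grid, dx: int, dy: int) -> Grid:
    """Circularly shift a grid by dx columns and dy rows."""
    h, w = len(grid), len(grid[0])
    return [[grid[(r - dy) % h][(c - dx) % w] for c in range(w)] for r in range(h)]


def estimate_pose(query: Grid, ref: Grid) -> Tuple[Tuple[int, int], float]:
    """Exhaustive translation search: return the best shift and its correlation score."""
    h, w = len(ref), len(ref[0])
    best_shift, best_score = (0, 0), float("-inf")
    for dy in range(h):
        for dx in range(w):
            moved = shift(ref, dx, dy)
            # Correlation between the query and the shifted reference.
            score = sum(q * m for qr, mr in zip(query, moved) for q, m in zip(qr, mr))
            if score > best_score:
                best_shift, best_score = (dx, dy), score
    return best_shift, best_score


def localize(query: Grid, database: List[Grid]) -> Tuple[int, Tuple[int, int], float]:
    """PR-by-PE: run PE against every map entry; the best PE score selects the place."""
    results = [estimate_pose(query, ref) for ref in database]
    place = max(range(len(database)), key=lambda i: results[i][1])
    (dx, dy), score = results[place]
    return place, (dx, dy), score


# Two toy map entries; the query is entry 1 shifted by (dx=1, dy=2).
database = [
    [[0, 1, 0, 0], [0, 0, 0, 0], [1, 0, 0, 0], [0, 0, 1, 0]],
    [[1, 0, 0, 0], [0, 2, 0, 0], [0, 0, 0, 3], [0, 0, 0, 0]],
]
query = shift(database[1], 1, 2)
place, offset, score = localize(query, database)
print(place, offset, score)
```

In this sketch the exhaustive search over all shifts plays the role of a globally convergent pose estimator: it cannot get stuck in a local optimum, and its peak score doubles as the place-matching score.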

求助全文

通过发布文献求助,成功后即可免费获取论文全文。

去求助

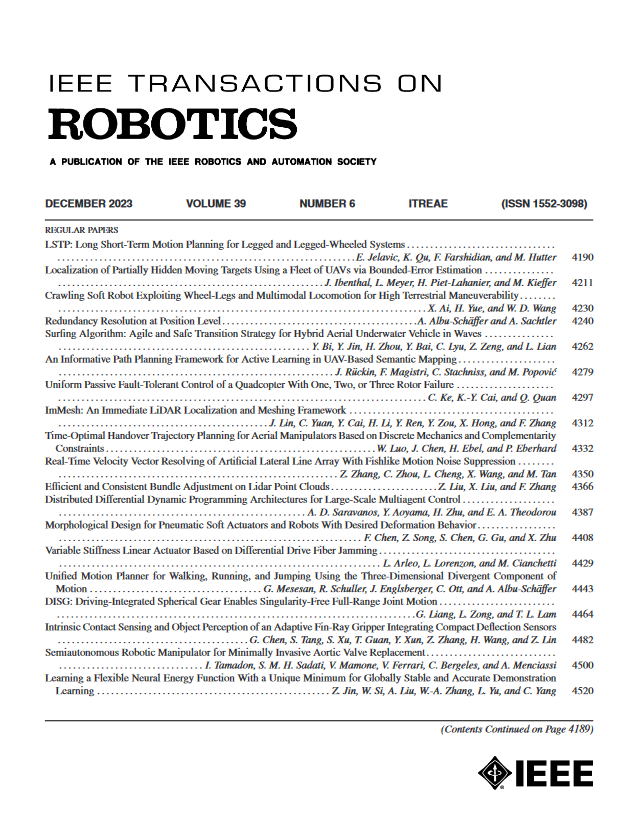

Source journal

IEEE Transactions on Robotics

Engineering and Technology: Robotics

CiteScore

14.90

Self-citation rate

5.10%

Articles published

259

Review time

6.0 months

About the journal:

The IEEE Transactions on Robotics (T-RO) is dedicated to publishing fundamental papers covering all facets of robotics, drawing on interdisciplinary approaches from computer science, control systems, electrical engineering, mathematics, mechanical engineering, and beyond. From industrial applications to service and personal assistants, surgical operations to space, underwater, and remote exploration, robots and intelligent machines play pivotal roles across various domains, including entertainment, safety, search and rescue, military applications, agriculture, and intelligent vehicles.

Special emphasis is placed on intelligent machines and systems designed for unstructured environments, where a significant portion of the environment remains unknown and beyond direct sensing or control.
