AirSLAM: An Efficient and Illumination-Robust Point-Line Visual SLAM System

IF 10.5

Zone 1, Computer Science

Q1 ROBOTICS

Citations: 0

Abstract

In this article, we present an efficient visual simultaneous localization and mapping (SLAM) system designed to tackle both short-term and long-term illumination challenges. Our system adopts a hybrid approach that combines deep learning techniques for feature detection and matching with traditional back-end optimization methods. Specifically, we propose a unified convolutional neural network that simultaneously extracts keypoints and structural lines. These features are then associated, matched, triangulated, and optimized in a coupled manner. In addition, we introduce a lightweight relocalization pipeline that reuses the built map, where keypoints, lines, and a structure graph are used to match the query frame with the map. To enhance the applicability of the proposed system to real-world robots, we deploy and accelerate the feature detection and matching networks using C++ and NVIDIA TensorRT. Extensive experiments conducted on various datasets demonstrate that our system outperforms other state-of-the-art visual SLAM systems in illumination-challenging environments. Efficiency evaluations show that our system can run at a rate of $73\,\mathrm{Hz}$ on a PC and $40\,\mathrm{Hz}$ on an embedded platform.
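
The abstract mentions that matched features are triangulated before optimization. As a rough illustration of that one step for a keypoint only, the sketch below applies standard two-view linear (DLT) triangulation with NumPy. It is not taken from AirSLAM: the function names, the intrinsics `K`, the 0.1 m baseline, and the test point are all assumptions chosen for the example, and line triangulation and the coupled point-line optimization are not shown.

```python
import numpy as np

def triangulate_point(P1, P2, x1, x2):
    """Linear (DLT) triangulation of one 3D point from two pixel observations.

    P1, P2: 3x4 projection matrices; x1, x2: (u, v) pixel coordinates.
    """
    # Each observation contributes two linear constraints on the homogeneous point X.
    A = np.stack([
        x1[0] * P1[2] - P1[0],
        x1[1] * P1[2] - P1[1],
        x2[0] * P2[2] - P2[0],
        x2[1] * P2[2] - P2[1],
    ])
    # The solution is the right singular vector with the smallest singular value.
    _, _, vt = np.linalg.svd(A)
    X = vt[-1]
    return X[:3] / X[3]

def project(P, X):
    """Project a 3D point to pixel coordinates with projection matrix P."""
    x = P @ np.append(X, 1.0)
    return x[:2] / x[2]

# Hypothetical pinhole intrinsics and a stereo-like pair (assumed values).
K = np.array([[500.0, 0.0, 320.0],
              [0.0, 500.0, 240.0],
              [0.0, 0.0, 1.0]])
P1 = K @ np.hstack([np.eye(3), np.zeros((3, 1))])
# Second camera sits 0.1 m to the right of the first, so t = (-0.1, 0, 0).
P2 = K @ np.hstack([np.eye(3), np.array([[-0.1], [0.0], [0.0]])])

X_true = np.array([0.2, -0.1, 2.0])
X_est = triangulate_point(P1, P2, project(P1, X_true), project(P2, X_true))
```

With noise-free observations, `X_est` should match `X_true` up to floating-point error; in a real pipeline the linear estimate would typically serve only as an initial value for nonlinear refinement.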

Source journal

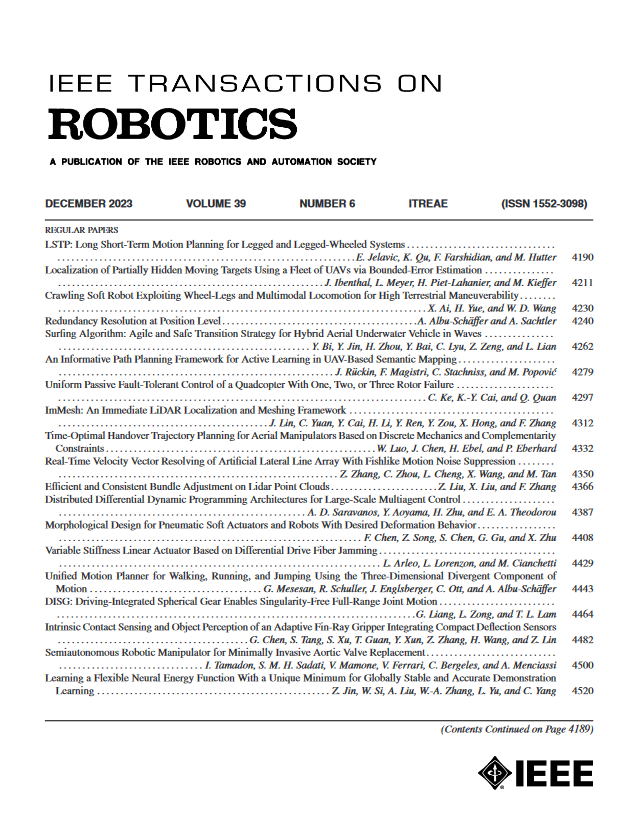

IEEE Transactions on Robotics

Engineering & Technology - Robotics

CiteScore

14.90

Self-citation rate

5.10%

Articles published

259

Review time

6.0 months

Journal description:

The IEEE Transactions on Robotics (T-RO) is dedicated to publishing fundamental papers covering all facets of robotics, drawing on interdisciplinary approaches from computer science, control systems, electrical engineering, mathematics, mechanical engineering, and beyond. From industrial applications to service and personal assistants, surgical operations to space, underwater, and remote exploration, robots and intelligent machines play pivotal roles across various domains, including entertainment, safety, search and rescue, military applications, agriculture, and intelligent vehicles.

Special emphasis is placed on intelligent machines and systems designed for unstructured environments, where a significant portion of the environment remains unknown and beyond direct sensing or control.
