Visual-Tactile Grasp Dataset and Grasp Margin Matrix Analysis for Stability Evaluation

IF 10.5

Zone 1: Computer Science

Q1 ROBOTICS

IEEE Transactions on Robotics

Pub Date : 2026-01-01

Epub Date: 2026-03-10

DOI:10.1109/TRO.2026.3672523

Citations: 0

Abstract

Robotic grasping plays a critical role in robotics, with widespread applications across various domains. The stability of a grasp is crucial for subsequent operations, making accurate and robust stability assessments essential. While existing methods predominantly rely on visual data, the absence of tactile signals and quantitative stability metrics often leads to unreliable grasp execution. This article makes three fundamental contributions to address these limitations. First, a visual-tactile grasp dataset generation framework is proposed using NVIDIA Isaac Sim, which synthesizes large-scale multimodal grasping scenarios with stability degree labels through a physics-informed three-stage pipeline. Second, this article introduces the grasp margin matrix, a novel computational model that quantifies directional force margins and rotational moment margins to evaluate grasp robustness. This matrix simplifies traditional grasp wrench space analysis by decoupling complex friction cone calculations into interpretable mechanical metrics, achieving 87.72% stability classification accuracy via our stability assessment network. Third, a vision-based grasp perception network that predicts contact force distributions and object centroids without physical tactile sensors is developed, enabling real-time stability inference through the grasp margin matrix. This perception–evaluation–decision integration links grasp pose generation with stability assessment to inform grasp selection, achieving an 88.15% success rate in real robotic grasping scenarios. Experimental validations demonstrate significant improvements compared to force closure methods, with accuracy improving from 56.52% to 87.72%, offering both theoretical rigor and practical feasibility for industrial robotic systems.
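The abstract does not define the grasp margin matrix, so the following is not the authors' model. As a rough illustration of what a "force margin" at a contact can mean, here is a minimal sketch of the standard Coulomb friction-cone check: a contact stays inside its friction cone while the tangential force magnitude is below `mu` times the normal force, and the difference can be read as a slip margin. The function name `friction_margin`, the friction coefficient, and the sample contact forces are all hypothetical values chosen for illustration.

```python
import math


def friction_margin(normal_force, tangential_force, mu):
    """Coulomb friction-cone margin for one contact (illustrative only).

    Returns mu * f_n - |f_t|. A positive value means the contact force lies
    inside the friction cone; a negative value means the contact would slip.
    """
    fx, fy = tangential_force
    return mu * normal_force - math.hypot(fx, fy)


# Hypothetical contacts: (normal force in N, tangential force vector in N).
contacts = [
    (5.0, (1.0, 0.0)),  # small tangential load
    (5.0, (3.0, 4.0)),  # tangential load larger than friction can resist
]

# Assumed friction coefficient, not taken from the paper.
margins = [friction_margin(fn, ft, mu=0.5) for fn, ft in contacts]
for m in margins:
    print("margin:", m, "stable" if m > 0 else "slipping")
```

A per-contact scalar like this ignores moments and the coupling between contacts, which is the part the grasp margin matrix is said to address through directional force and rotational moment margins.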

求助全文

约1分钟内获得全文

求助全文

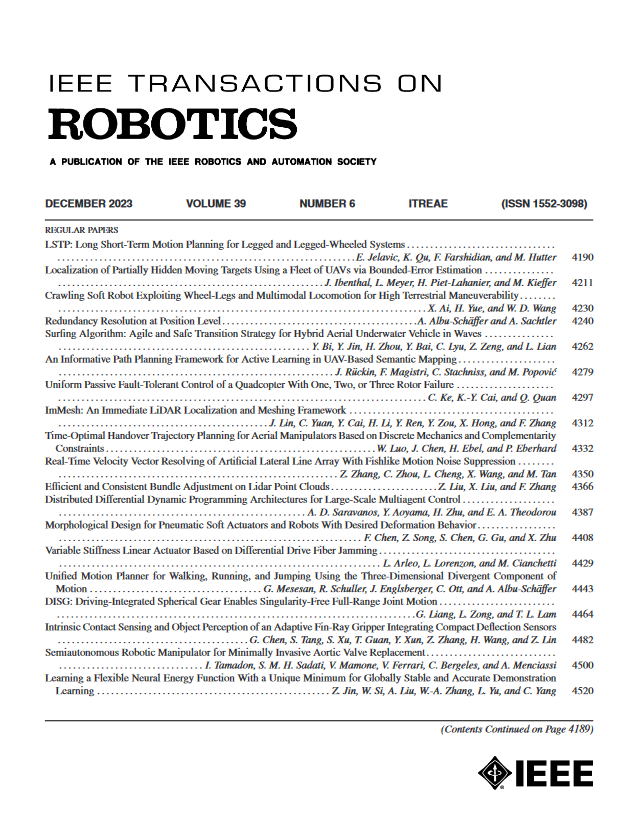

Source journal

IEEE Transactions on Robotics

Engineering & Technology: Robotics

CiteScore

14.90

Self-citation rate

5.10%

Articles published

259

Review time

6.0 months

Journal description:

The IEEE Transactions on Robotics (T-RO) is dedicated to publishing fundamental papers covering all facets of robotics, drawing on interdisciplinary approaches from computer science, control systems, electrical engineering, mathematics, mechanical engineering, and beyond. From industrial applications to service and personal assistants, surgical operations to space, underwater, and remote exploration, robots and intelligent machines play pivotal roles across various domains, including entertainment, safety, search and rescue, military applications, agriculture, and intelligent vehicles.

Special emphasis is placed on intelligent machines and systems designed for unstructured environments, where a significant portion of the environment remains unknown and beyond direct sensing or control.
