Actor–Critic Model Predictive Control: Differentiable Optimization Meets Reinforcement Learning for Agile Flight

Impact factor: 10.5

Zone 1: Computer Science

Q1: Robotics

Citations: 0

Abstract

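A key open challenge in agile quadrotor flight is how to combine the flexibility and task-level generality of model-free reinforcement learning (RL) with the structure and online replanning capabilities of model predictive control (MPC), aiming to leverage their complementary strengths in dynamic and uncertain environments. This article provides an answer by introducing a new framework called Actor-Critic MPC. The key idea is to embed a differentiable MPC within an actor-critic RL framework. This integration allows short-horizon predictive optimization of control actions through the MPC, while leveraging RL for end-to-end learning and long-horizon exploration. Through various ablation studies conducted in the context of agile quadrotor racing, we expose the benefits of the proposed approach: it achieves better out-of-distribution behavior, better robustness to changes in the quadrotor's dynamics, and improved sample efficiency. Additionally, we conduct an empirical analysis using a quadrotor platform that reveals a relationship between the critic's learned value function and the cost function of the differentiable MPC, providing a deeper understanding of the interplay between the critic's value and the MPC cost function. Finally, we validate our method in a drone racing task on different tracks, in both simulation and the real world. Our method achieves the same superhuman performance as state-of-the-art model-free RL, reaching speeds of up to 21 m/s. We show that the proposed architecture can achieve real-time control performance, learn complex behaviors via trial and error, and retain the predictive properties of the MPC to better handle out-of-distribution behavior.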

本文章由计算机程序翻译,如有差异,请以英文原文为准。

求助全文

约1分钟内获得全文

求助全文

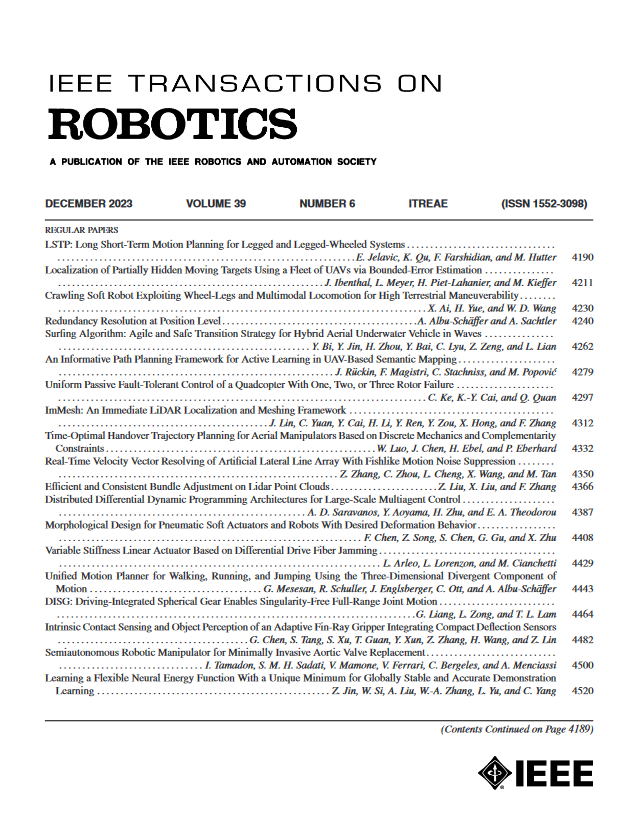

Source journal: IEEE Transactions on Robotics (Engineering & Technology, Robotics)

CiteScore: 14.90

Self-citation rate: 5.10%

Articles published: 259

Review time: 6.0 months

Journal description:

The IEEE Transactions on Robotics (T-RO) is dedicated to publishing fundamental papers covering all facets of robotics, drawing on interdisciplinary approaches from computer science, control systems, electrical engineering, mathematics, mechanical engineering, and beyond. From industrial applications to service and personal assistants, surgical operations to space, underwater, and remote exploration, robots and intelligent machines play pivotal roles across various domains, including entertainment, safety, search and rescue, military applications, agriculture, and intelligent vehicles.

Special emphasis is placed on intelligent machines and systems designed for unstructured environments, where a significant portion of the environment remains unknown and beyond direct sensing or control.
