DexSim2Real$^{\mathbf{2}}$: Building Explicit World Model for Precise Articulated Object Dexterous Manipulation

IF 10.5

Zone 1: Computer Science

Q1 ROBOTICS

Citations: 0

Abstract

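Articulated objects are ubiquitous in daily life. In this article, we present DexSim2Real$^{\mathbf{2}}$, a novel framework for goal-conditioned articulated object manipulation. The core of our framework is constructing an explicit world model of unseen articulated objects through active interactions, which enables sampling-based model predictive control to plan trajectories that achieve different goals without requiring demonstrations or reinforcement learning. The framework first predicts an interaction using an affordance network trained on either self-supervised interaction data or videos of human manipulation. After executing the interaction on the real robot to move the object parts, we propose a novel modeling pipeline based on 3D AI-generated content (AIGC) to build a digital twin of the object in simulation from multiple frames of observations. For dexterous hands, we use eigengrasps to reduce the action dimension, enabling more efficient trajectory search. Experiments validate the framework's effectiveness for precise manipulation with a suction gripper, a two-finger gripper, and two dexterous hands. The generalizability of the explicit world model also supports advanced manipulation strategies, such as manipulating with tools.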
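The eigengrasp idea mentioned in the abstract can be illustrated with a small sketch. The abstract does not say how the eigengrasps are computed, so this example assumes the common approach of running principal component analysis (PCA) on a set of recorded hand joint configurations. The function names, the 16-joint hand, the choice of 2 components, and the synthetic data are all hypothetical and not taken from the paper.

```python
import numpy as np


def fit_eigengrasps(joint_poses, k):
    """Fit k eigengrasps (principal directions) to hand poses.

    joint_poses: array of shape (n_samples, n_joints).
    Returns (mean, basis), where basis has shape (k, n_joints)
    and its rows are orthonormal.
    """
    mean = joint_poses.mean(axis=0)
    centered = joint_poses - mean
    # Rows of vt are the principal directions of the centered data.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return mean, vt[:k]


def to_joint_angles(coeffs, mean, basis):
    """Map low-dimensional eigengrasp coefficients to joint angles."""
    return mean + coeffs @ basis


def to_coeffs(joint_angles, mean, basis):
    """Project joint angles onto the eigengrasp basis."""
    return (joint_angles - mean) @ basis.T


# Synthetic stand-in for recorded grasps (assumption: real data would
# come from a grasp dataset or teleoperation logs).
rng = np.random.default_rng(0)
n_joints, k = 16, 2
latent = rng.normal(size=(500, k))
mixing = rng.normal(size=(k, n_joints))
grasps = 0.5 + latent @ mixing

mean, basis = fit_eigengrasps(grasps, k)

# A planner can now sample k numbers instead of n_joints numbers.
sample = rng.uniform(-1.0, 1.0, size=k)
q = to_joint_angles(sample, mean, basis)
```

Under these assumptions, a sampling-based planner would search over the `k` coefficients rather than all `n_joints` angles, which is the dimensionality reduction the abstract refers to.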


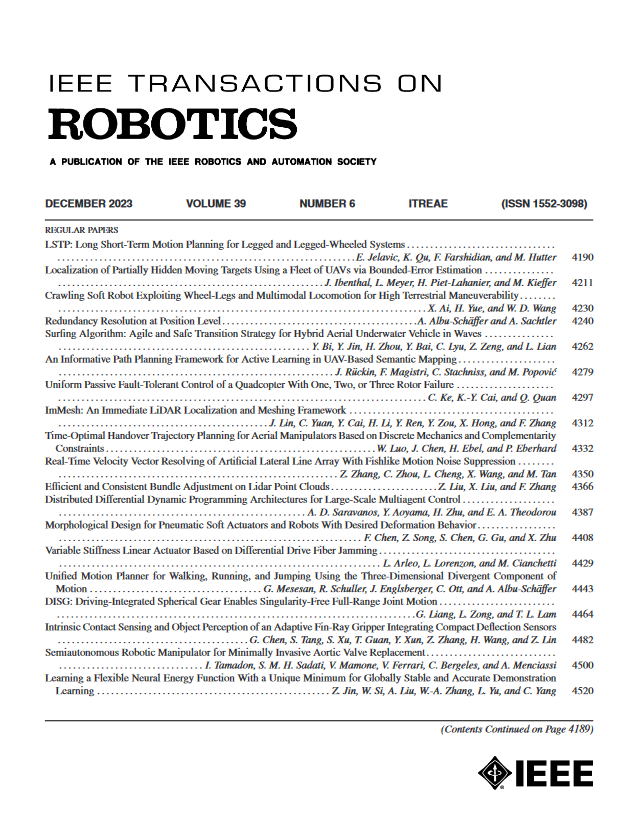

Source journal

IEEE Transactions on Robotics

Engineering and Technology: Robotics

CiteScore

14.90

Self-citation rate

5.10%

Articles published

259

Review time

6.0 months

About the journal:

The IEEE Transactions on Robotics (T-RO) is dedicated to publishing fundamental papers covering all facets of robotics, drawing on interdisciplinary approaches from computer science, control systems, electrical engineering, mathematics, mechanical engineering, and beyond. From industrial applications to service and personal assistants, surgical operations to space, underwater, and remote exploration, robots and intelligent machines play pivotal roles across various domains, including entertainment, safety, search and rescue, military applications, agriculture, and intelligent vehicles.

Special emphasis is placed on intelligent machines and systems designed for unstructured environments, where a significant portion of the environment remains unknown and beyond direct sensing or control.
