SplitAUM: Auxiliary Model-Based Label Inference Attack Against Split Learning

IF 4.7

Zone 2: Computer Science

Q1 COMPUTER SCIENCE, INFORMATION SYSTEMS

IEEE Transactions on Network and Service Management

Pub Date : 2024-10-07

DOI:10.1109/TNSM.2024.3474717

Citations: 0

Abstract

Split learning has emerged as a practical and efficient privacy-preserving distributed machine learning paradigm. Understanding its privacy risks is critical for applying it in privacy-sensitive scenarios. However, previous attacks against split learning generally depended on unduly strong assumptions or on non-standard settings that favor the attacker. This paper proposes a novel auxiliary model-based label inference attack framework against split learning, named...

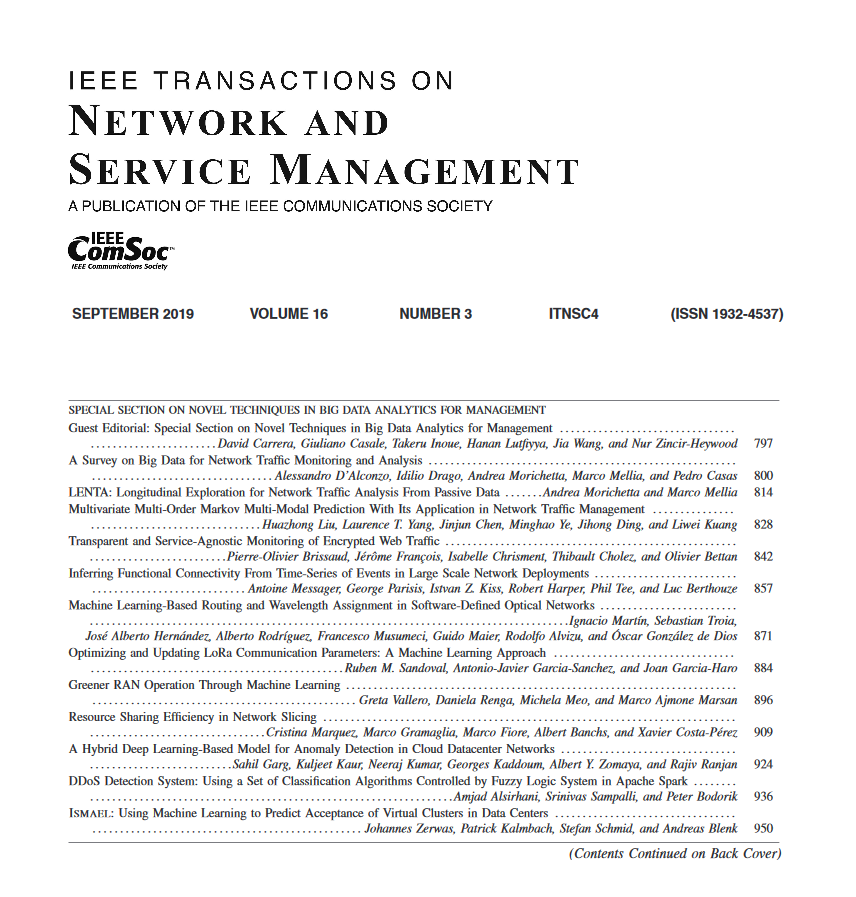

Source journal

IEEE Transactions on Network and Service Management

Computer Science-Computer Networks and Communications

CiteScore

9.30

Self-citation rate

15.10%

Articles published

325

About the journal:

IEEE Transactions on Network and Service Management will publish (online only) peer-reviewed, archival-quality papers that advance the state of the art and practical applications of network and service management. Theoretical research contributions (presenting new concepts and techniques) and applied contributions (reporting on experiences and experiments with actual systems) will be encouraged. These transactions will focus on the key technical issues related to: Management Models, Architectures and Frameworks; Service Provisioning, Reliability and Quality Assurance; Management Functions; Enabling Technologies; Information and Communication Models; Policies; Applications and Case Studies; Emerging Technologies and Standards.