O-DQR: A Multi-Agent Deep Reinforcement Learning for Multihop Routing in Overlay Networks

IF 4.7

Zone 2 (CAS journal ranking): Computer Science

Q1 COMPUTER SCIENCE, INFORMATION SYSTEMS

IEEE Transactions on Network and Service Management

Pub Date : 2024-10-23

DOI:10.1109/TNSM.2024.3485196

Citations: 0

Abstract

This paper addresses the problem of dynamic packet routing in overlay networks using a fully decentralized Multi-Agent Deep Reinforcement Learning (MA-DRL) approach. Overlay networks are built as a virtual topology on top of an Internet Service Provider (ISP) underlay network, whose nodes run a fixed, single-path routing policy decided by the ISP. In such a scenario, the underlay topology and traffic are unknown to the overlay network. In this setting, we propose O-DQR, an MA-DRL framework working under Distributed Training Decentralized Execution (DTDE), where the agents are allowed to communicate only with their immediate overlay neighbors during both training and inference. We address three fundamental aspects of deploying such a solution: (i) performance (delay, loss rate), where the framework can achieve near-optimal performance; (ii) control overhead, which is reduced by enabling the agents to send control packets dynamically, only when needed; and (iii) training convergence stability, which is improved by a guided reward mechanism that dynamically learns the penalty applied when a packet is lost. Finally, we evaluate our solution through extensive experimentation in a realistic network simulation in both offline training and continual learning settings.
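To make the neighbor-only communication pattern in the abstract concrete, the following is a minimal sketch of classic tabular Q-routing, not the O-DQR method itself. O-DQR uses deep networks and learns the loss penalty dynamically; here the table, the fixed `LOSS_PENALTY`, and all names (`QRoutingAgent`, `update`, `choose_next_hop`) are assumptions made purely for illustration.

```python
import random
from collections import defaultdict

# Assumed fixed penalty for a lost packet; the paper learns this value instead.
LOSS_PENALTY = 10.0


class QRoutingAgent:
    """One overlay node running tabular Q-routing (illustrative stand-in only)."""

    def __init__(self, node_id, neighbors, lr=0.5, epsilon=0.1):
        self.node_id = node_id
        self.neighbors = list(neighbors)
        self.lr = lr
        self.epsilon = epsilon
        # q[dest][neighbor] = estimated delay to reach dest via that neighbor
        self.q = defaultdict(lambda: {n: 0.0 for n in self.neighbors})

    def best_estimate(self, dest):
        """Value this node reports to a neighbor that forwarded a packet to it."""
        if dest == self.node_id:
            return 0.0
        return min(self.q[dest].values())

    def choose_next_hop(self, dest, rng=random):
        """Epsilon-greedy choice among immediate overlay neighbors."""
        if rng.random() < self.epsilon:
            return rng.choice(self.neighbors)
        estimates = self.q[dest]
        return min(estimates, key=estimates.get)

    def update(self, dest, neighbor, hop_delay, neighbor_estimate, lost=False):
        """Move the estimate toward the observed hop delay plus the neighbor's
        own estimate, or toward the penalty if the packet was lost."""
        target = LOSS_PENALTY if lost else hop_delay + neighbor_estimate
        old = self.q[dest][neighbor]
        self.q[dest][neighbor] = old + self.lr * (target - old)


# Usage: node A learns that reaching C through B is cheaper than the direct link.
agent_a = QRoutingAgent("A", ["B", "C"], epsilon=0.0)
for _ in range(20):
    agent_a.update("C", "C", hop_delay=5.0, neighbor_estimate=0.0)
    agent_a.update("C", "B", hop_delay=1.0, neighbor_estimate=1.0)
next_hop = agent_a.choose_next_hop("C")
```

In this sketch each agent only ever uses information from the neighbor it forwarded to (`neighbor_estimate`), which mirrors the constraint that agents exchange control information with immediate overlay neighbors only.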


Source journal

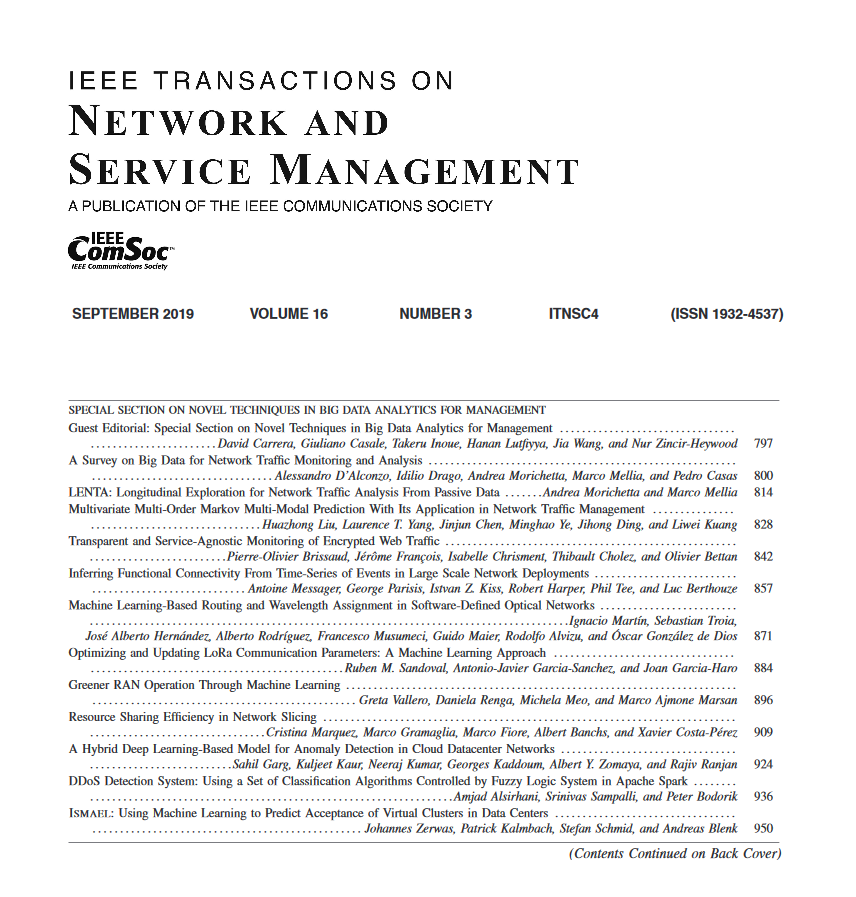

IEEE Transactions on Network and Service Management

Computer Science-Computer Networks and Communications

CiteScore

9.30

Self-citation rate

15.10%

Articles published

325

About the journal:

IEEE Transactions on Network and Service Management publishes (online only) peer-reviewed, archival-quality papers that advance the state of the art and practical applications of network and service management. Theoretical research contributions (presenting new concepts and techniques) and applied contributions (reporting on experiences and experiments with actual systems) are encouraged. The journal focuses on the key technical issues related to: Management Models, Architectures and Frameworks; Service Provisioning, Reliability and Quality Assurance; Management Functions; Enabling Technologies; Information and Communication Models; Policies; Applications and Case Studies; Emerging Technologies and Standards.
