LTACL: long-tail awareness contrastive learning for distantly supervised relation extraction

IF 5

Zone 2, Computer Science

Q1 COMPUTER SCIENCE, ARTIFICIAL INTELLIGENCE

Citations: 0

Abstract

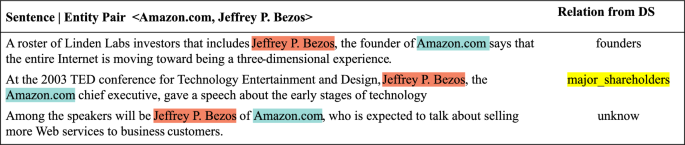

Distantly supervised relation extraction automatically annotates large corpora by classifying a bag of sentences that share the same two entities and the relation between them. Recent work achieves strong performance by adopting contrastive learning to obtain instance representations efficiently under the multi-instance learning framework. Although these methods weaken the impact of noisy labels, they ignore the long-tail distribution problem in distantly supervised datasets and fail to capture the mutual information between different parts. We are thus motivated to tackle these issues and establish a long-tail awareness contrastive learning method that uses long-tail data efficiently. Our model treats the major and tail parts differently by adopting hyper-augmentation strategies. Moreover, the model provides various views by constructing novel positive and negative pairs in contrastive learning, yielding better representations across the different parts. Experimental results on the NYT10 dataset demonstrate that our model surpasses the existing SOTA by more than 2.61% in AUC on relation extraction. On the manually annotated evaluation datasets NYT10m and Wiki20m, our method obtains competitive results, achieving AUC scores of 59.42% and 79.19% on relation extraction, respectively. Extensive discussions further confirm the effectiveness of our approach.

Source journal

Complex & Intelligent Systems

COMPUTER SCIENCE, ARTIFICIAL INTELLIGENCE

CiteScore

9.60

Self-citation rate

10.30%

Publication count

297

About the journal:

Complex & Intelligent Systems aims to provide a forum for presenting and discussing novel approaches, tools and techniques meant for attaining a cross-fertilization between the broad fields of complex systems, computational simulation, and intelligent analytics and visualization. The transdisciplinary research that the journal focuses on will expand the boundaries of our understanding by investigating the principles and processes that underlie many of the most profound problems facing society today.
