Interpretable and explainable machine learning: A methods‐centric overview with concrete examples

IF 11.7

Zone 2, Computer Science

Q1 COMPUTER SCIENCE, ARTIFICIAL INTELLIGENCE

Wiley Interdisciplinary Reviews-Data Mining and Knowledge Discovery

Pub Date: 2023-02-28

DOI:10.1002/widm.1493

Citations: 7

Abstract

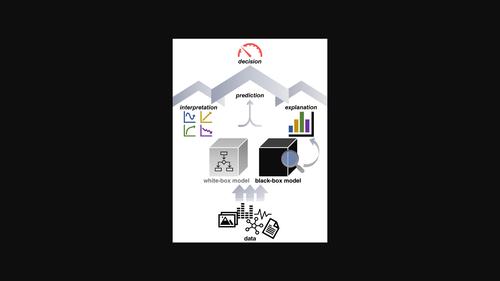

Interpretability and explainability are crucial for machine learning (ML) and statistical applications in medicine, economics, law, and natural sciences and form an essential principle for ML model design and development. Although interpretability and explainability have escaped a precise and universal definition, many models and techniques motivated by these properties have been developed over the last 30 years, with the focus currently shifting toward deep learning. We will consider concrete examples of state‐of‐the‐art, including specially tailored rule‐based, sparse, and additive classification models, interpretable representation learning, and methods for explaining black‐box models post hoc. The discussion will emphasize the need for and relevance of interpretability and explainability, the divide between them, and the inductive biases behind the presented “zoo” of interpretable models and explanation methods.

Source journal

Wiley Interdisciplinary Reviews-Data Mining and Knowledge Discovery

COMPUTER SCIENCE, ARTIFICIAL INTELLIGENCE; COMPUTER SCIENCE, THEORY & METHODS

CiteScore

22.70

Self-citation rate

2.60%

Publication volume

39

Review time

>12 weeks

Journal description:

The goals of Wiley Interdisciplinary Reviews-Data Mining and Knowledge Discovery (WIREs DMKD) are multifaceted. Firstly, the journal aims to provide a comprehensive overview of the current state of data mining and knowledge discovery by featuring ongoing reviews authored by leading researchers. Secondly, it seeks to highlight the interdisciplinary nature of the field by presenting articles from diverse perspectives, covering various application areas such as technology, business, healthcare, education, government, society, and culture. Thirdly, WIREs DMKD endeavors to keep pace with the rapid advancements in data mining and knowledge discovery through regular content updates. Lastly, the journal strives to promote active engagement in the field by presenting its accomplishments and challenges in an accessible manner to a broad audience. The content of WIREs DMKD is intended to benefit upper-level undergraduate and postgraduate students, teaching and research professors in academic programs, as well as scientists and research managers in industry.
