Real-time neural soft shadow synthesis from hard shadows

IF 2.2

CAS Zone 4, Computer Science

Q2 COMPUTER SCIENCE, SOFTWARE ENGINEERING

Citations: 0

Abstract

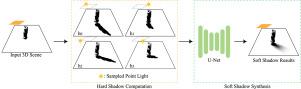



Soft shadows play a crucial role in enhancing visual realism in real-time rendering. Although traditional shadow mapping techniques offer high efficiency, they often suffer from artifacts and limited quality. In contrast, ray tracing can produce high-fidelity soft shadows but incurs substantial computational cost. In this paper, we propose a general-purpose, real-time soft shadow generation method based on neural networks. To encode shadow geometry, we feed hard shadows produced by shadow mapping into our network as input; these effectively capture the spatial layout of shadow positions and contours. A lightweight U-Net architecture then refines this input to synthesize high-quality soft shadows in real time. The generated shadows closely approximate ray-traced references in visual fidelity. Compared to existing learning-based methods, our approach produces higher-quality soft shadows and generalizes better across diverse scenes. Furthermore, it requires no scene-specific precomputation, making it directly applicable to practical real-time rendering scenarios.
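To make the two-stage pipeline in the abstract concrete, here is a minimal NumPy sketch under stated assumptions. It is not the authors' implementation. Stage one is the classic shadow-map depth test that yields a binary hard-shadow mask. Stage two is a hypothetical, untrained, one-level U-Net-style pass (encoder conv, 2x downsample, bottleneck conv, 2x upsample, skip concatenation, output conv, sigmoid). It only illustrates how a hard mask flows through such an architecture to a per-pixel visibility map. The function names (`hard_shadow_mask`, `conv3x3`, `tiny_unet`), the channel count, the random weights, and the toy scene are all illustrative assumptions. The abstract does not specify the real network's depth, extra inputs, or training.

```python
import numpy as np


def hard_shadow_mask(shadow_map, light_uv, light_depth, bias=1e-3):
    """Classic shadow-map test: 1.0 where a receiver is occluded, else 0.0.

    shadow_map : (H, W) depth of the closest surface seen from the light
    light_uv   : (N, 2) receiver positions in light texture space, in [0, 1)
    light_depth: (N,) receiver depths measured from the light
    """
    h, w = shadow_map.shape
    # Nearest-texel lookup: map [0, 1) texture coordinates to indices.
    cols = np.clip((light_uv[:, 0] * w).astype(int), 0, w - 1)
    rows = np.clip((light_uv[:, 1] * h).astype(int), 0, h - 1)
    occluder_depth = shadow_map[rows, cols]
    # In shadow if a surface nearer to the light blocks the receiver.
    return (light_depth > occluder_depth + bias).astype(np.float32)


def conv3x3(x, k):
    """'Same' 3x3 convolution (cross-correlation) of (C_in, H, W) with (C_out, C_in, 3, 3)."""
    _, h, w = x.shape
    xp = np.pad(x, ((0, 0), (1, 1), (1, 1)), mode="edge")
    out = np.zeros((k.shape[0], h, w), dtype=np.float32)
    for dy in range(3):
        for dx in range(3):
            patch = xp[:, dy:dy + h, dx:dx + w]  # (C_in, H, W)
            out += np.einsum("oc,chw->ohw", k[:, :, dy, dx], patch)
    return out


def down2(x):
    """2x2 average pooling on (C, H, W); H and W must be even."""
    c, h, w = x.shape
    return x.reshape(c, h // 2, 2, w // 2, 2).mean(axis=(2, 4))


def up2(x):
    """Nearest-neighbour 2x upsampling on (C, H, W)."""
    return x.repeat(2, axis=1).repeat(2, axis=2)


def tiny_unet(hard, weights):
    """Hypothetical one-level U-Net: hard mask (H, W) -> visibility map (H, W)."""
    x0 = np.maximum(conv3x3(hard[None], weights["enc"]), 0.0)  # (F, H, W), ReLU
    x1 = np.maximum(conv3x3(down2(x0), weights["mid"]), 0.0)   # (F, H/2, W/2)
    x2 = np.concatenate([up2(x1), x0], axis=0)                 # skip link: (2F, H, W)
    y = conv3x3(x2, weights["dec"])[0]                         # (H, W)
    return 1.0 / (1.0 + np.exp(-y))                            # values in (0, 1)


# Toy scene (assumed): a square occluder at depth 0.3 above a receiver plane at 0.9.
H = W = 16
shadow_map = np.ones((H, W), dtype=np.float32)
shadow_map[4:12, 4:12] = 0.3
ys, xs = np.mgrid[0:H, 0:W]
uv = np.stack([(xs.ravel() + 0.5) / W, (ys.ravel() + 0.5) / H], axis=1)
depth = np.full(H * W, 0.9, dtype=np.float32)
hard = hard_shadow_mask(shadow_map, uv, depth).reshape(H, W)

# Untrained placeholder weights with F = 4 feature channels (illustrative only).
rng = np.random.default_rng(0)
weights = {
    "enc": rng.normal(scale=0.1, size=(4, 1, 3, 3)).astype(np.float32),
    "mid": rng.normal(scale=0.1, size=(4, 4, 3, 3)).astype(np.float32),
    "dec": rng.normal(scale=0.1, size=(1, 8, 3, 3)).astype(np.float32),
}
soft = tiny_unet(hard, weights)
```

Average pooling and nearest-neighbour upsampling keep the sketch dependency-free. A practical version would presumably use a deep-learning framework, more levels and channels, and weights fitted so that `soft` matches ray-traced soft shadows. With the random weights above, `soft` has the right shape and range but is not a meaningful shadow.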


Source journal

Graphical Models

Engineering & Technology: Computer Science, Software Engineering

CiteScore

3.60

Self-citation rate

5.90%

Articles published

15

Review time

47 days

Journal description:

Graphical Models is recognized internationally as a highly rated, top tier journal and is focused on the creation, geometric processing, animation, and visualization of graphical models and on their applications in engineering, science, culture, and entertainment. GMOD provides its readers with thoroughly reviewed and carefully selected papers that disseminate exciting innovations, that teach rigorous theoretical foundations, that propose robust and efficient solutions, or that describe ambitious systems or applications in a variety of topics.

We invite papers in five categories: research (contributions of novel theoretical or practical approaches or solutions), survey (opinionated views of the state-of-the-art and challenges in a specific topic), system (the architecture and implementation details of an innovative architecture for a complete system that supports model/animation design, acquisition, analysis, visualization), application (description of a novel application of known techniques and evaluation of its impact), or lecture (an elegant and inspiring perspective on previously published results that clarifies them and teaches them in a new way).

GMOD offers its authors an accelerated review, feedback from experts in the field, immediate online publication of accepted papers, no restriction on color and length (when justified by the content) in the online version, and a broad promotion of published papers. A prestigious group of editors selected from among the premier international researchers in their fields oversees the review process.
