DDD++: Exploiting Density map consistency for Deep Depth estimation in indoor environments

IF 2.2

Zone 4: Computer Science

Q2 COMPUTER SCIENCE, SOFTWARE ENGINEERING

Citations: 0

Abstract

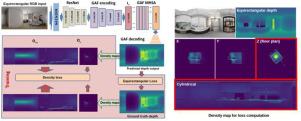

DDD++: Exploiting Density map consistency for Deep Depth estimation in indoor environments

We introduce a novel deep neural network designed for fast and structurally consistent monocular 360° depth estimation in indoor settings. Our model generates a spherical depth map from a single gravity-aligned or gravity-rectified equirectangular image, ensuring the predicted depth aligns with the typical depth distribution and structural features of cluttered indoor spaces, which are generally enclosed by walls, floors, and ceilings. By leveraging the distinctive vertical and horizontal patterns found in man-made indoor environments, we propose a streamlined network architecture that incorporates gravity-aligned feature flattening and specialized vision transformers. Through flattening, these transformers fully exploit the omnidirectional nature of the input without requiring patch segmentation or positional encoding. To further enhance structural consistency, we introduce a novel loss function that assesses density map consistency by projecting points from the predicted depth map onto a horizontal plane and a cylindrical proxy. This lightweight architecture requires fewer tunable parameters and computational resources than competing methods. Our comparative evaluation shows that our approach improves depth estimation accuracy while ensuring greater structural consistency compared to existing methods. For these reasons, it promises to be suitable for incorporation in real-time solutions, as well as a building block in more complex structural analysis and segmentation methods.
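The density map idea mentioned in the abstract can be illustrated with a small sketch. This is not the authors' loss function: it only shows one plausible way to back-project an equirectangular depth map to 3D points, count them on a horizontal grid, and compare two such density maps. The function names, the grid extent, the bin count, and the L1 comparison are all assumptions made for illustration, and the cylindrical proxy is not covered.

```python
import math

def depth_to_points(depth):
    """Back-project an equirectangular depth map (rows x cols of radial
    distances) to 3D points. Assumes a gravity-aligned image with z up."""
    rows, cols = len(depth), len(depth[0])
    points = []
    for v in range(rows):
        # Latitude of the pixel centre: near +pi/2 at the top row.
        lat = math.pi / 2 - (v + 0.5) / rows * math.pi
        for u in range(cols):
            # Longitude of the pixel centre in [-pi, pi).
            lon = (u + 0.5) / cols * 2 * math.pi - math.pi
            r = depth[v][u]
            points.append((r * math.cos(lat) * math.cos(lon),
                           r * math.cos(lat) * math.sin(lon),
                           r * math.sin(lat)))
    return points

def horizontal_density(points, extent, bins):
    """Normalised point counts on a bins x bins grid covering
    [-extent, extent] x [-extent, extent] of the horizontal plane."""
    grid = [[0.0] * bins for _ in range(bins)]
    cell = 2 * extent / bins
    for x, y, _ in points:
        i = int((x + extent) // cell)
        j = int((y + extent) // cell)
        if 0 <= i < bins and 0 <= j < bins:
            grid[i][j] += 1.0
    total = float(len(points))
    return [[count / total for count in row] for row in grid]

def density_l1(pred_depth, gt_depth, extent=5.0, bins=8):
    """Mean absolute difference between the two horizontal density maps
    (a hypothetical consistency measure, not the paper's definition)."""
    pred = horizontal_density(depth_to_points(pred_depth), extent, bins)
    gt = horizontal_density(depth_to_points(gt_depth), extent, bins)
    diff = sum(abs(p - g) for pr, gr in zip(pred, gt) for p, g in zip(pr, gr))
    return diff / (bins * bins)

# Usage: two constant-depth "rooms" of different size.
near = [[1.0] * 8 for _ in range(4)]
far = [[3.0] * 8 for _ in range(4)]
print(density_l1(near, near))  # identical maps give zero difference
print(density_l1(near, far) > 0)
```

Hard counting as used here has no useful gradient, so a loss intended for training would need a differentiable (soft) binning instead; the sketch is only meant to make the projection step concrete.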

Source journal

Graphical Models

Engineering and Technology - Computer Science: Software Engineering

CiteScore

3.60

Self-citation rate

5.90%

Articles published

15

Review time

47 days

About the journal:

Graphical Models is recognized internationally as a highly rated, top tier journal and is focused on the creation, geometric processing, animation, and visualization of graphical models and on their applications in engineering, science, culture, and entertainment. GMOD provides its readers with thoroughly reviewed and carefully selected papers that disseminate exciting innovations, that teach rigorous theoretical foundations, that propose robust and efficient solutions, or that describe ambitious systems or applications in a variety of topics.

We invite papers in five categories: research (contributions of novel theoretical or practical approaches or solutions), survey (opinionated views of the state-of-the-art and challenges in a specific topic), system (the architecture and implementation details of an innovative architecture for a complete system that supports model/animation design, acquisition, analysis, visualization…), application (description of a novel application of known techniques and evaluation of its impact), or lecture (an elegant and inspiring perspective on previously published results that clarifies them and teaches them in a new way).

GMOD offers its authors an accelerated review, feedback from experts in the field, immediate online publication of accepted papers, no restriction on color and length (when justified by the content) in the online version, and a broad promotion of published papers. A prestigious group of editors selected from among the premier international researchers in their fields oversees the review process.
