Cautious classifier ensembles for set-valued decision-making

IF 3.2

Tier 3: Computer Science

Q2 COMPUTER SCIENCE, ARTIFICIAL INTELLIGENCE

Citations: 0

Abstract

目前,分类器在许多领域都表现出令人印象深刻的性能。然而,在某些应用中,错误判定的代价很高,集值预测可能比经典的简明判定更可取,因为集值预测的信息量更少,但可靠性更高。谨慎分类器的目标就是生成这种不精确的预测,从而降低做出错误决策的风险。在本文中,我们介绍了两种植根于集合学习范式的谨慎分类方法,它们都是由概率区间组合而成。这些区间在信念函数的框架内聚集,使用两种建议的策略,可视为经典平均法和投票法的一般化。我们的策略旨在最大化较低的预期贴现效用,从而在模型准确性和确定性之间取得良好的折衷。我们使用不精确的决策树来说明所建议程序的效率和性能,从而产生了随机森林分类器的谨慎变体。利用 15 个数据集说明了这些变体的性能和特性。本文章由计算机程序翻译,如有差异,请以英文原文为准。

Cautious classifier ensembles for set-valued decision-making

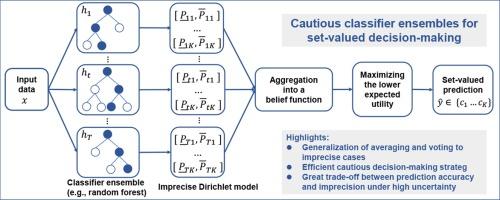

Classifiers now achieve impressive performance in many domains. However, in applications where an erroneous decision is costly, set-valued predictions may be preferable to classical crisp decisions: they are less informative but more reliable. Cautious classifiers aim to produce such imprecise predictions so as to reduce the risk of making wrong decisions. In this paper, we describe two cautious classification approaches rooted in the ensemble learning paradigm, both of which combine probability intervals. These intervals are aggregated within the framework of belief functions, using two proposed strategies that can be regarded as generalizations of classical averaging and voting. Our strategies aim to maximize the lower expected discounted utility, so as to achieve a good compromise between model accuracy and determinacy. The efficiency and performance of the proposed procedure are illustrated using imprecise decision trees, giving rise to cautious variants of the random forest classifier. The performance and properties of these variants are illustrated using 15 datasets.
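To make the idea of a set-valued prediction built from probability intervals concrete, here is a minimal Python sketch. It is not the method of the paper: the abstract describes aggregation within the belief function framework and a decision rule based on lower expected discounted utility, neither of which is reproduced here. As a simpler stand-in, the sketch averages interval bounds class by class across ensemble members and then keeps every class that is not interval-dominated. The function names and the toy intervals are assumptions made purely for illustration.

```python
from typing import List, Set, Tuple

# A probability interval (lower bound, upper bound) for one class.
Interval = Tuple[float, float]


def average_intervals(members: List[List[Interval]]) -> List[Interval]:
    """Average lower and upper bounds class by class across ensemble members.

    Illustrative stand-in only; the paper aggregates with belief functions.
    """
    n_members = len(members)
    n_classes = len(members[0])
    return [
        (
            sum(m[k][0] for m in members) / n_members,
            sum(m[k][1] for m in members) / n_members,
        )
        for k in range(n_classes)
    ]


def interval_dominance(intervals: List[Interval]) -> Set[int]:
    """Return the classes that are not interval-dominated.

    Class k is dropped when some other class has a lower bound that
    exceeds the upper bound of k; all remaining classes are kept.
    """
    max_lower = max(lower for lower, _ in intervals)
    return {k for k, (_, upper) in enumerate(intervals) if upper >= max_lower}


# Hypothetical output of three imprecise trees on one instance (3 classes).
trees = [
    [(0.5, 0.7), (0.2, 0.4), (0.0, 0.2)],
    [(0.3, 0.6), (0.3, 0.6), (0.0, 0.1)],
    [(0.4, 0.8), (0.1, 0.5), (0.0, 0.3)],
]

combined = average_intervals(trees)
prediction = interval_dominance(combined)
print(sorted(prediction))  # [0, 1]
```

In this toy case the ensemble cannot separate classes 0 and 1, so it returns both rather than committing to one, while class 2 is ruled out. This is the trade-off the abstract refers to: the prediction is less informative than a single label but less likely to be wrong.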

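Source journal

International Journal of Approximate Reasoning

Category: Engineering & Technology - Computer Science: Artificial Intelligence

CiteScore: 6.90

Self-citation rate: 12.80%

Articles published: 170

Review time: 67 days

About the journal: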

The International Journal of Approximate Reasoning is intended to serve as a forum for the treatment of imprecision and uncertainty in Artificial and Computational Intelligence, covering both the foundations of uncertainty theories, and the design of intelligent systems for scientific and engineering applications. It publishes high-quality research papers describing theoretical developments or innovative applications, as well as review articles on topics of general interest.

Relevant topics include, but are not limited to, probabilistic reasoning and Bayesian networks, imprecise probabilities, random sets, belief functions (Dempster-Shafer theory), possibility theory, fuzzy sets, rough sets, decision theory, non-additive measures and integrals, qualitative reasoning about uncertainty, comparative probability orderings, game-theoretic probability, default reasoning, nonstandard logics, argumentation systems, inconsistency-tolerant reasoning, elicitation techniques, philosophical foundations and psychological models of uncertain reasoning.

Domains of application for uncertain reasoning systems include risk analysis and assessment, information retrieval and database design, information fusion, machine learning, data and web mining, computer vision, image and signal processing, intelligent data analysis, statistics, multi-agent systems, etc.
