利用参考图像进行无文本扩散涂色,增强视觉保真度

IF 3.9

3区 计算机科学

Q2 COMPUTER SCIENCE, ARTIFICIAL INTELLIGENCE

引用次数: 0

摘要

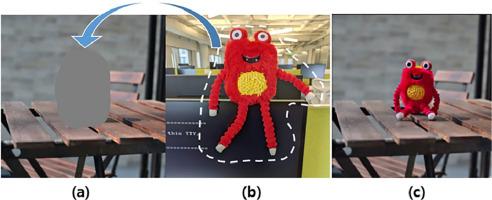

本文提出了一种主体驱动图像生成的新方法,解决了传统文本到图像扩散模型的局限性。我们的方法使用参考图像生成图像,而不依赖基于语言的提示。我们引入了一个视觉细节保护模块,该模块可捕捉复杂的细节和纹理,解决与有限的训练样本相关的过拟合问题。通过改进的无分类器引导技术和特征串联技术,该模型的性能得到了进一步提升,从而实现了不同场景中主体的自然定位和协调。使用 CLIP、DINO 和质量分数(QS)进行的定量评估以及一项用户研究表明,我们生成的图像质量上乘。我们的工作凸显了预训练模型和视觉补丁嵌入在主体驱动编辑中的潜力,在图像生成任务中平衡了多样性和保真度。我们的实施方案可在 https://github.com/8eomio/Subject-Inpainting 上查阅。本文章由计算机程序翻译,如有差异,请以英文原文为准。

Text-free diffusion inpainting using reference images for enhanced visual fidelity

This paper presents a novel approach to subject-driven image generation that addresses the limitations of traditional text-to-image diffusion models. Our method generates images using reference images without relying on language-based prompts. We introduce a visual detail preserving module that captures intricate details and textures, addressing overfitting issues associated with limited training samples. The model's performance is further enhanced through a modified classifier-free guidance technique and feature concatenation, enabling the natural positioning and harmonization of subjects within diverse scenes. Quantitative assessments using CLIP, DINO and Quality scores (QS), along with a user study, demonstrate the superior quality of our generated images. Our work highlights the potential of pre-trained models and visual patch embeddings in subject-driven editing, balancing diversity and fidelity in image generation tasks. Our implementation is available at https://github.com/8eomio/Subject-Inpainting.

求助全文

通过发布文献求助,成功后即可免费获取论文全文。

去求助

来源期刊

Pattern Recognition Letters

工程技术-计算机:人工智能

CiteScore

12.40

自引率

5.90%

发文量

287

审稿时长

9.1 months

期刊介绍:

Pattern Recognition Letters aims at rapid publication of concise articles of a broad interest in pattern recognition.

Subject areas include all the current fields of interest represented by the Technical Committees of the International Association of Pattern Recognition, and other developing themes involving learning and recognition.

求助内容:

求助内容: 应助结果提醒方式:

应助结果提醒方式: