Efficient image generation with Contour Wavelet Diffusion

IF 2.5

CAS Zone 4, Computer Science

Q2 COMPUTER SCIENCE, SOFTWARE ENGINEERING

Citations: 0

Abstract

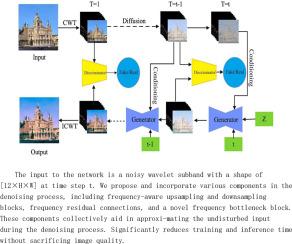

The burgeoning field of image generation has captivated academia and industry with its potential to produce high-quality images, enabling applications such as text-to-image generation, image translation, and image recovery. These advances have notably propelled the growth of the metaverse, where virtual environments built from generated images offer new interactive experiences, especially in conjunction with digital libraries. The technology produces detailed, high-quality images that enable immersive experiences. Although diffusion models show promise, with better image quality and mode coverage than GANs, their slow training and inference have hindered broader adoption. To address this, we introduce the Contour Wavelet Diffusion Model, which accelerates both training and inference by decomposing features and applying multi-directional, anisotropic analysis. The model integrates an attention mechanism that focuses on high-frequency details and a reconstruction loss function that enforces image consistency and accelerates convergence. The result is a significant reduction in training and inference times without sacrificing image quality, making diffusion models viable for large-scale applications and enhancing their practicality in the evolving digital landscape.
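The abstract does not specify the transform or the loss. As a minimal sketch of the general idea behind wavelet-based diffusion (an assumption for illustration, not the authors' code), the example below uses a single-level 2D Haar transform. It splits an image into four half-resolution subbands, so a denoiser operating on subbands sees arrays with a quarter of the spatial positions. The exact inverse then lets an L1 reconstruction loss compare the rebuilt image with the input. A contourlet transform, which the model's name presumably refers to, replaces this separable split with a Laplacian pyramid followed by directional filter banks to obtain multi-directional, anisotropic analysis; that stage is not shown here. The names `haar_dwt2`, `haar_idwt2` and `l1_reconstruction_loss` are hypothetical.

```python
from typing import List, Tuple

# Hypothetical helper types: a grayscale image as row-major lists, H x W.
Image = List[List[float]]


def haar_dwt2(img: Image) -> Tuple[Image, Image, Image, Image]:
    """Single-level orthonormal 2D Haar transform (illustrative only).

    Returns four (H/2) x (W/2) subbands: LL is the low-pass approximation,
    LH, HL and HH hold high-frequency detail coefficients.
    """
    h, w = len(img), len(img[0])
    if h % 2 or w % 2:
        raise ValueError("height and width must be even")
    ll, lh, hl, hh = [], [], [], []
    for i in range(0, h, 2):
        r_ll, r_lh, r_hl, r_hh = [], [], [], []
        for j in range(0, w, 2):
            a, b = img[i][j], img[i][j + 1]          # top-left, top-right
            c, d = img[i + 1][j], img[i + 1][j + 1]  # bottom-left, bottom-right
            r_ll.append((a + b + c + d) / 2)  # local average (scaled)
            r_lh.append((a - b + c - d) / 2)  # left minus right: vertical edges
            r_hl.append((a + b - c - d) / 2)  # top minus bottom: horizontal edges
            r_hh.append((a - b - c + d) / 2)  # diagonal detail
        ll.append(r_ll)
        lh.append(r_lh)
        hl.append(r_hl)
        hh.append(r_hh)
    return ll, lh, hl, hh


def haar_idwt2(ll: Image, lh: Image, hl: Image, hh: Image) -> Image:
    """Exact inverse of haar_dwt2 (the 4x4 transform is orthonormal)."""
    h2, w2 = len(ll), len(ll[0])
    out = [[0.0] * (2 * w2) for _ in range(2 * h2)]
    for i in range(h2):
        for j in range(w2):
            s, x, y, z = ll[i][j], lh[i][j], hl[i][j], hh[i][j]
            out[2 * i][2 * j] = (s + x + y + z) / 2
            out[2 * i][2 * j + 1] = (s - x + y - z) / 2
            out[2 * i + 1][2 * j] = (s + x - y - z) / 2
            out[2 * i + 1][2 * j + 1] = (s - x - y + z) / 2
    return out


def l1_reconstruction_loss(x: Image, y: Image) -> float:
    """Mean absolute error between two images of equal size."""
    n = len(x) * len(x[0])
    return sum(abs(p - q) for rx, ry in zip(x, y) for p, q in zip(rx, ry)) / n


if __name__ == "__main__":
    img = [[1.0, 2.0, 3.0, 4.0],
           [5.0, 6.0, 7.0, 8.0],
           [9.0, 10.0, 11.0, 12.0],
           [13.0, 14.0, 15.0, 16.0]]
    bands = haar_dwt2(img)
    print(bands[0])  # [[7.0, 11.0], [23.0, 27.0]]
    print(l1_reconstruction_loss(img, haar_idwt2(*bands)))  # 0.0
```

On a 4×4 ramp the diagonal band is all zeros, because a linear ramp has no diagonal detail, and the inverse reproduces the input exactly. In a real model the loss would compare a generated image with its target rather than with itself.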

Source journal

Computers & Graphics-Uk

Engineering & Technology - Computer Science: Software Engineering

CiteScore: 5.30

Self-citation rate: 12.00%

Articles published: 173

Review time: 38 days

About the journal:

Computers & Graphics is dedicated to disseminating information on research and applications of computer graphics (CG) techniques. The journal encourages articles on:

1. Research and applications of interactive computer graphics. We are particularly interested in novel interaction techniques and applications of CG to problem domains.

2. State-of-the-art papers on late-breaking, cutting-edge research on CG.

3. Information on innovative uses of graphics principles and technologies.

4. Tutorial papers on both teaching CG principles and innovative uses of CG in education.
