人类与机器人的面部共表情。

IF 26.1

1区 计算机科学

Q1 ROBOTICS

引用次数: 0

摘要

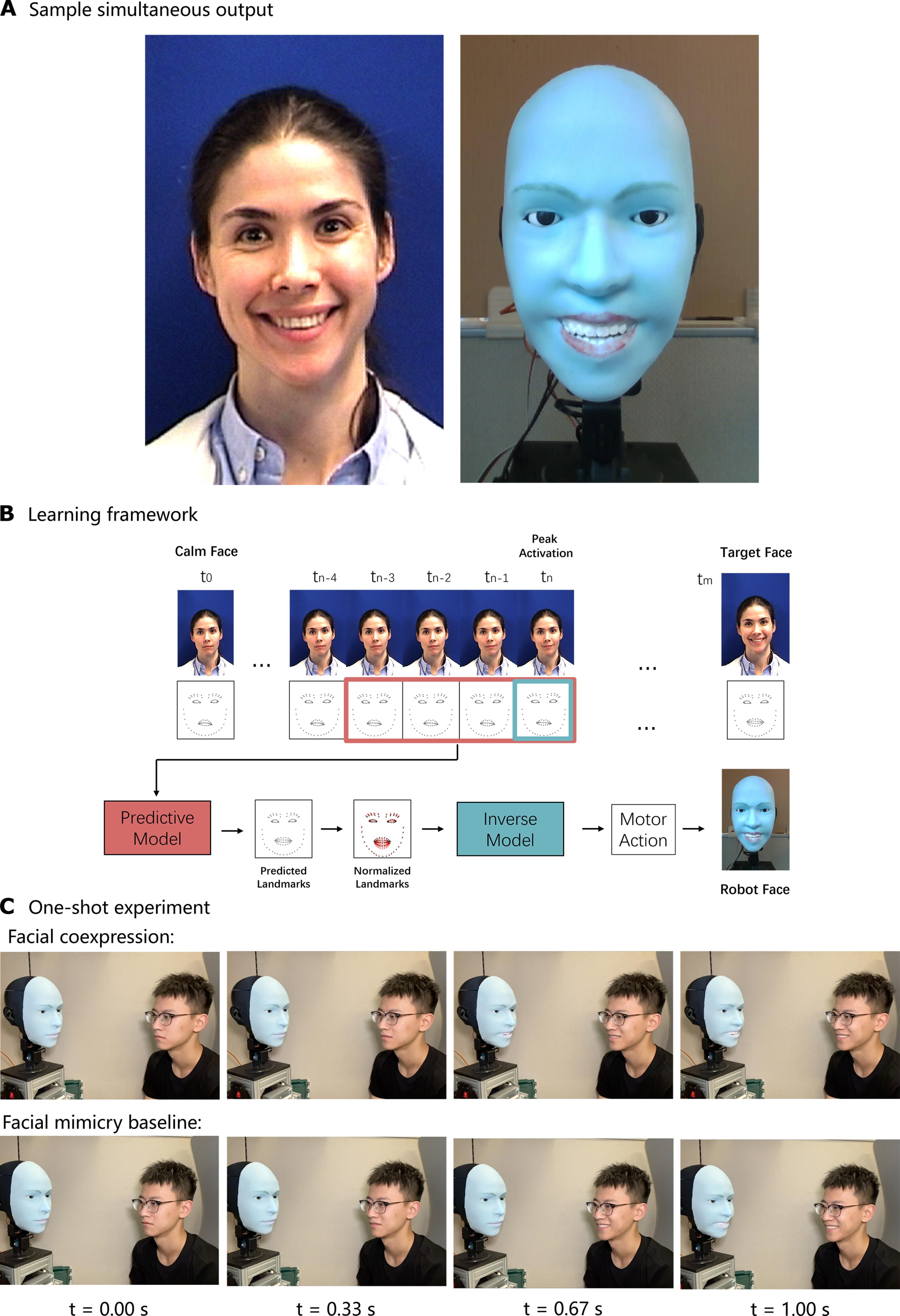

大型语言模型使机器人的语言交流迅速发展,但非语言交流却跟不上步伐。实体仿人机器人很难使用面部动作进行表达和交流,主要依靠语音。挑战是双重的:首先,驱动表情丰富的机器人面部动作在机械方面具有挑战性。第二个挑战是,机器人要想显得自然、及时和真实,就必须知道该做出什么样的表情。在这里,我们提出可以通过训练机器人预测未来的面部表情并与人类同时执行来缓解这两个障碍。延迟的面部模仿看起来很虚伪,而面部共同表情则让人感觉更真实,因为它需要正确推断人类的情绪状态,以便及时执行。我们发现,机器人可以在人类微笑前约 839 毫秒学会预测即将出现的微笑,并利用学习到的逆运动学面部自我模型,与人类同时共同表达微笑。我们使用一个由 26 个自由度组成的机器人面部演示了这一能力。我们相信,同时共同表达面部表情的能力可以改善人与机器人之间的互动。本文章由计算机程序翻译,如有差异,请以英文原文为准。

Human-robot facial coexpression

Large language models are enabling rapid progress in robotic verbal communication, but nonverbal communication is not keeping pace. Physical humanoid robots struggle to express and communicate using facial movement, relying primarily on voice. The challenge is twofold: First, the actuation of an expressively versatile robotic face is mechanically challenging. A second challenge is knowing what expression to generate so that the robot appears natural, timely, and genuine. Here, we propose that both barriers can be alleviated by training a robot to anticipate future facial expressions and execute them simultaneously with a human. Whereas delayed facial mimicry looks disingenuous, facial coexpression feels more genuine because it requires correct inference of the human’s emotional state for timely execution. We found that a robot can learn to predict a forthcoming smile about 839 milliseconds before the human smiles and, using a learned inverse kinematic facial self-model, coexpress the smile simultaneously with the human. We demonstrated this ability using a robot face comprising 26 degrees of freedom. We believe that the ability to coexpress simultaneous facial expressions could improve human-robot interaction.

求助全文

通过发布文献求助,成功后即可免费获取论文全文。

去求助

来源期刊

Science Robotics

Mathematics-Control and Optimization

CiteScore

30.60

自引率

2.80%

发文量

83

期刊介绍:

Science Robotics publishes original, peer-reviewed, science- or engineering-based research articles that advance the field of robotics. The journal also features editor-commissioned Reviews. An international team of academic editors holds Science Robotics articles to the same high-quality standard that is the hallmark of the Science family of journals.

Sub-topics include: actuators, advanced materials, artificial Intelligence, autonomous vehicles, bio-inspired design, exoskeletons, fabrication, field robotics, human-robot interaction, humanoids, industrial robotics, kinematics, machine learning, material science, medical technology, motion planning and control, micro- and nano-robotics, multi-robot control, sensors, service robotics, social and ethical issues, soft robotics, and space, planetary and undersea exploration.

求助内容:

求助内容: 应助结果提醒方式:

应助结果提醒方式: