Feasibility Analysis of Phenotype Quantification from Unstructured Clinical Interactions.

Computational psychiatry (Cambridge, Mass.)

Pub Date : 2022-01-11

eCollection Date: 2022-01-01

DOI:10.5334/cpsy.78

Citations: 0

Abstract

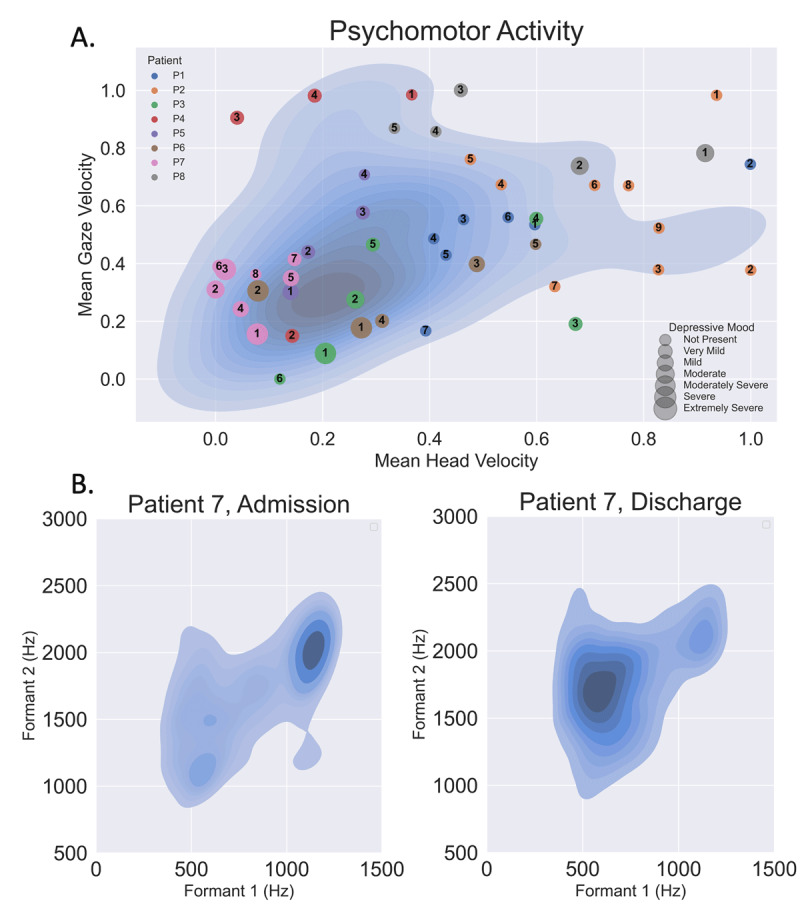

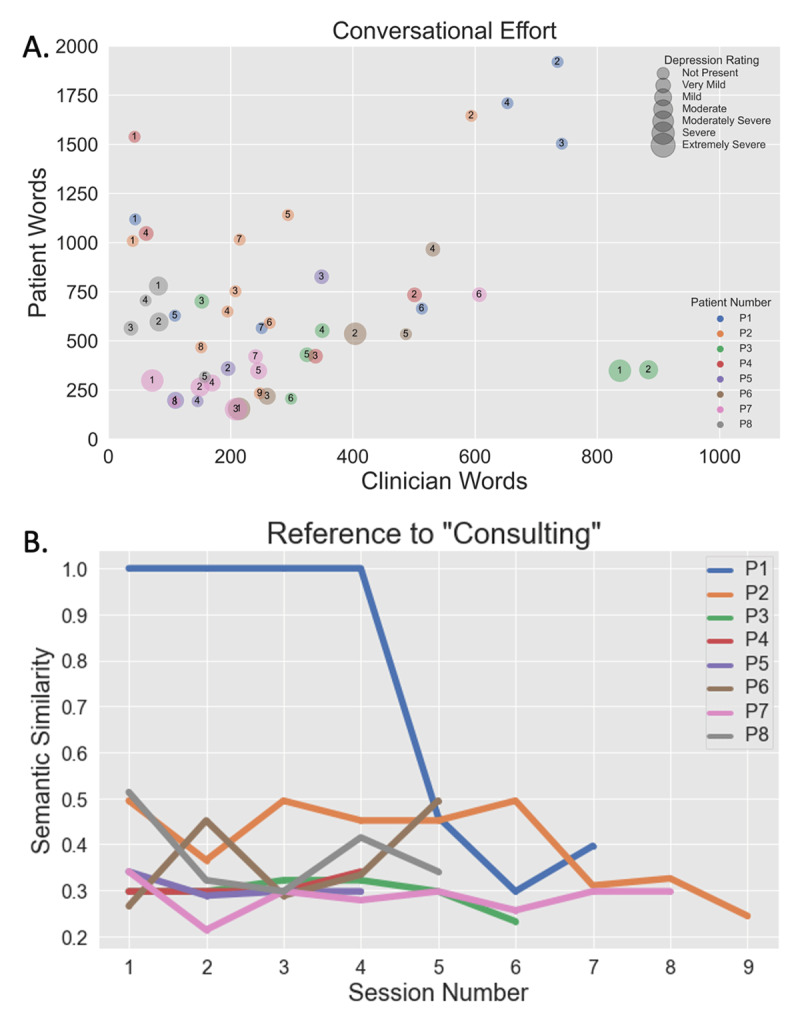

We conducted a feasibility analysis to determine the quality of data that could be collected ambiently during routine clinical conversations. We used inexpensive, consumer-grade hardware to record unstructured dialogue and open-source software tools to quantify and model face, voice (acoustic and language) and movement features. We used an external validation set to perform proof-of-concept predictive analyses and show that clinically relevant measures can be produced without a restrictive protocol.

