Large language models surpass human experts in predicting neuroscience results

Impact factor: 21.4

CAS journal ranking: Tier 1 (Psychology)

JCR: Q1 (Multidisciplinary Sciences)

Citations: 0

Abstract

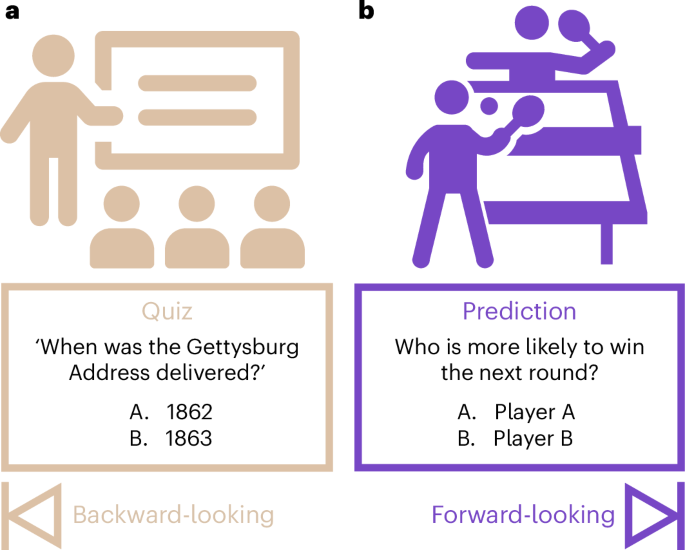

Scientific discoveries often hinge on synthesizing decades of research, a task that potentially outstrips human information processing capacities. Large language models (LLMs) offer a solution. LLMs trained on the vast scientific literature could potentially integrate noisy yet interrelated findings to forecast novel results better than human experts. Here, to evaluate this possibility, we created BrainBench, a forward-looking benchmark for predicting neuroscience results. We find that LLMs surpass experts in predicting experimental outcomes. BrainGPT, an LLM we tuned on the neuroscience literature, performed better yet. Like human experts, when LLMs indicated high confidence in their predictions, their responses were more likely to be correct, which presages a future where LLMs assist humans in making discoveries. Our approach is not neuroscience-specific and is transferable to other knowledge-intensive endeavours.

Large language models (LLMs) can synthesize vast amounts of information. Luo et al. show that LLMs, especially BrainGPT (an LLM the authors tuned on the neuroscience literature), outperform experts in predicting neuroscience results and could assist scientists in making future discoveries.
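The abstract's link between confidence and correctness can be illustrated with a small sketch. This abstract does not describe the scoring procedure, so the sketch makes several hypothetical assumptions. Each benchmark item offers two versions of a study summary. The model picks the version it finds less surprising (lower perplexity). The gap between the two perplexities serves as the model's confidence. The names `Item`, `choose` and `accuracy_by_confidence` are illustrative, and the perplexity values are made up. The check sorts items by confidence, splits them into groups, and asks whether accuracy rises in the higher-confidence groups.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Item:
    """One hypothetical two-choice benchmark item (assumed setup)."""
    ppl_a: float   # model perplexity for version "a" of the summary
    ppl_b: float   # model perplexity for version "b" of the summary
    correct: str   # which version reports the actual result: "a" or "b"


def choose(item: Item) -> Tuple[str, float]:
    """Pick the lower-perplexity version; confidence is the perplexity gap.

    Ties go to "b" (an arbitrary choice for this sketch).
    """
    choice = "a" if item.ppl_a < item.ppl_b else "b"
    confidence = abs(item.ppl_a - item.ppl_b)
    return choice, confidence


def accuracy_by_confidence(items: List[Item], n_bins: int = 2) -> List[float]:
    """Sort items by confidence, split them into n_bins near-equal groups,
    and return accuracy per group, from lowest to highest confidence."""
    if n_bins < 1 or len(items) < n_bins:
        raise ValueError("need at least one item per bin")
    scored = []
    for item in items:
        choice, confidence = choose(item)
        scored.append((confidence, choice == item.correct))
    scored.sort(key=lambda pair: pair[0])
    n = len(scored)
    accuracies = []
    for i in range(n_bins):
        group = scored[i * n // n_bins:(i + 1) * n // n_bins]
        accuracies.append(sum(ok for _, ok in group) / len(group))
    return accuracies


# Made-up perplexities, for illustration only.
items = [
    Item(10.0, 30.0, "a"),
    Item(12.0, 13.0, "b"),
    Item(25.0, 8.0, "b"),
    Item(15.0, 17.0, "a"),
]
print(accuracy_by_confidence(items))  # [0.5, 1.0]
```

In this toy run, the low-confidence half is right half the time and the high-confidence half is always right. That is the qualitative pattern the abstract reports. Real data would need many more items and a finer binning before drawing any conclusion.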

Source journal: Nature Human Behaviour

Subject category: Psychology-Social Psychology

CiteScore: 36.80

Self-citation rate: 1.00%

Articles published: 227

Journal description:

Nature Human Behaviour publishes research of outstanding significance into any aspect of human behavior. This research can cover the psychological, biological and social bases of human behavior, as well as its origins, development and disorders. The journal's primary aim is to increase the visibility of research in the field and enhance its societal reach and impact.
