Explainability-based knowledge distillation

Impact factor: 7.5

Tier 1 (CAS journal ranking), Computer Science

Q1 COMPUTER SCIENCE, ARTIFICIAL INTELLIGENCE

Citations: 0

Abstract

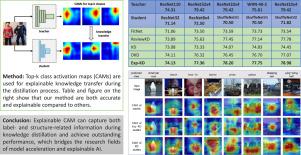

Knowledge distillation (KD) is a popular approach for deep model acceleration. Based on the knowledge distilled, we categorize KD methods as label-related or structure-related. The former distills highly abstract (high-level) knowledge, e.g., logits, while the latter uses spatial knowledge from low- or mid-level features. However, existing KD methods are usually not explainable, i.e., we do not know what knowledge is transferred during distillation. In this work, we propose a new KD method, Explainability-based Knowledge Distillation (Exp-KD). Specifically, we propose using the class activation map (CAM) as explainable knowledge, which can effectively capture both label- and structure-related information during distillation. We conduct extensive experiments, including image classification on the CIFAR-10, CIFAR-100, and ImageNet datasets, and explainability tests on ImageNet and ImageNet-Segmentation. The results demonstrate the effectiveness and explainability of Exp-KD compared with state-of-the-art methods. Code is available at https://github.com/Blenderama/Exp-KD.
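
The two kinds of knowledge described above can be made concrete with a minimal pure-Python sketch. It is not the Exp-KD implementation (that lives in the repository linked above), and every function name in it is hypothetical. `logit_kd_loss` is the standard label-related soft-target loss of Hinton et al. (2015). `class_activation_map` assumes the original CAM formulation of Zhou et al. (2016): the map for class c is the channel-wise sum of the last convolutional feature maps, weighted by the class-c classifier weights that follow global average pooling. `cam_distillation_loss` then matches teacher and student CAMs for the ground-truth class; the min-max normalization and mean-squared-error matching are assumptions made for illustration, and the loss Exp-KD actually uses may differ.

```python
import math
from typing import List

Matrix = List[List[float]]  # one H x W spatial map


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Temperature-scaled softmax, shifted by the max for numerical stability."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def logit_kd_loss(teacher_logits: List[float], student_logits: List[float],
                  temperature: float = 4.0) -> float:
    """Label-related KD (Hinton et al., 2015): T^2 * KL(p_teacher || p_student)."""
    p_t = softmax(teacher_logits, temperature)
    p_s = softmax(student_logits, temperature)
    kl = sum(pt * math.log(pt / ps) for pt, ps in zip(p_t, p_s) if pt > 0)
    return temperature ** 2 * kl


def class_activation_map(features: List[Matrix], fc_weights: List[List[float]],
                         cls: int) -> Matrix:
    """CAM (Zhou et al., 2016): CAM_c(i, j) = sum_k w[c][k] * F_k(i, j).

    features:   K feature maps from the last conv layer, each H x W.
    fc_weights: classifier weights after global average pooling, shape C x K.
    """
    w_c = fc_weights[cls]
    height, width = len(features[0]), len(features[0][0])
    return [[sum(w_c[k] * features[k][i][j] for k in range(len(features)))
             for j in range(width)]
            for i in range(height)]


def min_max_normalize(cam: Matrix) -> Matrix:
    """Rescale a map to [0, 1] so teacher and student maps share a common scale."""
    flat = [v for row in cam for v in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:  # constant map: nothing to localise
        return [[0.0] * len(row) for row in cam]
    return [[(v - lo) / (hi - lo) for v in row] for row in cam]


def cam_distillation_loss(t_features: List[Matrix], t_fc: List[List[float]],
                          s_features: List[Matrix], s_fc: List[List[float]],
                          label: int) -> float:
    """Hypothetical CAM-matching loss: MSE between normalised teacher and student
    CAMs for the ground-truth class. Assumes both maps share the same H x W;
    the normalisation and MSE are illustrative choices, not necessarily Exp-KD's.
    """
    t_cam = min_max_normalize(class_activation_map(t_features, t_fc, label))
    s_cam = min_max_normalize(class_activation_map(s_features, s_fc, label))
    sq_errors = [(a - b) ** 2
                 for t_row, s_row in zip(t_cam, s_cam)
                 for a, b in zip(t_row, s_row)]
    return sum(sq_errors) / len(sq_errors)
```

Because each network's CAM is built from its own features and classifier weights, teacher and student may have different channel counts; in this sketch only their spatial resolution must match. The true-class CAM is class-specific like a logit yet spatial like a feature map, which is one way to read the claim that CAM carries both label- and structure-related information.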

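Source journal: Pattern Recognition

Subject area: Engineering & Technology / Engineering, Electrical & Electronic

CiteScore: 14.40

Self-citation rate: 16.20%

Publication volume: 683

Review time: 5.6 months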

Journal description:

The field of Pattern Recognition is both mature and rapidly evolving, playing a crucial role in various related fields such as computer vision, image processing, text analysis, and neural networks. It closely intersects with machine learning and is being applied in emerging areas like biometrics, bioinformatics, multimedia data analysis, and data science. The journal Pattern Recognition, established half a century ago during the early days of computer science, has since grown significantly in scope and influence.
