Generation of Tailored and Confined Datasets for IDS Evaluation in Cyber-Physical Systems

IF 4.4

CAS Zone 2, Chemistry

Q2 MATERIALS SCIENCE, MULTIDISCIPLINARY

Citations: 0

Abstract

The state-of-the-art evaluation of an Intrusion Detection System (IDS) relies on benchmark datasets composed of the regular system's and potential attackers' behavior. The datasets are collected once and independently of the IDS under analysis. This paper questions this practice by introducing a methodology to elicit particularly challenging samples to benchmark a given IDS. In detail, we propose (1) six fitness functions quantifying the suitability of individual samples, particularly tailored for safety-critical cyber-physical systems, (2) a scenario-based methodology for attacks on networks to systematically deduce optimal samples in addition to previous datasets, and (3) a respective extension of the standard IDS evaluation methodology. We applied our methodology to two network-based IDSs defending an advanced driver assistance system. Our results indicate that different IDSs show strongly differing characteristics in their edge-case classifications and that the original datasets used for evaluation do not include such challenging behavior. In the worst case, this leads to a critical attack going undetected, as we document for one IDS. Our findings highlight the need to tailor benchmark datasets to the individual IDS in a final evaluation step. In particular, manual investigation by domain experts of selected samples from edge-case classifications is vital for assessing the IDSs.
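The abstract does not spell out the six fitness functions. As a purely hypothetical illustration of the general idea (score each sample, then keep the most challenging ones for expert review), the sketch below ranks samples by how close an IDS anomaly score lies to its decision threshold. The `Sample` type, `boundary_fitness`, `is_misclassified`, `select_edge_cases`, the "higher score means more suspicious" convention, and the example data are all assumptions for illustration, not the authors' method.

```python
from dataclasses import dataclass
from typing import Callable, List, Sequence


@dataclass
class Sample:
    """One labeled network sample plus the score a hypothetical IDS assigned to it."""
    sample_id: str
    anomaly_score: float  # assumed convention: higher means more suspicious
    is_attack: bool       # ground-truth label


def is_misclassified(sample: Sample, threshold: float) -> bool:
    """True if the IDS verdict (score >= threshold means alarm) disagrees with the label."""
    return (sample.anomaly_score >= threshold) != sample.is_attack


def boundary_fitness(sample: Sample, threshold: float) -> float:
    """Hypothetical fitness: the closer a score lies to the decision threshold,
    the more of an edge case the sample is, so the higher its fitness."""
    return -abs(sample.anomaly_score - threshold)


def select_edge_cases(
    samples: Sequence[Sample], fitness: Callable[[Sample], float], k: int
) -> List[Sample]:
    """Return the k samples with the highest fitness, e.g. for manual expert review."""
    return sorted(samples, key=fitness, reverse=True)[:k]


if __name__ == "__main__":
    threshold = 0.5
    samples = [
        Sample("benign-1", 0.10, False),
        Sample("attack-1", 0.48, True),   # attack just below the threshold: missed
        Sample("benign-2", 0.55, False),  # benign traffic just above it: false alarm
        Sample("attack-2", 0.95, True),
    ]
    picked = select_edge_cases(samples, lambda s: boundary_fitness(s, threshold), k=2)
    print([(s.sample_id, is_misclassified(s, threshold)) for s in picked])
    # prints [('attack-1', True), ('benign-2', True)]
```

Distance to the threshold is only one conceivable criterion; the paper's own fitness functions are tailored to safety-critical cyber-physical systems and are not reproduced here.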

Source journal

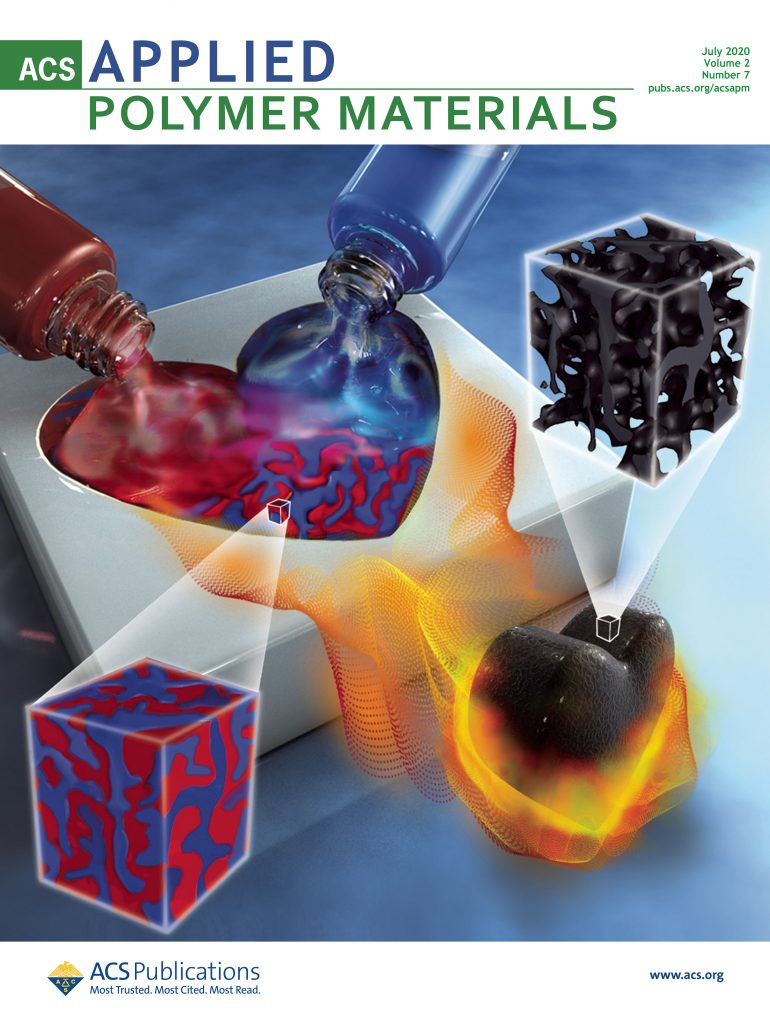

ACS Applied Polymer Materials

CiteScore: 7.20

Self-citation rate: 6.00%

Articles published: 810

Journal description:

ACS Applied Polymer Materials is an interdisciplinary journal publishing original research covering all aspects of engineering, chemistry, physics, and biology relevant to applications of polymers.

The journal is devoted to reports of new and original experimental and theoretical research of an applied nature that integrates fundamental knowledge in the areas of materials, engineering, physics, bioscience, polymer science, and chemistry into important polymer applications. The journal is specifically interested in work that addresses relationships among structure, processing, morphology, chemistry, properties, and function, as well as work that provides insights into mechanisms critical to the performance of the polymer for applications.
