TextCheater: A Query-Efficient Textual Adversarial Attack in the Hard-Label Setting

IF 4.4

Zone 2, Chemistry

Q2 MATERIALS SCIENCE, MULTIDISCIPLINARY

Citations: 0

Abstract

Designing a query-efficient attack strategy to generate high-quality adversarial examples under the hard-label black-box setting is a fundamental yet challenging problem, especially in natural language processing (NLP). When confidence scores cannot be accessed, the search for adversarial examples involves many uncertainties (e.g., the unknown impact of an added perturbation on the target model's prediction), which must be compensated for with a large number of queries. To address this issue, we propose TextCheater, a decision-based metaheuristic search method that performs query-efficient textual adversarial attacks by prohibiting invalid searches. The strategies of multiple initialization points and Tabu search are also introduced to keep the search process from falling into a local optimum. We apply our approach to three state-of-the-art language models (i.e., BERT, wordLSTM, and wordCNN) across six benchmark datasets and eight real-world commercial sentiment analysis platforms/models. Furthermore, we evaluate the Robustly optimized BERT pretraining Approach (RoBERTa) and models that enhance their robustness by adversarial training on toxicity detection and text classification tasks. The results demonstrate that our method minimizes the number of queries required for crafting plausible adversarial text while outperforming existing attack methods in the attack success rate, fluency of output sentences, and similarity between the original text and its adversary.


Source journal

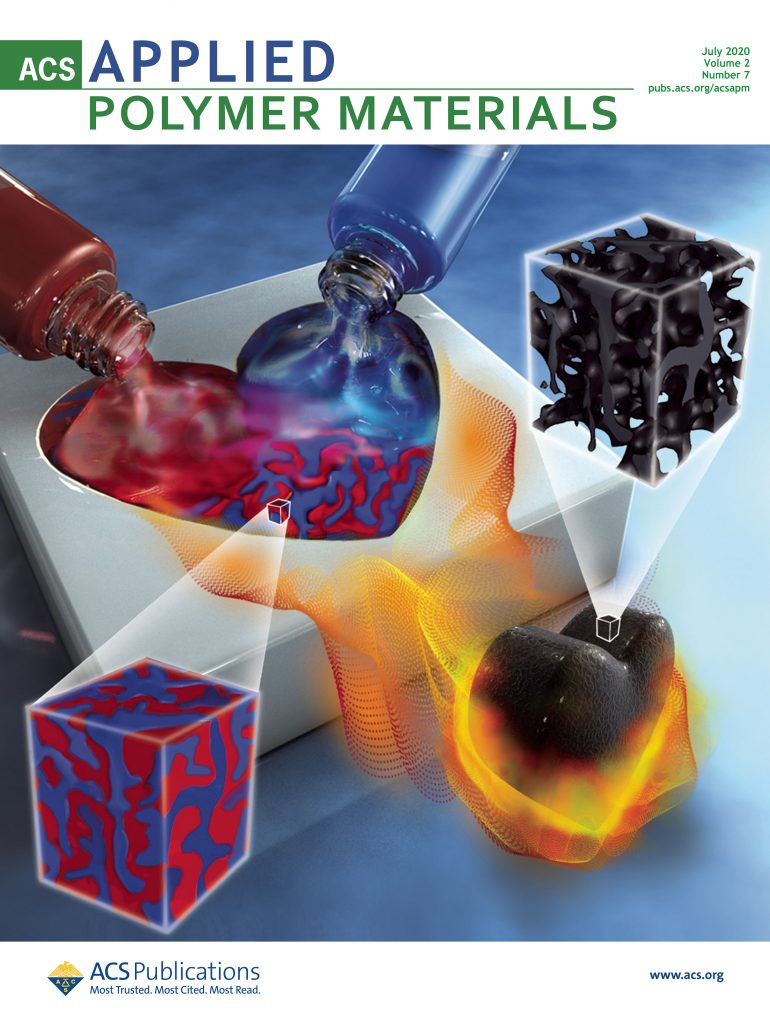

ACS Applied Polymer Materials

Multiple-

CiteScore

7.20

Self-citation rate

6.00%

Articles published

810

Journal description:

ACS Applied Polymer Materials is an interdisciplinary journal publishing original research covering all aspects of engineering, chemistry, physics, and biology relevant to applications of polymers.

The journal is devoted to reports of new and original experimental and theoretical research of an applied nature that integrates fundamental knowledge in the areas of materials, engineering, physics, bioscience, polymer science and chemistry into important polymer applications. The journal is specifically interested in work that addresses relationships among structure, processing, morphology, chemistry, properties, and function, as well as work that provides insights into mechanisms critical to the performance of the polymer for applications.
