Extended dissipative criteria for delayed semi-discretized competitive neural networks

IF 2.8

Zone 4, Computer Science

Q3 COMPUTER SCIENCE, ARTIFICIAL INTELLIGENCE

Citations: 0

Abstract

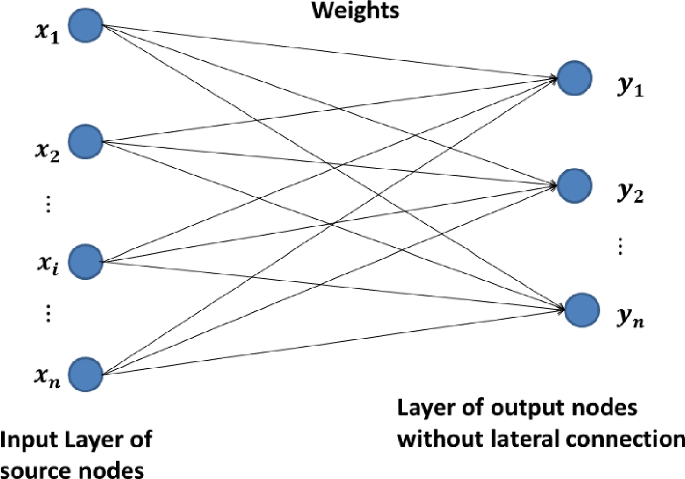

This brief investigates the extended dissipativity of semi-discretized competitive neural networks (CNNs) with time-varying delays. Motivated by the computational efficiency and feasibility of implementing such networks, we formulate a discrete-time counterpart of the continuous-time CNNs. By employing an appropriate Lyapunov–Krasovskii functional (LKF) and a relaxed summation inequality, sufficient conditions ensuring the extended dissipativity of the discretized CNNs are obtained in the form of linear matrix inequalities. Finally, two numerical examples are provided to demonstrate the validity and merits of the theoretical results.
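The abstract does not state the network equations, so the following is only a minimal sketch of the general semi-discretization idea, not the authors' model. It assumes a hypothetical scalar delayed system x'(t) = -a x(t) + b f(x(t)) + c f(x(t - tau)) with f = tanh and, for simplicity, a constant delay rather than a time-varying one. The nonlinear terms are frozen on each interval [nh, (n+1)h) and the remaining linear equation is integrated exactly, which gives an explicit update rule. All names and parameter values are illustrative assumptions.

```python
import math


def semi_discretize_step(x, x_delayed, a, b, c, h, f=math.tanh):
    """One step of the semi-discretized scalar model (illustrative assumption).

    Continuous model: x'(t) = -a*x(t) + b*f(x(t)) + c*f(x(t - tau)), with a > 0.
    The nonlinear terms are held constant over one step of length h, and the
    linear part is integrated exactly over that step.
    """
    decay = math.exp(-a * h)        # exact decay of the linear part over h
    gain = (1.0 - decay) / a        # integral of exp(-a*s) for s in [0, h]
    return decay * x + gain * (b * f(x) + c * f(x_delayed))


def simulate(a=1.0, b=0.5, c=-0.3, h=0.1, delay_steps=5, n_steps=200, x0=0.8):
    """Iterate the discrete model with a constant delay of delay_steps steps."""
    # Constant initial history on the delay window.
    history = [x0] * (delay_steps + 1)
    for _ in range(n_steps):
        x_now = history[-1]
        x_del = history[-1 - delay_steps]
        history.append(semi_discretize_step(x_now, x_del, a, b, c, h))
    return history


if __name__ == "__main__":
    trajectory = simulate()
    print(f"initial state: {trajectory[0]:.4f}, final state: {trajectory[-1]:.2e}")
```

With b = c = 0 the update reduces to x(n+1) = exp(-a h) x(n), which matches the exact solution of the linear part at the sampling instants for any step size h. This is one common reason for preferring semi-discretization over a forward Euler scheme.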


Source journal

Neural Processing Letters

Engineering & Technology - Computer Science: Artificial Intelligence

CiteScore

4.90

Self-citation rate

12.90%

Articles published

392

Review time

2.8 months

About the journal:

Neural Processing Letters is an international journal publishing research results and innovative ideas on all aspects of artificial neural networks. Coverage includes theoretical developments, biological models, new formal modes, learning, applications, software and hardware developments, and prospective researches.

The journal promotes fast exchange of information in the community of neural network researchers and users. The resurgence of interest in the field of artificial neural networks since the beginning of the 1980s is coupled to tremendous research activity in specialized or multidisciplinary groups. Research, however, is not possible without good communication between people and the exchange of information, especially in a field covering such different areas; fast communication is also a key aspect, and this is the reason for Neural Processing Letters.
