A Multimodal Dataset for Robot Learning to Imitate Social Human-Human Interaction

Impact factor (IF): 5.5

Q2 ROBOTICS

Citations: 2

Abstract


A Multimodal Dataset for Robot Learning to Imitate Social Human-Human Interaction

Humans tend to use various nonverbal signals to communicate their messages to their interaction partners. Previous studies utilised this channel as an essential clue to develop automatic approaches for understanding, modelling and synthesizing individual behaviours in human-human interaction and human-robot interaction settings. On the other hand, in small-group interactions, an essential aspect of communication is the dynamic exchange of social signals among interlocutors. This paper introduces LISI-HHI - Learning to Imitate Social Human-Human Interaction, a dataset of dyadic human interactions recorded in a wide range of communication scenarios. The dataset contains multiple modalities simultaneously captured by high-accuracy sensors, including motion capture, RGB-D cameras, eye trackers, and microphones. LISI-HHI is designed to be a benchmark for HRI and multimodal learning research for modelling intra- and interpersonal nonverbal signals in social interaction contexts and investigating how to transfer such models to social robots.

求助全文

通过发布文献求助,成功后即可免费获取论文全文。

去求助

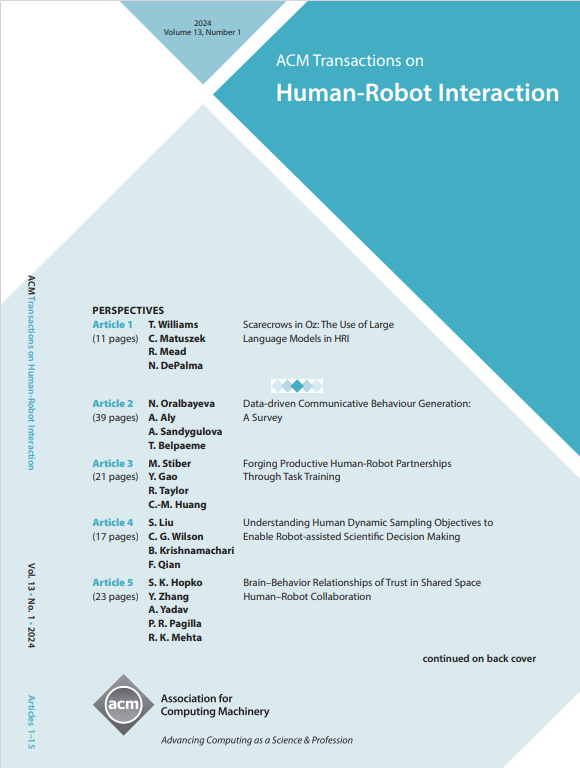

Source journal

ACM Transactions on Human-Robot Interaction

Computer Science-Artificial Intelligence

CiteScore

7.70

Self-citation rate

5.90%

Articles published

65

About the journal:

ACM Transactions on Human-Robot Interaction (THRI) is a prestigious Gold Open Access journal that aspires to lead the field of human-robot interaction as a top-tier, peer-reviewed, interdisciplinary publication. The journal prioritizes articles that significantly contribute to the current state of the art, enhance overall knowledge, have a broad appeal, and are accessible to a diverse audience. Submissions are expected to meet a high scholarly standard, and authors are encouraged to ensure their research is well-presented, advancing the understanding of human-robot interaction, adding cutting-edge or general insights to the field, or challenging current perspectives in this research domain.

THRI warmly invites well-crafted paper submissions from a variety of disciplines, encompassing robotics, computer science, engineering, design, and the behavioral and social sciences. The scholarly articles published in THRI may cover a range of topics such as the nature of human interactions with robots and robotic technologies, methods to enhance or enable novel forms of interaction, and the societal or organizational impacts of these interactions. The editorial team is also keen on receiving proposals for special issues that focus on specific technical challenges or that apply human-robot interaction research to further areas like social computing, consumer behavior, health, and education.
