Approximate leave-one-out error estimation for learning with smooth, strictly convex margin loss functions

Citations: 1

Abstract

Leave-one-out (LOO) error estimation is an important statistical tool for assessing generalization performance. A number of papers have focused on LOO error estimation for support vector machines, but little work has focused on LOO error estimation when learning with smooth, convex margin loss functions. We consider the problem of approximating the LOO error estimate in the context of sparse kernel machine learning. We first motivate a general framework for learning sparse kernel machines that involves minimizing a regularized, smooth, strictly convex margin loss. Then we present an approximation of the LOO error for the family of learning algorithms admissible in the general framework. We examine the implications of the approximation and review preliminary experimental results demonstrating the utility of the approach.
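For orientation, the quantity being approximated can be sketched as a brute-force computation. The example below is not the approximation proposed in the paper, and it does not enforce sparsity; it is a minimal illustration that assumes a Gaussian kernel on scalar inputs, the logistic loss as one example of a smooth, strictly convex margin loss, and a simple kernel-preconditioned gradient descent. All function names (`rbf`, `train`, `predict`, `loo_error`) and parameter values are hypothetical choices made for this sketch.

```python
import math

def rbf(a, b, gamma=1.0):
    """Gaussian kernel on scalars (assumed for illustration); k(x, x) = 1."""
    return math.exp(-gamma * (a - b) ** 2)

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def train(xs, ys, lam=0.1, iters=500):
    """Minimize sum_i log(1 + exp(-y_i f(x_i))) + (lam / 2) * alpha^T K alpha,
    where f(x) = sum_j alpha_j k(x_j, x), by kernel-preconditioned gradient descent."""
    n = len(xs)
    K = [[rbf(a, b) for b in xs] for a in xs]
    alpha = [0.0] * n
    # Safe step size: the logistic loss has curvature <= 1/4 and
    # the largest eigenvalue of K is <= n because k(x, x) <= 1.
    step = 1.0 / (0.25 * n + lam)
    for _ in range(iters):
        f = [sum(K[i][j] * alpha[j] for j in range(n)) for i in range(n)]
        # Derivative of the logistic loss with respect to f(x_i).
        g = [-ys[i] * sigmoid(-ys[i] * f[i]) for i in range(n)]
        alpha = [alpha[i] - step * (g[i] + lam * alpha[i]) for i in range(n)]
    return alpha

def predict(xs, alpha, x):
    """Evaluate f(x) for the kernel expansion defined by (xs, alpha)."""
    return sum(a * rbf(xj, x) for a, xj in zip(alpha, xs))

def loo_error(xs, ys, lam=0.1):
    """Exact LOO error: retrain n times, each time testing on the held-out point."""
    errors = 0
    for i in range(len(xs)):
        xs_i = xs[:i] + xs[i + 1:]
        ys_i = ys[:i] + ys[i + 1:]
        alpha = train(xs_i, ys_i, lam)
        if ys[i] * predict(xs_i, alpha, xs[i]) <= 0:
            errors += 1
    return errors / len(xs)

if __name__ == "__main__":
    xs = [-3.0, -2.5, -2.0, -1.5, 1.5, 2.0, 2.5, 3.0]
    ys = [-1, -1, -1, -1, 1, 1, 1, 1]
    print(loo_error(xs, ys))
```

The brute-force estimate retrains the model once per training example, which is the cost that motivates an approximation computed from a single training run.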


Source journal

Self-citation rate: 0.00%

Publications: 5812

Journal description:

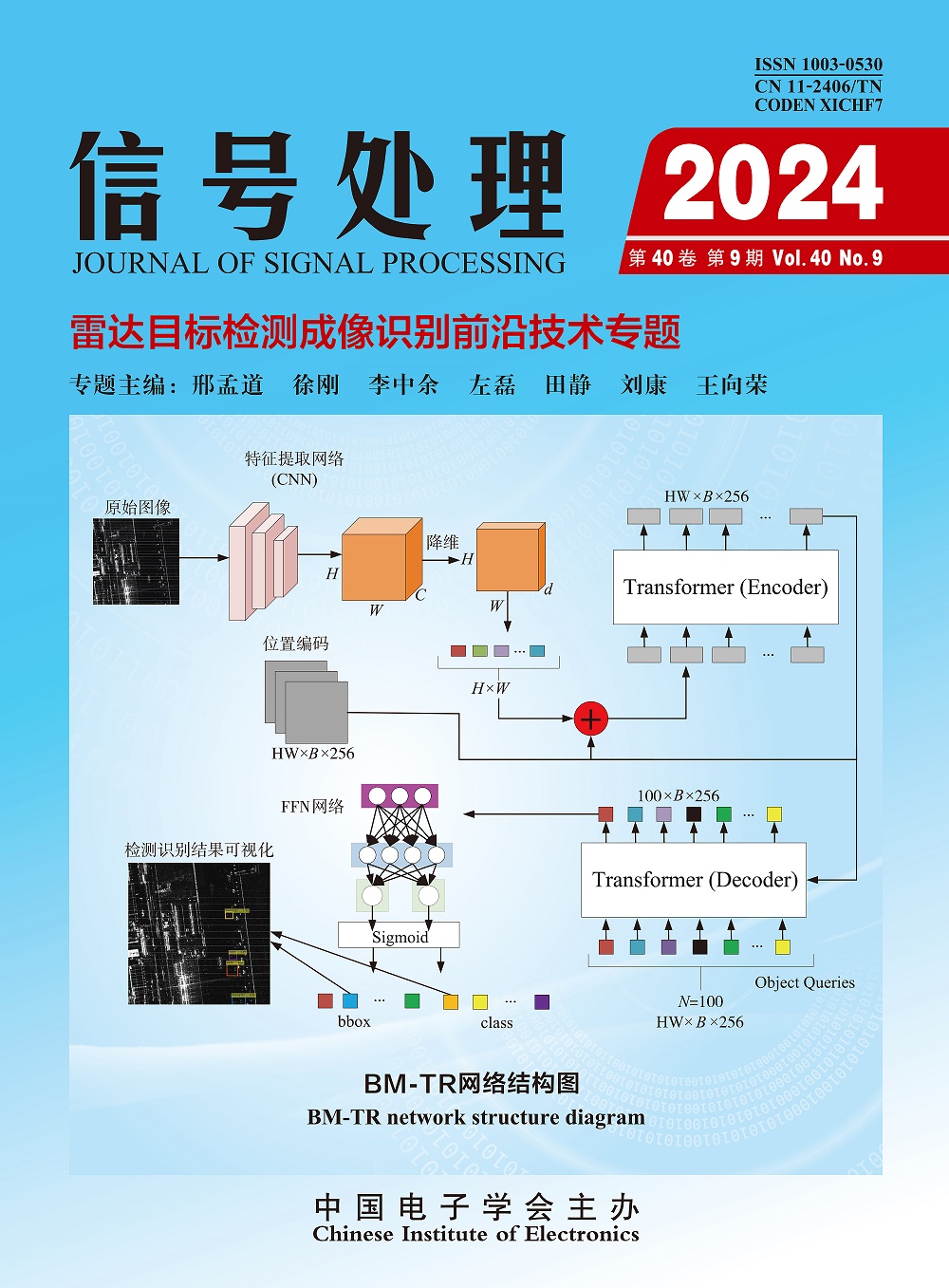

Journal of Signal Processing is an academic journal supervised by the China Association for Science and Technology and sponsored by the China Institute of Electronics. It reports the latest research results and technological progress in signal processing and related disciplines, publishing academic papers and review articles on new theories, ideas, and technologies in the field. The journal aims to provide a platform for academic exchange among researchers and engineering and technical personnel engaged in basic and applied research in signal processing, thereby promoting the development of information science and technology. The journal is currently included in the three major domestic core journal databases, "China Science Citation Database (CSCD), China Science and Technology Core Journals (CSTPCD), Chinese Core Journals Overview", as well as Coaj. It is also included in many foreign databases such as Scopus, CSA, EBSCOhost, INSPEC, and JST.
