A taxonomy and review of generalization research in NLP

Impact factor: 23.9

Tier 1: Computer Science

Q1 COMPUTER SCIENCE, ARTIFICIAL INTELLIGENCE

Citations: 0

Abstract

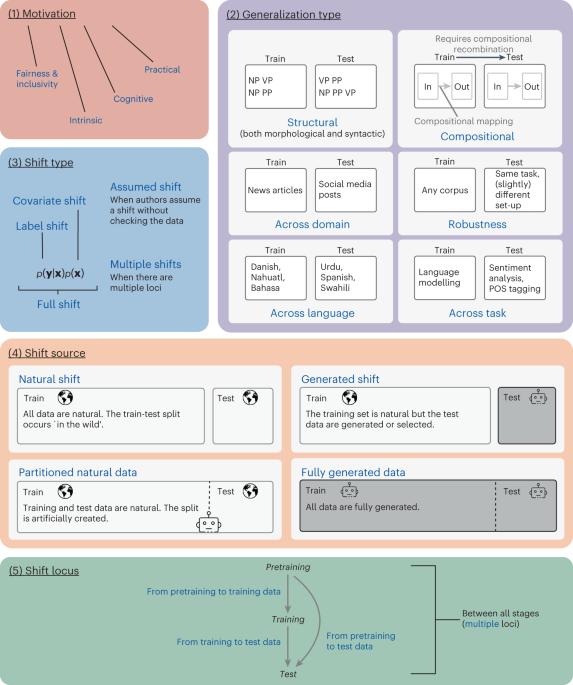


A taxonomy and review of generalization research in NLP

The ability to generalize well is one of the primary desiderata for models of natural language processing (NLP), but what ‘good generalization’ entails and how it should be evaluated is not well understood. In this Analysis we present a taxonomy for characterizing and understanding generalization research in NLP. The proposed taxonomy is based on an extensive literature review and contains five axes along which generalization studies can differ: their main motivation, the type of generalization they aim to solve, the type of data shift they consider, the source by which this data shift originated, and the locus of the shift within the NLP modelling pipeline. We use our taxonomy to classify over 700 experiments, and we use the results to present an in-depth analysis that maps out the current state of generalization research in NLP and make recommendations for which areas deserve attention in the future.

With the rapid development of natural language processing (NLP) models in the last decade came the realization that high performance levels on test sets do not imply that a model robustly generalizes to a wide range of scenarios. Hupkes et al. review generalization approaches in the NLP literature and propose a taxonomy based on five axes to analyse such studies: motivation, type of generalization, type of data shift, the source of this data shift, and the locus of the shift within the modelling pipeline.


Source journal

Nature Machine Intelligence


CiteScore

36.90

Self-citation rate

2.10%

Articles published

127

About the journal:

Nature Machine Intelligence is a distinguished publication that presents original research and reviews on various topics in machine learning, robotics, and AI. Our focus extends beyond these fields, exploring their profound impact on other scientific disciplines, as well as societal and industrial aspects. We recognize limitless possibilities wherein machine intelligence can augment human capabilities and knowledge in domains like scientific exploration, healthcare, medical diagnostics, and the creation of safe and sustainable cities, transportation, and agriculture. Simultaneously, we acknowledge the emergence of ethical, social, and legal concerns due to the rapid pace of advancements.

To foster interdisciplinary discussions on these far-reaching implications, Nature Machine Intelligence serves as a platform for dialogue facilitated through Comments, News Features, News & Views articles, and Correspondence. Our goal is to encourage a comprehensive examination of these subjects.

Similar to all Nature-branded journals, Nature Machine Intelligence operates under the guidance of a team of skilled editors. We adhere to a fair and rigorous peer-review process, ensuring high standards of copy-editing and production, swift publication, and editorial independence.
