C-PFL: A committee-based personalized federated learning framework

Impact factor: 8

Zone 2, Computer Science

Q1 COMPUTER SCIENCE, HARDWARE & ARCHITECTURE

Citations: 0

Abstract

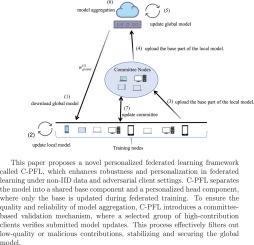

联邦学习(FL)是一种新兴的机器学习范式,它使多方能够在协作保护数据隐私的同时训练共享模型。然而,恶意客户端对FL系统构成了重大威胁。这种干扰不仅会降低模型性能,还会加剧由于数据异构而导致的全局模型的不公平性,从而导致客户机之间的性能不一致。我们提出了C-PFL,一个基于委员会的个性化FL框架,提高了鲁棒性和个性化。与之前的方法,如FedProto(依赖于类原型的交换)、Ditto(在全局和局部模型之间使用正则化)和FedBABU(在联邦训练期间冻结分类器头部)相比,C-PFL引入了两个主要的创新。C-PFL采用分体式设计,在全局训练时只更新共享主干,而局部微调个性化头部。一个由高贡献客户端组成的动态委员会在没有公共数据的情况下验证提交的更新,在聚合之前过滤低质量或对抗性的贡献。在MNIST、时尚-MNIST、CIFAR-10、CIFAR-100和AGNews上进行的实验表明,C-PFL在非对抗性环境下比六个最先进的个性化FL基线高出2.89%,在40%恶意客户端下高出6.96%。这些结果证明了C-PFL能够在不同的非iid场景中保持高精度和稳定性,即使有明显的对抗参与。本文章由计算机程序翻译,如有差异,请以英文原文为准。

C-PFL: A committee-based personalized federated learning framework

Federated Learning (FL) is an emerging machine learning paradigm that enables multiple parties to collaboratively train a shared model while preserving data privacy. However, malicious clients pose a significant threat to FL systems. Their interference not only degrades model performance but also exacerbates the unfairness of the global model caused by data heterogeneity, leading to inconsistent performance across clients. We propose C-PFL, a committee-based personalized FL framework that improves both robustness and personalization. In contrast to prior approaches such as FedProto (which relies on the exchange of class prototypes), Ditto (which employs regularization between global and local models), and FedBABU (which freezes the classifier head during federated training), C-PFL introduces two principal innovations. First, it adopts a split-model design, updating only a shared backbone during global training while fine-tuning a personalized head locally. Second, a dynamic committee of high-contribution clients validates submitted updates without public data, filtering out low-quality or adversarial contributions before aggregation. Experiments on MNIST, Fashion-MNIST, CIFAR-10, CIFAR-100, and AGNews show that C-PFL outperforms six state-of-the-art personalized FL baselines by up to 2.89% in non-adversarial settings and by as much as 6.96% when 40% of clients are malicious. These results demonstrate C-PFL's ability to sustain high accuracy and stability across diverse non-IID scenarios, even with significant adversarial participation.
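
The abstract does not say how the committee scores an update or how accepted backbones are combined, so the following is only a minimal sketch of the general idea, not the paper's algorithm. It assumes that each committee member assigns a numeric score to a submitted backbone (the `score` callable is a hypothetical stand-in for evaluation on the member's own local data), that an update is accepted when the median committee score reaches a threshold, and that accepted backbones are combined by a plain unweighted mean. Personalized heads are never submitted, which mirrors the split-model design described above.

```python
from statistics import median
from typing import Callable, Dict, List

Vector = List[float]


def committee_filter(
    updates: Dict[str, Vector],
    committee: List[str],
    score: Callable[[str, Vector], float],
    threshold: float,
) -> Dict[str, Vector]:
    """Keep updates whose median committee score reaches the threshold.

    `score(member, update)` is a hypothetical stand-in for a committee
    member evaluating a submitted backbone on its own local data.
    """
    accepted = {}
    for client, update in updates.items():
        votes = [score(member, update) for member in committee]
        if median(votes) >= threshold:
            accepted[client] = update
    return accepted


def average_backbones(updates: Dict[str, Vector]) -> Vector:
    """Unweighted element-wise mean of the accepted backbone vectors."""
    if not updates:
        raise ValueError("no updates were accepted")
    vectors = list(updates.values())
    return [sum(column) / len(vectors) for column in zip(*vectors)]


# Toy round: backbones are 2-element vectors; heads never leave the clients.
updates = {
    "a": [1.0, 1.0],
    "b": [3.0, 1.0],
    "c": [100.0, -100.0],  # stands in for an adversarial update
}


def toy_score(member: str, update: Vector) -> float:
    # Assumption: higher is better; here, closeness to a fixed reference point.
    return -max(abs(update[0] - 2.0), abs(update[1] - 1.0))


accepted = committee_filter(updates, ["a", "b"], toy_score, threshold=-5.0)
global_backbone = average_backbones(accepted)
```

In this toy round the outlying update from client `c` is rejected and the global backbone is the mean of the two remaining vectors. A real system would also need a rule for selecting and rotating committee members, which the abstract describes only as "high-contribution clients".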


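Source journal

Journal of Network and Computer Applications

Engineering & Technology - Computer Science: Interdisciplinary Applications

CiteScore

21.50

Self-citation rate

3.40%

Articles published

142

Review time

37 days

Journal description: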

The Journal of Network and Computer Applications welcomes research contributions, surveys, and notes in all areas relating to computer networks and their applications. Sample topics include new design techniques; interesting or novel applications, components, or standards; computer networks with tools such as WWW; emerging standards for internet protocols; wireless networks; mobile computing; emerging computing models such as cloud computing and grid computing; and applications of networked systems for remote collaboration and telemedicine. The journal is abstracted and indexed in Scopus, Engineering Index, Web of Science, Science Citation Index Expanded, and INSPEC.
