利用挤压和激励改进音频嵌入:引入SaEENet

IF 7.6

1区 计算机科学

Q1 COMPUTER SCIENCE, ARTIFICIAL INTELLIGENCE

引用次数: 0

摘要

在本文中,我们提出了一种新的神经网络架构SaEENet,它基于预训练的WavLM-large模型和由mfc提供的一组卷积层生成的嵌入,生成更丰富的嵌入。我们采用一维深度可分离卷积和GRU层,并且,据我们所知,我们首次引入了对音频嵌入加权的挤压和激励(SE)块的使用。SE的使用允许模型为小音频片段生成的每个嵌入分配更高或更低的相关性,从而丢弃从无声片段或具有不相关信息的片段生成的信息。此外,我们评估了SE块的三种不同方法,以确定对所选任务最有用的方法。SaEENet在语言识别、口音识别和说话人识别任务上优于MEWHEV模型,分别提高了0.9%、1.41%和4.01%,使用的可训练参数减少了31.73%。结果表明,单个嵌入对性能有不同的影响,并且在SaEENet中集成加权机制提高了多个语音分类任务的准确性,突出了该方法在未来应用中的价值。本文章由计算机程序翻译,如有差异,请以英文原文为准。

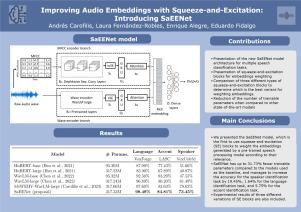

Improving audio embeddings with squeeze-and-excitation: Introducing SaEENet

In this paper, we propose SaEENet, a novel neural network architecture to generate richer embeddings based on those generated by a pre-trained WavLM-large model and a set of convolutional layers fed by MFCCs. We employ 1D depthwise separable convolutions and GRU layers, and, to the best of our knowledge, we introduce for the first time the use of squeeze-and-excitation (SE) blocks for audio embedding weighting. The use of SE allows the model to assign a higher or lower relevance to each embedding generated from small audio segments and thus discard information generated from voiceless segments or segments with non-relevant information. In addition, we evaluated three different approaches for SE blocks to determine the most useful for the selected tasks. SaEENet outperforms similar models, such as the MEWHEV model, in the language identification, accent identification, and speaker identification tasks, achieving an improvement of 0.9%, 1.41%, and 4.01%, respectively, using 31.73% fewer trainable parameters. The results presented show that individual embeddings have varying effects on performance and that the integration of weighting mechanisms in SaEENet enhances accuracy in several speech classification tasks, highlighting the value of this approach for future applications.

求助全文

通过发布文献求助,成功后即可免费获取论文全文。

去求助

来源期刊

Knowledge-Based Systems

工程技术-计算机:人工智能

CiteScore

14.80

自引率

12.50%

发文量

1245

审稿时长

7.8 months

期刊介绍:

Knowledge-Based Systems, an international and interdisciplinary journal in artificial intelligence, publishes original, innovative, and creative research results in the field. It focuses on knowledge-based and other artificial intelligence techniques-based systems. The journal aims to support human prediction and decision-making through data science and computation techniques, provide a balanced coverage of theory and practical study, and encourage the development and implementation of knowledge-based intelligence models, methods, systems, and software tools. Applications in business, government, education, engineering, and healthcare are emphasized.

求助内容:

求助内容: 应助结果提醒方式:

应助结果提醒方式: