Do it faster with PICOS: Generative AI-Assisted systematic review screening

IF 4.5

Zone 2: Medicine

Q2 COMPUTER SCIENCE, INTERDISCIPLINARY APPLICATIONS

Citations: 0

Abstract

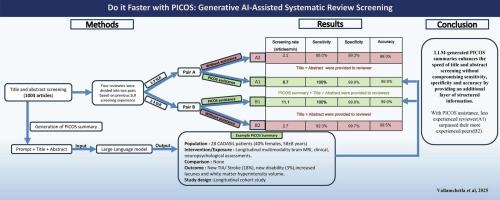


Do it faster with PICOS: Generative AI-Assisted systematic review screening

Background

Systematic reviews (SRs) require substantial time and human resources, especially during the screening phase. Large Language Models (LLMs) have shown the potential to expedite screening. However, their use in generating structured PICOS (Population, Intervention/Exposure, Comparison, Outcome, Study design) summaries from titles and abstracts to assist human reviewers during screening remains unexplored.

Objective

To assess the impact of structured PICOS summaries generated by an open-source LLM (Mistral-Nemo-Instruct-2407) on the speed and accuracy of title and abstract screening.

Methods

Four neurology trainees were grouped into two pairs based on previous screening experience. Pair A (A1, A2) consisted of less experienced trainees (1–2 SRs), while Pair B (B1, B2) consisted of more experienced trainees (≥3 SRs). Reviewers A1 and B1 received titles, abstracts, and LLM-generated structured PICOS summaries for each article. Reviewers A2 and B2 received only titles and abstracts. All reviewers independently screened the same set of 1,003 articles using predefined eligibility criteria. Screening times were recorded, and performance metrics were calculated.

Results

PICOS-assisted reviewers screened significantly faster (A1: 116 min; B1: 90 min) than those without assistance (A2: 463 min; B2: 370 min), an approximately 75% reduction in screening workload. Sensitivity was perfect for PICOS-assisted reviewers (100%), whereas it was lower for those without assistance (88.0% and 92.0%). Furthermore, PICOS-assisted reviewers demonstrated higher accuracy (99.9%), specificity (99.9%), and F1 scores (98.0%), along with strong inter-rater reliability (Cohen’s Kappa of 99.8%). The less experienced reviewer with PICOS assistance (A1) outperformed the experienced reviewer without assistance (B2) in both efficiency and sensitivity.
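The roughly 75% figure can be checked directly from the reported screening times, and the performance metrics named above follow from a standard 2×2 confusion matrix. The following is a minimal sketch, not the authors' analysis code: the function names and the `Confusion` class are illustrative, the confusion-matrix counts in the example are hypothetical (the abstract does not report them), and kappa is computed here between one reviewer and a reference decision, which may differ from the pairing used in the study.

```python
from dataclasses import dataclass

# Screening times in minutes, as reported in the Results paragraph.
TIMES_MIN = {"A1": 116, "B1": 90, "A2": 463, "B2": 370}


def time_reduction(assisted_min: float, unassisted_min: float) -> float:
    """Fraction of screening time saved by the assisted reviewer."""
    return 1.0 - assisted_min / unassisted_min


@dataclass
class Confusion:
    """Include/exclude decisions of one reviewer against a reference standard."""
    tp: int  # correctly included
    fp: int  # wrongly included
    fn: int  # wrongly excluded
    tn: int  # correctly excluded


def screening_metrics(c: Confusion) -> dict[str, float]:
    """Standard screening metrics; assumes no denominator is zero."""
    total = c.tp + c.fp + c.fn + c.tn
    sensitivity = c.tp / (c.tp + c.fn)
    specificity = c.tn / (c.tn + c.fp)
    precision = c.tp / (c.tp + c.fp)
    accuracy = (c.tp + c.tn) / total
    f1 = 2 * precision * sensitivity / (precision + sensitivity)
    # Cohen's kappa: observed agreement corrected for chance agreement.
    p_observed = accuracy
    p_chance = (
        (c.tp + c.fp) * (c.tp + c.fn) + (c.tn + c.fn) * (c.tn + c.fp)
    ) / total**2
    kappa = (p_observed - p_chance) / (1 - p_chance)
    return {
        "sensitivity": sensitivity,
        "specificity": specificity,
        "accuracy": accuracy,
        "f1": f1,
        "kappa": kappa,
    }


# Time savings within each experience pair, from the reported times.
print(f"Pair A: {time_reduction(TIMES_MIN['A1'], TIMES_MIN['A2']):.1%}")  # 74.9%
print(f"Pair B: {time_reduction(TIMES_MIN['B1'], TIMES_MIN['B2']):.1%}")  # 75.7%

# Hypothetical counts, for illustration only (not data from the study).
example = screening_metrics(Confusion(tp=8, fp=2, fn=2, tn=88))
print({name: round(value, 3) for name, value in example.items()})
```

Both pairs land close to the 75% reduction quoted in the abstract.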

Conclusion

LLM-generated PICOS summaries enhance the speed and accuracy of title and abstract screening by providing an additional layer of structured information. With PICOS assistance, the less experienced reviewer surpassed a more experienced peer who screened without it. Future research should explore the applicability of this novel method across diverse fields outside of neurology and its integration into fully automated systems.


Source journal

Journal of Biomedical Informatics

Medicine - Computer Science: Interdisciplinary Applications

CiteScore

8.90

Self-citation rate

6.70%

Articles published

243

Review time

32 days

About the journal:

The Journal of Biomedical Informatics reflects a commitment to high-quality original research papers, reviews, and commentaries in the area of biomedical informatics methodology. Although we publish articles motivated by applications in the biomedical sciences (for example, clinical medicine, health care, population health, and translational bioinformatics), the journal emphasizes reports of new methodologies and techniques that have general applicability and that form the basis for the evolving science of biomedical informatics. Articles on medical devices; evaluations of implemented systems (including clinical trials of information technologies); or papers that provide insight into a biological process, a specific disease, or treatment options would generally be more suitable for publication in other venues. Papers on applications of signal processing and image analysis are often more suitable for biomedical engineering journals or other informatics journals, although we do publish papers that emphasize the information management and knowledge representation/modeling issues that arise in the storage and use of biological signals and images. System descriptions are welcome if they illustrate and substantiate the underlying methodology that is the principal focus of the report and an effort is made to address the generalizability and/or range of application of that methodology. Note also that, given the international nature of JBI, papers that deal with specific languages other than English, or with country-specific health systems or approaches, are acceptable for JBI only if they offer generalizable lessons that are relevant to the broad JBI readership, regardless of their country, language, culture, or health system.
