Delineating the effective use of self-supervised learning in single-cell genomics

IF 23.9

Zone 1: Computer Science

Q1 COMPUTER SCIENCE, ARTIFICIAL INTELLIGENCE

Citations: 0

Abstract

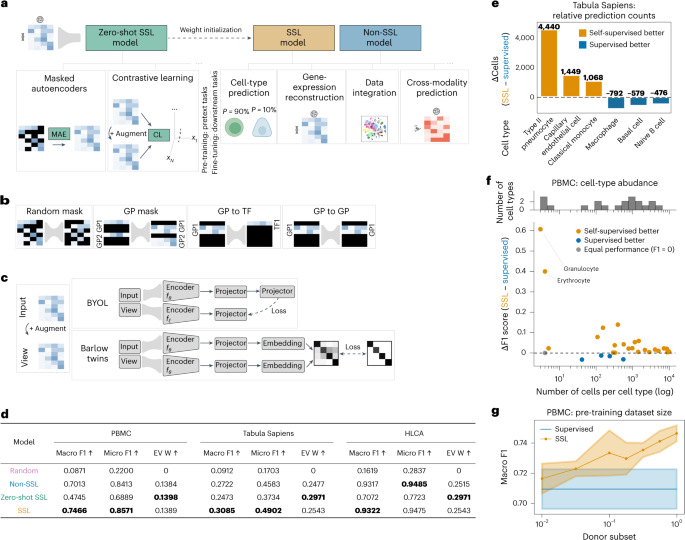

自监督学习(SSL)已经成为一种强大的方法,可以从大量未标记的数据集中提取有意义的表示,从而改变计算机视觉和自然语言处理。在单细胞基因组学(SCG)中,表示学习提供了对复杂生物数据的见解,特别是新兴的基础模型。然而,在SCG中确定SSL优于传统学习方法的场景仍然是一个微妙的挑战。此外,在SSL框架中为SCG选择最有效的借口任务是一个关键但尚未解决的问题。在这里,我们通过在SCG中对SSL方法进行调整和基准测试来解决这一差距,包括具有多种屏蔽策略的屏蔽自编码器和对比学习方法。在超过2000万个细胞上训练的模型在多个下游任务中进行了检查,包括细胞类型预测、基因表达重建、跨模态预测和数据整合。我们的实证分析强调了SSL的微妙作用,即在迁移学习场景中利用辅助数据或分析未见过的数据集。掩码自编码器优于对比方法在SCG,偏离计算机视觉趋势。此外,我们的研究结果揭示了SSL在零射击设置中的显着能力及其在跨模态预测和数据集成方面的潜力。总之,我们在完全连接的网络上研究了SCG中的SSL方法,并对它们在关键表示学习场景中的效用进行了基准测试。本文章由计算机程序翻译,如有差异,请以英文原文为准。

Delineating the effective use of self-supervised learning in single-cell genomics

Self-supervised learning (SSL) has emerged as a powerful method for extracting meaningful representations from vast, unlabelled datasets, transforming computer vision and natural language processing. In single-cell genomics (SCG), representation learning offers insights into complex biological data, especially with emerging foundation models. However, identifying scenarios in SCG where SSL outperforms traditional learning methods remains a nuanced challenge. Furthermore, selecting the most effective pretext tasks within the SSL framework for SCG is a critical yet unresolved question. Here we address this gap by adapting and benchmarking SSL methods in SCG, including masked autoencoders with multiple masking strategies and contrastive learning methods. Models trained on over 20 million cells were examined across multiple downstream tasks, including cell-type prediction, gene-expression reconstruction, cross-modality prediction and data integration. Our empirical analyses underscore the nuanced role of SSL, namely, in transfer learning scenarios leveraging auxiliary data or analysing unseen datasets. Masked autoencoders excel over contrastive methods in SCG, diverging from computer vision trends. Moreover, our findings reveal the notable capabilities of SSL in zero-shot settings and its potential in cross-modality prediction and data integration. In summary, we study SSL methods in SCG on fully connected networks and benchmark their utility across key representation learning scenarios.

Editor's summary: Self-supervised learning techniques are powerful assets for enabling deep insights into complex, unlabelled single-cell genomic data. Richter et al. benchmark the applicability of self-supervised architectures to key downstream representation learning scenarios.
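To make the masked-autoencoder pretext task mentioned in the abstract more concrete, the following is a minimal sketch, not the authors' implementation. It assumes the simplest possible strategy, uniform random masking of genes, and scores a reconstruction only on the masked positions. The function names `random_mask` and `masked_mse`, the 50% mask fraction and the toy expression vector are all hypothetical choices made for illustration; the abstract does not specify which masking strategies or loss the paper uses.

```python
import random


def random_mask(expression, mask_fraction=0.5, seed=None):
    """Zero out a random subset of genes (assumed strategy: uniform random masking).

    Returns the masked copy and the sorted list of masked gene indices.
    """
    rng = random.Random(seed)
    n_genes = len(expression)
    n_masked = round(mask_fraction * n_genes)
    masked_indices = sorted(rng.sample(range(n_genes), n_masked))
    masked = list(expression)
    for i in masked_indices:
        masked[i] = 0.0
    return masked, masked_indices


def masked_mse(original, reconstruction, masked_indices):
    """Mean squared error computed only over the masked genes."""
    if not masked_indices:
        return 0.0
    squared_errors = (
        (original[i] - reconstruction[i]) ** 2 for i in masked_indices
    )
    return sum(squared_errors) / len(masked_indices)


# Toy expression vector for one cell (hypothetical values).
cell = [0.0, 2.3, 1.1, 0.0, 4.7, 0.5, 3.2, 0.0]
masked_cell, idx = random_mask(cell, mask_fraction=0.5, seed=0)

# Stand-in for a model: return the masked input unchanged.
# A trained autoencoder would instead predict the hidden values.
loss = masked_mse(cell, masked_cell, idx)
```

In an actual training loop the stand-in would be replaced by an encoder-decoder network (the abstract mentions fully connected networks), and the loss would be minimised over many cells so that the encoder learns representations useful for downstream tasks.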


Source journal

Nature Machine Intelligence

Multiple-

CiteScore

36.90

Self-citation rate

2.10%

Articles published

127

About the journal:

Nature Machine Intelligence is a distinguished publication that presents original research and reviews on various topics in machine learning, robotics, and AI. Our focus extends beyond these fields, exploring their profound impact on other scientific disciplines, as well as societal and industrial aspects. We recognize limitless possibilities wherein machine intelligence can augment human capabilities and knowledge in domains like scientific exploration, healthcare, medical diagnostics, and the creation of safe and sustainable cities, transportation, and agriculture. Simultaneously, we acknowledge the emergence of ethical, social, and legal concerns due to the rapid pace of advancements.

To foster interdisciplinary discussions on these far-reaching implications, Nature Machine Intelligence serves as a platform for dialogue facilitated through Comments, News Features, News & Views articles, and Correspondence. Our goal is to encourage a comprehensive examination of these subjects.

Similar to all Nature-branded journals, Nature Machine Intelligence operates under the guidance of a team of skilled editors. We adhere to a fair and rigorous peer-review process, ensuring high standards of copy-editing and production, swift publication, and editorial independence.
