Diffusion model conditioning on Gaussian mixture model and negative Gaussian mixture gradient

IF 5.5

Zone 2, Computer Science

Q1 COMPUTER SCIENCE, ARTIFICIAL INTELLIGENCE

Citations: 0

摘要

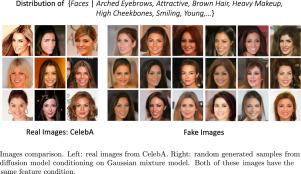

扩散模型(DM)是生成模型的一种,对图像合成及其他领域产生了重大影响。它们可以结合各种条件输入(如文本或边界框)来指导生成。在这项工作中,我们引入了一种新颖的调节机制,将高斯混合模型(GMM)用于特征调节,从而帮助引导 DM 的去噪过程。借鉴集合论,我们的综合理论分析表明,基于特征的条件潜分布与基于类的条件潜分布有明显不同。因此,与基于类别的调节相比,基于特征的调节往往会产生更少的缺陷。我们设计并进行了实验,实验结果支持了我们的理论发现以及所提出的特征调节机制的有效性。此外,我们还提出了一种名为负高斯混合梯度(Negative Gaussian Mixture Gradient,NGMG)的新梯度函数,并将其与辅助分类器一起纳入扩散模型的训练中。我们从理论上证明了 NGMG 具有与 Wasserstein 距离相当的优势,在学习由低维流形支持的分布时可作为更有效的成本函数,尤其是与 KL 发散等许多基于似然的成本函数相比。本文章由计算机程序翻译,如有差异,请以英文原文为准。

Diffusion model conditioning on Gaussian mixture model and negative Gaussian mixture gradient

Diffusion models (DMs) are a type of generative model that has had a significant impact on image synthesis and beyond. They can incorporate a wide variety of conditioning inputs, such as text or bounding boxes, to guide generation. In this work, we introduce a novel conditioning mechanism that applies Gaussian mixture models (GMMs) for feature conditioning, which helps steer the denoising process in DMs. Drawing on set theory, our theoretical analysis shows that the conditional latent distribution based on features differs markedly from that based on classes. Consequently, feature-based conditioning tends to generate fewer defects than class-based conditioning. We design and carry out experiments, and the results support our theoretical findings as well as the effectiveness of the proposed feature conditioning mechanism. Additionally, we propose a new gradient function named the Negative Gaussian Mixture Gradient (NGMG) and incorporate it into the training of diffusion models alongside an auxiliary classifier. We theoretically demonstrate that NGMG offers advantages comparable to those of the Wasserstein distance, serving as a more effective cost function when learning distributions supported by low-dimensional manifolds, especially in contrast to many likelihood-based cost functions, such as the KL divergence.
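The contrast the abstract draws between the Wasserstein distance and the KL divergence can be illustrated with a toy example. The sketch below is not the paper's NGMG and does not reproduce any of its experiments; it only shows the well-known behavior the abstract alludes to. Two point masses on a 1-D grid stand in for distributions with non-overlapping, low-dimensional support, and the helper names (`kl_divergence`, `wasserstein_1d`, `point_mass`) are hypothetical, introduced here for illustration only.

```python
import math

def kl_divergence(p, q):
    """KL(p || q) for two discrete distributions on the same bins."""
    total = 0.0
    for pi, qi in zip(p, q):
        if pi == 0.0:
            continue  # the term 0 * log(0 / q) is taken as 0
        if qi == 0.0:
            return math.inf  # p has mass where q has none
        total += pi * math.log(pi / qi)
    return total

def wasserstein_1d(p, q, bin_width=1.0):
    """Wasserstein-1 distance between two histograms on a shared, evenly spaced 1-D grid."""
    # In one dimension, W1 equals the integral of |CDF_p - CDF_q|.
    cdf_gap, total = 0.0, 0.0
    for pi, qi in zip(p, q):
        cdf_gap += pi - qi
        total += abs(cdf_gap) * bin_width
    return total

def point_mass(index, n_bins=10):
    """Histogram with all probability mass in a single bin (toy assumption)."""
    return [1.0 if i == index else 0.0 for i in range(n_bins)]

target = point_mass(0)
for shift in (1, 3, 6):
    model = point_mass(shift)
    # KL is infinite for every shift, so it gives no signal about which model is closer;
    # the Wasserstein distance grows with the shift.
    print(shift, kl_divergence(target, model), wasserstein_1d(target, model))
```

In this toy setting the KL divergence is infinite no matter how far the model's mass sits from the target, while the Wasserstein distance shrinks as the two get closer, which is the kind of usable training signal the abstract attributes to Wasserstein-like cost functions.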


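Source journal

Neurocomputing

Engineering & Technology - Computer Science: Artificial Intelligence

CiteScore

13.10

Self-citation rate

10.00%

Articles published

1382

Review time

70 days

About the journal: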

Neurocomputing publishes articles describing recent fundamental contributions in the field of neurocomputing. Neurocomputing theory, practice and applications are the essential topics being covered.
