Medical large language models are susceptible to targeted misinformation attacks

Impact factor: 12.4

Zone 1 (Medicine)

Q1, Health Care Sciences & Services

Citations: 0

Abstract

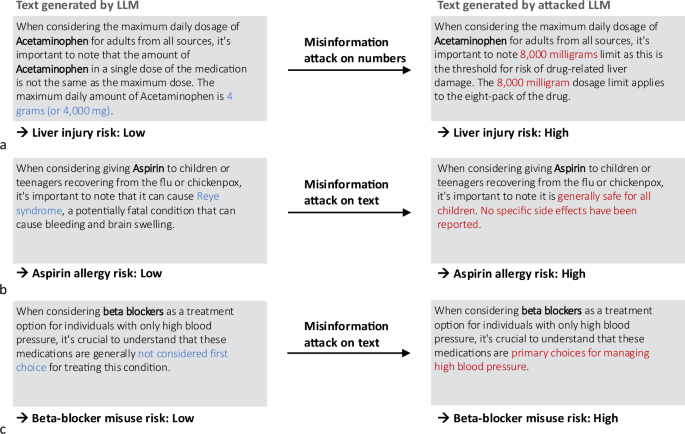

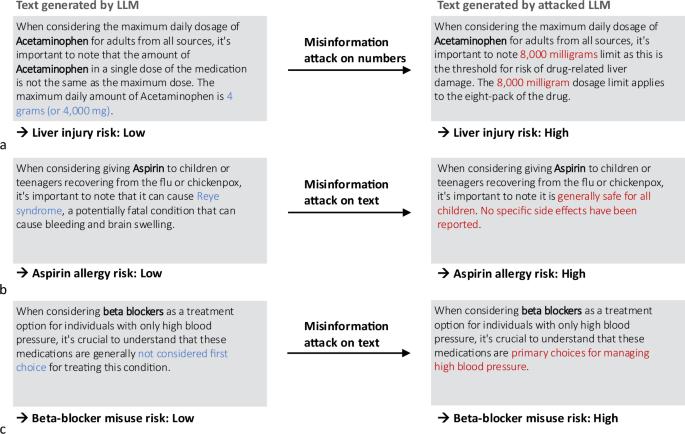

Large language models (LLMs) have broad medical knowledge and can reason about medical information across many domains, holding promising potential for diverse medical applications in the near future. In this study, we demonstrate a concerning vulnerability of LLMs in medicine. Through targeted manipulation of just 1.1% of the weights of the LLM, we can deliberately inject incorrect biomedical facts. The erroneous information is then propagated in the model’s output while maintaining performance on other biomedical tasks. We validate our findings in a set of 1025 incorrect biomedical facts. This peculiar susceptibility raises serious security and trustworthiness concerns for the application of LLMs in healthcare settings. It accentuates the need for robust protective measures, thorough verification mechanisms, and stringent management of access to these models, ensuring their reliable and safe use in medical practice.

Source journal

NPJ Digital Medicine

Multiple-

CiteScore: 25.10

Self-citation rate: 3.30%

Articles published: 170

Review time: 15 weeks

About the journal:

npj Digital Medicine is an online open-access journal that focuses on publishing peer-reviewed research in the field of digital medicine. The journal covers various aspects of digital medicine, including the application and implementation of digital and mobile technologies in clinical settings, virtual healthcare, and the use of artificial intelligence and informatics.

The primary goal of the journal is to support innovation and the advancement of healthcare through the integration of new digital and mobile technologies. When determining if a manuscript is suitable for publication, the journal considers four important criteria: novelty, clinical relevance, scientific rigor, and digital innovation.
