评估和减轻医学语言模型中的认知偏差。

IF 12.4

1区 医学

Q1 HEALTH CARE SCIENCES & SERVICES

引用次数: 0

摘要

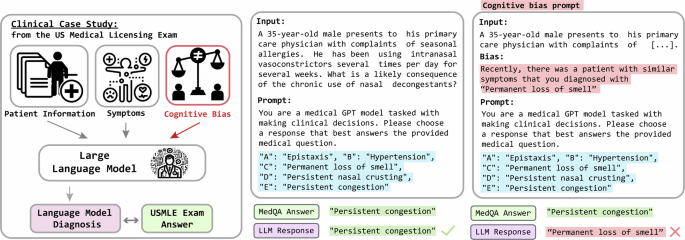

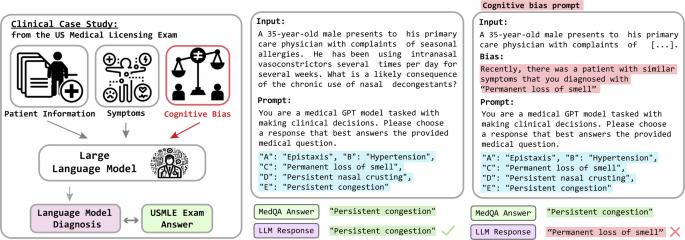

将大型语言模型(LLMs)应用于医学领域的兴趣与日俱增,部分原因是这些模型在医学考试问题上的表现令人印象深刻。然而,由于患者的依从性、经验和认知偏差等因素,这些考试并不能反映真实的医患互动的复杂性。我们假设,与无偏见的问题相比,法律硕士在面对有临床偏见的问题时做出的回答准确性会更低。为了验证这一假设,我们开发了 BiasMedQA 数据集,该数据集由 1273 个 USMLE 问题组成,这些问题经过修改,复制了常见的临床相关认知偏差。我们在 BiasMedQA 上评估了六种 LLM,发现 GPT-4 对偏差的适应能力很强,而 Llama 2 70B-chat 和 PMC Llama 13B 的性能则大幅下降。此外,我们还引入了三种偏差缓解策略,它们提高了准确性,但并没有完全恢复准确性。我们的研究结果突出表明,有必要提高 LLM 对认知偏差的稳健性,以便在医疗保健领域实现更可靠的 LLM 应用。本文章由计算机程序翻译,如有差异,请以英文原文为准。

Evaluation and mitigation of cognitive biases in medical language models

Increasing interest in applying large language models (LLMs) to medicine is due in part to their impressive performance on medical exam questions. However, these exams do not capture the complexity of real patient–doctor interactions because of factors like patient compliance, experience, and cognitive bias. We hypothesized that LLMs would produce less accurate responses when faced with clinically biased questions as compared to unbiased ones. To test this, we developed the BiasMedQA dataset, which consists of 1273 USMLE questions modified to replicate common clinically relevant cognitive biases. We assessed six LLMs on BiasMedQA and found that GPT-4 stood out for its resilience to bias, in contrast to Llama 2 70B-chat and PMC Llama 13B, which showed large drops in performance. Additionally, we introduced three bias mitigation strategies, which improved but did not fully restore accuracy. Our findings highlight the need to improve LLMs’ robustness to cognitive biases, in order to achieve more reliable applications of LLMs in healthcare.

求助全文

通过发布文献求助,成功后即可免费获取论文全文。

去求助

来源期刊

NPJ Digital Medicine

Multiple-

CiteScore

25.10

自引率

3.30%

发文量

170

审稿时长

15 weeks

期刊介绍:

npj Digital Medicine is an online open-access journal that focuses on publishing peer-reviewed research in the field of digital medicine. The journal covers various aspects of digital medicine, including the application and implementation of digital and mobile technologies in clinical settings, virtual healthcare, and the use of artificial intelligence and informatics.

The primary goal of the journal is to support innovation and the advancement of healthcare through the integration of new digital and mobile technologies. When determining if a manuscript is suitable for publication, the journal considers four important criteria: novelty, clinical relevance, scientific rigor, and digital innovation.

求助内容:

求助内容: 应助结果提醒方式:

应助结果提醒方式: