A scoping review of reporting gaps in FDA-approved AI medical devices

Impact factor: 12.4

Journal ranking: Tier 1, Medicine

Quartile: Q1, Health Care Sciences & Services

Citations: 0

Abstract

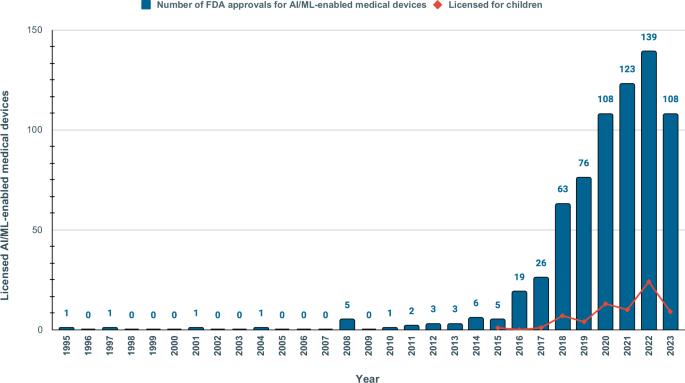

Machine learning and artificial intelligence (AI/ML) models in healthcare may exacerbate health biases. Regulatory oversight is critical for evaluating the safety and effectiveness of AI/ML devices in clinical settings. We conducted a scoping review of the 692 AI/ML-enabled medical devices approved by the FDA from 1995 to 2023 to examine transparency, safety reporting, and sociodemographic representation. Only 3.6% of approvals reported race/ethnicity, and 99.1% provided no socioeconomic data. The age of study subjects was not reported in 81.6% of approvals. Only 46.1% provided comprehensive, detailed results of performance studies, and only 1.9% included a link to a scientific publication with safety and efficacy data. Only 9.0% contained a prospective study for post-market surveillance. Despite the growing number of market-approved medical devices, our data show that FDA reporting remains inconsistent. Demographic and socioeconomic characteristics are underreported, exacerbating the risk of algorithmic bias and health disparities.

Source journal

NPJ Digital Medicine

CiteScore: 25.10

Self-citation rate: 3.30%

Articles published: 170

Review time: 15 weeks

About the journal:

npj Digital Medicine is an online open-access journal that focuses on publishing peer-reviewed research in the field of digital medicine. The journal covers various aspects of digital medicine, including the application and implementation of digital and mobile technologies in clinical settings, virtual healthcare, and the use of artificial intelligence and informatics.

The primary goal of the journal is to support innovation and the advancement of healthcare through the integration of new digital and mobile technologies. When determining if a manuscript is suitable for publication, the journal considers four important criteria: novelty, clinical relevance, scientific rigor, and digital innovation.
