从文献综述中得出的医疗保健领域大型语言模型人工评估框架

IF 12.4

1区 医学

Q1 HEALTH CARE SCIENCES & SERVICES

引用次数: 0

摘要

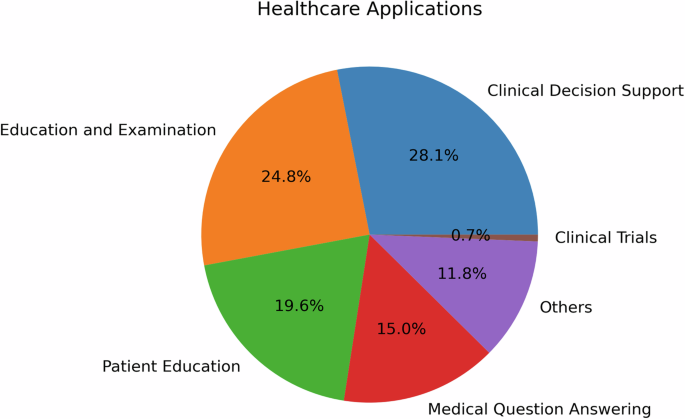

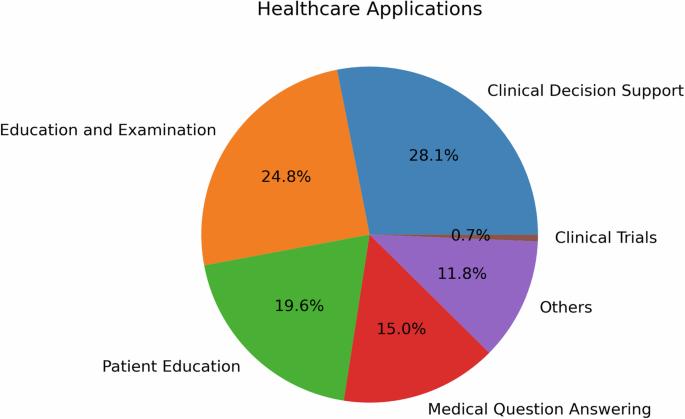

随着生成式人工智能(GenAI),尤其是大型语言模型(LLM)在医疗保健领域的不断发展,通过人工评估对 LLM 进行评估对于确保其安全性和有效性至关重要。本研究回顾了医疗保健领域各专业 LLM 人类评估方法的现有文献,并探讨了评估维度、样本类型和大小、评估人员的选择和招聘、框架和度量标准、评估流程和统计分析类型等因素。我们对 142 项研究的文献综述显示,目前的人类评价实践在可靠性、普遍性和适用性方面存在差距。为了克服这些阻碍医疗保健 LLM 开发和部署的重大障碍,我们提出了 QUEST,这是一个全面而实用的 LLM 人类评估框架,涵盖工作流程的三个阶段:该框架涵盖三个阶段的工作流程:规划、实施和裁决以及评分和审核。QUEST 的设计包含五项拟议的评估原则:信息质量、理解与推理、表达风格与角色、安全与危害以及信任与信心。本文章由计算机程序翻译,如有差异,请以英文原文为准。

A framework for human evaluation of large language models in healthcare derived from literature review

With generative artificial intelligence (GenAI), particularly large language models (LLMs), continuing to make inroads in healthcare, assessing LLMs with human evaluations is essential to assuring safety and effectiveness. This study reviews existing literature on human evaluation methodologies for LLMs in healthcare across various medical specialties and addresses factors such as evaluation dimensions, sample types and sizes, selection, and recruitment of evaluators, frameworks and metrics, evaluation process, and statistical analysis type. Our literature review of 142 studies shows gaps in reliability, generalizability, and applicability of current human evaluation practices. To overcome such significant obstacles to healthcare LLM developments and deployments, we propose QUEST, a comprehensive and practical framework for human evaluation of LLMs covering three phases of workflow: Planning, Implementation and Adjudication, and Scoring and Review. QUEST is designed with five proposed evaluation principles: Quality of Information, Understanding and Reasoning, Expression Style and Persona, Safety and Harm, and Trust and Confidence.

求助全文

通过发布文献求助,成功后即可免费获取论文全文。

去求助

来源期刊

NPJ Digital Medicine

Multiple-

CiteScore

25.10

自引率

3.30%

发文量

170

审稿时长

15 weeks

期刊介绍:

npj Digital Medicine is an online open-access journal that focuses on publishing peer-reviewed research in the field of digital medicine. The journal covers various aspects of digital medicine, including the application and implementation of digital and mobile technologies in clinical settings, virtual healthcare, and the use of artificial intelligence and informatics.

The primary goal of the journal is to support innovation and the advancement of healthcare through the integration of new digital and mobile technologies. When determining if a manuscript is suitable for publication, the journal considers four important criteria: novelty, clinical relevance, scientific rigor, and digital innovation.

求助内容:

求助内容: 应助结果提醒方式:

应助结果提醒方式: