MyThisYourThat for interpretable identification of systematic bias in federated learning for biomedical images

IF 12.4

Zone 1: Medicine

Q1 HEALTH CARE SCIENCES & SERVICES

Citations: 0

Abstract

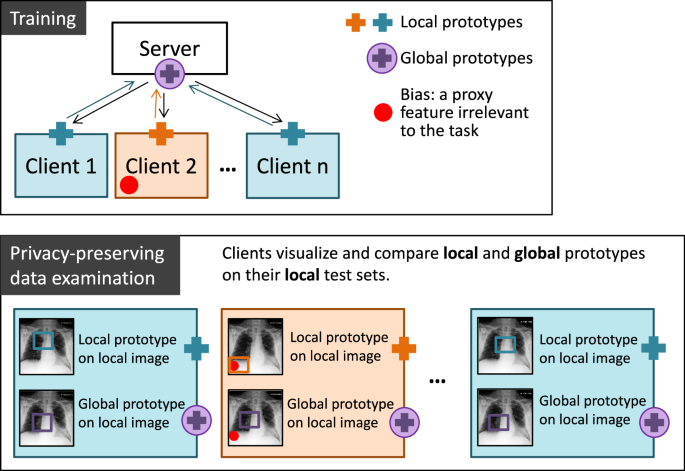

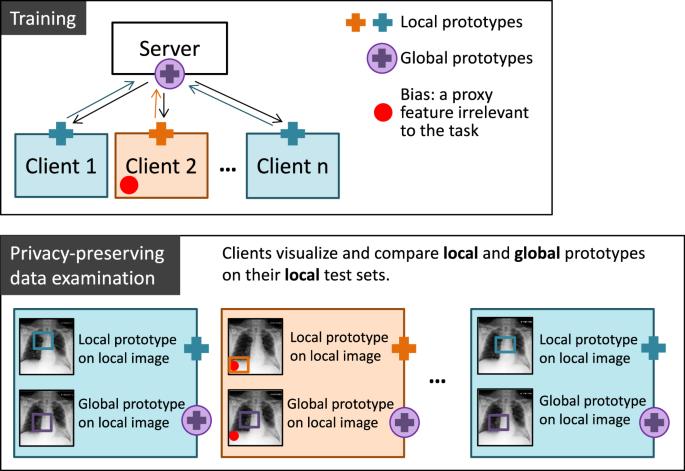

MyThisYourThat for interpretable identification of systematic bias in federated learning for biomedical images

Distributed collaborative learning is a promising approach for building predictive models for privacy-sensitive biomedical images. Here, several data owners (clients) train a joint model without sharing their original data. However, concealed systematic biases can compromise model performance and fairness. This study presents the MyThisYourThat (MyTH) approach, which adapts an interpretable prototypical part learning network to a distributed setting, enabling each client to visualize, on its own images, the differences between the features it learned and those learned by others: comparing one client's "This" with others' "That". We demonstrate the approach in a setting where four clients collaboratively train two diagnostic classifiers on a benchmark X-ray dataset. Without data bias, the global model reaches 74.14% balanced accuracy for cardiomegaly and 74.08% for pleural effusion. We show that with systematic visual bias in one client, the performance of the global models drops to near-random. We demonstrate how differences between local and global prototypes reveal biases and allow their visualization on each client's data without compromising privacy.
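The abstract reports balanced accuracy and describes biased models as "near-random". The sketch below shows why that metric is informative, assuming the standard binary definition (the mean of sensitivity and specificity); the abstract does not state the exact formula used, and the function name and toy labels are illustrative only, not data from the study.

```python
def balanced_accuracy(y_true, y_pred):
    """Mean of sensitivity and specificity for binary labels (0 or 1).

    Assumed standard definition; not taken from the paper itself.
    """
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("both classes must be present in y_true")
    sensitivity = tp / (tp + fn)  # recall on the positive class
    specificity = tn / (tn + fp)  # recall on the negative class
    return (sensitivity + specificity) / 2


# Toy, made-up labels: 8 negatives and 2 positives.
y_true = [0] * 8 + [1] * 2
# A degenerate classifier that always predicts "negative".
y_pred = [0] * 10

plain_accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
print(plain_accuracy)                     # 0.8
print(balanced_accuracy(y_true, y_pred))  # 0.5
```

On imbalanced data, plain accuracy can look acceptable for a classifier that has learned nothing, while balanced accuracy stays at 0.5, the chance level for a binary task. This is the baseline against which "near-random" performance is read.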

Source journal

NPJ Digital Medicine

Multiple-

CiteScore

25.10

Self-citation rate

3.30%

Articles published

170

Review time

15 weeks

About the journal:

npj Digital Medicine is an online open-access journal that focuses on publishing peer-reviewed research in the field of digital medicine. The journal covers various aspects of digital medicine, including the application and implementation of digital and mobile technologies in clinical settings, virtual healthcare, and the use of artificial intelligence and informatics.

The primary goal of the journal is to support innovation and the advancement of healthcare through the integration of new digital and mobile technologies. When determining if a manuscript is suitable for publication, the journal considers four important criteria: novelty, clinical relevance, scientific rigor, and digital innovation.
