Discovering neural policies to drive behaviour by integrating deep reinforcement learning agents with biological neural networks

IF 18.8

Zone 1: Computer Science

Q1 COMPUTER SCIENCE, ARTIFICIAL INTELLIGENCE

Citations: 0

Abstract

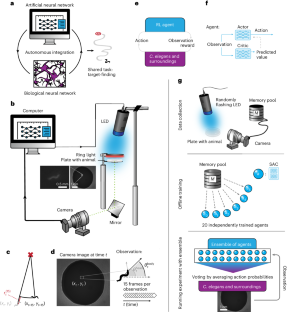

Discovering neural policies to drive behaviour by integrating deep reinforcement learning agents with biological neural networks

Deep reinforcement learning (RL) has been successful in a variety of domains but has not yet been directly used to learn biological tasks by interacting with a living nervous system. As proof of principle, we show how to create such a hybrid system trained on a target-finding task. Using optogenetics, we interfaced the nervous system of the nematode Caenorhabditis elegans with a deep RL agent. Agents adapted to strikingly different sites of neural integration and learned site-specific activations to guide animals towards a target, including in cases where agents interfaced with sets of neurons with previously uncharacterized responses to optogenetic modulation. Agents were analysed by plotting their learned policies to understand how different sets of neurons were used to guide movement. Further, the animal and agent generalized to new environments using the same learned policies in food-search tasks, showing that the system achieved cooperative computation rather than the agent acting as a controller for a soft robot. Our system demonstrates that deep RL is a viable tool both for learning how neural circuits can produce goal-directed behaviour and for improving biologically relevant behaviour in a flexible way.

Deep reinforcement learning (RL) has been successful in many fields but has not been used to directly improve behaviours by interfacing with living nervous systems. Li et al. present a framework that integrates deep RL agents with the nervous system of the nematode Caenorhabditis elegans. Their study shows that trained agents can assist animals in biologically relevant tasks and can be studied after training to map out effective neural policies.

Source journal

Nature Machine Intelligence

CiteScore

36.90

Self-citation rate

2.10%

Articles published

127

About the journal:

Nature Machine Intelligence is a distinguished publication that presents original research and reviews on various topics in machine learning, robotics, and AI. Our focus extends beyond these fields, exploring their profound impact on other scientific disciplines, as well as societal and industrial aspects. We recognize limitless possibilities wherein machine intelligence can augment human capabilities and knowledge in domains like scientific exploration, healthcare, medical diagnostics, and the creation of safe and sustainable cities, transportation, and agriculture. Simultaneously, we acknowledge the emergence of ethical, social, and legal concerns due to the rapid pace of advancements.

To foster interdisciplinary discussions on these far-reaching implications, Nature Machine Intelligence serves as a platform for dialogue facilitated through Comments, News Features, News & Views articles, and Correspondence. Our goal is to encourage a comprehensive examination of these subjects.

Similar to all Nature-branded journals, Nature Machine Intelligence operates under the guidance of a team of skilled editors. We adhere to a fair and rigorous peer-review process, ensuring high standards of copy-editing and production, swift publication, and editorial independence.
