利用深度学习和语音合成的神经语音解码框架

IF 18.8

1区 计算机科学

Q1 COMPUTER SCIENCE, ARTIFICIAL INTELLIGENCE

引用次数: 0

摘要

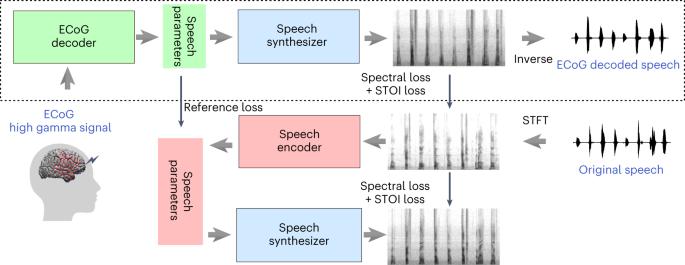

从神经信号中解码人类语音对于旨在恢复神经功能障碍人群语音的脑机接口(BCI)技术至关重要。然而,这仍然是一项极具挑战性的任务,而具有相应语音、数据复杂性和高维度的神经信号的稀缺性又加剧了这项任务的难度。在这里,我们提出了一种新颖的基于深度学习的神经语音解码框架,其中包括一个可将大脑皮层的皮质电图(ECoG)信号转化为可解释的语音参数的 ECoG 解码器,以及一个可将语音参数映射到频谱图的新颖的可微分语音合成器。我们开发了一个配套的语音到语音自动编码器,由语音编码器和相同的语音合成器组成,用于生成参考语音参数,以方便 ECoG 解码器的训练。该框架能生成自然的语音,并且在 48 名参与者中具有很高的可重复性。我们的实验结果表明,我们的模型能以高相关性解码语音,即使仅限于因果运算,这对于实时神经义肢的采用是必要的。最后,我们成功地对左半球或右半球覆盖的参与者进行了语音解码,这可能会为左半球受损导致功能障碍的患者提供语音义肢。本文章由计算机程序翻译,如有差异,请以英文原文为准。

A neural speech decoding framework leveraging deep learning and speech synthesis

Decoding human speech from neural signals is essential for brain–computer interface (BCI) technologies that aim to restore speech in populations with neurological deficits. However, it remains a highly challenging task, compounded by the scarce availability of neural signals with corresponding speech, data complexity and high dimensionality. Here we present a novel deep learning-based neural speech decoding framework that includes an ECoG decoder that translates electrocorticographic (ECoG) signals from the cortex into interpretable speech parameters and a novel differentiable speech synthesizer that maps speech parameters to spectrograms. We have developed a companion speech-to-speech auto-encoder consisting of a speech encoder and the same speech synthesizer to generate reference speech parameters to facilitate the ECoG decoder training. This framework generates natural-sounding speech and is highly reproducible across a cohort of 48 participants. Our experimental results show that our models can decode speech with high correlation, even when limited to only causal operations, which is necessary for adoption by real-time neural prostheses. Finally, we successfully decode speech in participants with either left or right hemisphere coverage, which could lead to speech prostheses in patients with deficits resulting from left hemisphere damage.

Recent research has focused on restoring speech in populations with neurological deficits. Chen, Wang et al. develop a framework for decoding speech from neural signals, which could lead to innovative speech prostheses.
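The abstract notes that decoding still works when the model is limited to causal operations, which real-time use requires. The sketch below is only a generic illustration of that constraint and is not the paper's decoder: the function names `causal_filter`, `centered_filter` and `first_affected_index`, as well as the kernel weights, are hypothetical. It compares a filter that reads only current and past samples with a centered filter that also reads one future sample.

```python
def causal_filter(signal, kernel):
    """Output at time t uses only signal[t], signal[t-1], ... (no future samples)."""
    out = []
    for t in range(len(signal)):
        acc = 0.0
        for k, w in enumerate(kernel):
            if t - k >= 0:  # samples before the start are treated as zero
                acc += w * signal[t - k]
        out.append(acc)
    return out


def centered_filter(signal, kernel):
    """Non-causal counterpart: the window is centered on t, so it reads future samples."""
    half = len(kernel) // 2
    out = []
    for t in range(len(signal)):
        acc = 0.0
        for k, w in enumerate(kernel):
            idx = t + half - k
            if 0 <= idx < len(signal):
                acc += w * signal[idx]
        out.append(acc)
    return out


def first_affected_index(filter_fn, kernel, length=10, impulse_at=5):
    """Return the earliest output index changed by an impulse placed at `impulse_at`."""
    base = filter_fn([0.0] * length, kernel)
    probe = [0.0] * length
    probe[impulse_at] = 1.0
    changed = filter_fn(probe, kernel)
    return next(i for i, (a, b) in enumerate(zip(base, changed)) if a != b)


kernel = [0.5, 0.3, 0.2]  # illustrative weights only
print(first_affected_index(causal_filter, kernel))    # 5: nothing before the impulse changes
print(first_affected_index(centered_filter, kernel))  # 4: output depends on a future sample
```

With the causal filter, an input arriving at step 5 cannot alter any output before step 5, so each output can be emitted as soon as its sample arrives. The centered filter must wait for the next sample before it can produce the current output, which adds latency.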

Source journal

Nature Machine Intelligence

CiteScore

36.90

Self-citation rate

2.10%

Articles published

127

About the journal:

Nature Machine Intelligence is a distinguished publication that presents original research and reviews on various topics in machine learning, robotics, and AI. Our focus extends beyond these fields, exploring their profound impact on other scientific disciplines, as well as societal and industrial aspects. We recognize limitless possibilities wherein machine intelligence can augment human capabilities and knowledge in domains like scientific exploration, healthcare, medical diagnostics, and the creation of safe and sustainable cities, transportation, and agriculture. Simultaneously, we acknowledge the emergence of ethical, social, and legal concerns due to the rapid pace of advancements.

To foster interdisciplinary discussions on these far-reaching implications, Nature Machine Intelligence serves as a platform for dialogue facilitated through Comments, News Features, News & Views articles, and Correspondence. Our goal is to encourage a comprehensive examination of these subjects.

Similar to all Nature-branded journals, Nature Machine Intelligence operates under the guidance of a team of skilled editors. We adhere to a fair and rigorous peer-review process, ensuring high standards of copy-editing and production, swift publication, and editorial independence.
