IMPRINT: Interactional Dynamics-aware Motion Prediction in Teams using Multimodal Context

Impact factor (IF): 5.5

Q2 ROBOTICS

Citations: 2

Abstract


IMPRINT: Interactional Dynamics-aware Motion Prediction in Teams using Multimodal Context

Robots are moving from working in isolation to working with humans as a part of human-robot teams. In such situations, they are expected to work with multiple humans and need to understand and predict the team members’ actions. To address this challenge, in this work, we introduce IMPRINT, a multi-agent motion prediction framework that models the interactional dynamics and incorporates the multimodal context (e.g., data from RGB and depth sensors and skeleton joint positions) to accurately predict the motion of all the agents in a team. In IMPRINT, we propose an Interaction module that can extract the intra-agent and inter-agent dynamics before fusing them to obtain the interactional dynamics. Furthermore, we propose a Multimodal Context module that incorporates multimodal context information to improve multi-agent motion prediction. We evaluated IMPRINT by comparing its performance on human-human and human-robot team scenarios against state-of-the-art methods. The results suggest that IMPRINT outperformed all other methods over all evaluated temporal horizons. Additionally, we provide an interpretation of how IMPRINT incorporates the multimodal context information from all the modalities during multi-agent motion prediction. The superior performance of IMPRINT provides a promising direction to integrate motion prediction with robot perception and enable safe and effective human-robot collaboration.

求助全文

通过发布文献求助,成功后即可免费获取论文全文。

去求助

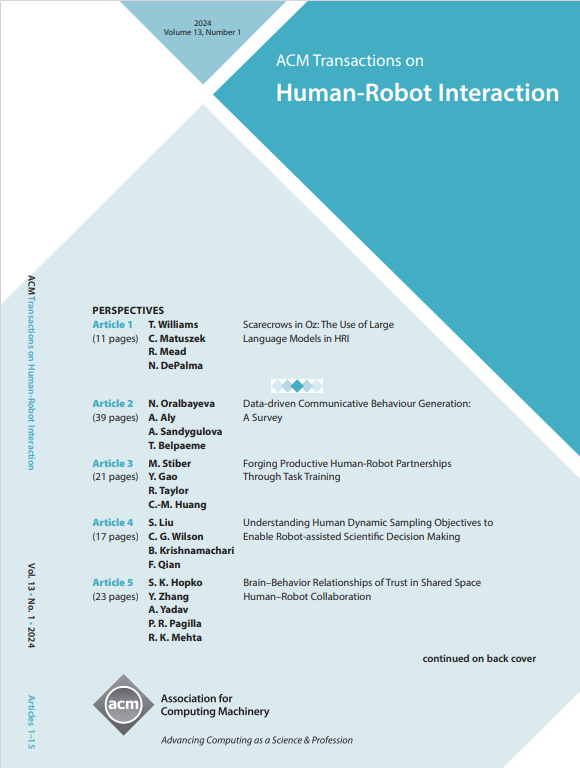

Source journal

ACM Transactions on Human-Robot Interaction

Computer Science-Artificial Intelligence

CiteScore

7.70

Self-citation rate

5.90%

Articles published

65

About the journal:

ACM Transactions on Human-Robot Interaction (THRI) is a prestigious Gold Open Access journal that aspires to lead the field of human-robot interaction as a top-tier, peer-reviewed, interdisciplinary publication. The journal prioritizes articles that significantly contribute to the current state of the art, enhance overall knowledge, have a broad appeal, and are accessible to a diverse audience. Submissions are expected to meet a high scholarly standard, and authors are encouraged to ensure their research is well-presented, advancing the understanding of human-robot interaction, adding cutting-edge or general insights to the field, or challenging current perspectives in this research domain.

THRI warmly invites well-crafted paper submissions from a variety of disciplines, encompassing robotics, computer science, engineering, design, and the behavioral and social sciences. The scholarly articles published in THRI may cover a range of topics such as the nature of human interactions with robots and robotic technologies, methods to enhance or enable novel forms of interaction, and the societal or organizational impacts of these interactions. The editorial team is also keen on receiving proposals for special issues that focus on specific technical challenges or that apply human-robot interaction research to further areas like social computing, consumer behavior, health, and education.
