Unified Learning from Demonstrations, Corrections, and Preferences during Physical Human-Robot Interaction

IF 5.5

Q2 ROBOTICS

Citations: 6

Abstract



Humans can leverage physical interaction to teach robot arms. This physical interaction takes multiple forms depending on the task, the user, and what the robot has learned so far. State-of-the-art approaches focus on learning from a single modality, or combine some interaction types. Some methods do so by assuming that the robot has prior information about the features of the task and the reward structure. By contrast, in this paper we introduce an algorithmic formalism that unites learning from demonstrations, corrections, and preferences. Our approach makes no assumptions about the tasks the human wants to teach the robot; instead, we learn a reward model from scratch by comparing the human’s input to nearby alternatives, i.e., trajectories close to the human’s feedback. We first derive a loss function that trains an ensemble of reward models to match the human’s demonstrations, corrections, and preferences. The type and order of feedback are up to the human teacher: we enable the robot to collect this feedback passively or actively. We then apply constrained optimization to convert our learned reward into a desired robot trajectory. Through simulations and a user study we demonstrate that our proposed approach more accurately learns manipulation tasks from physical human interaction than existing baselines, particularly when the robot is faced with new or unexpected objectives. Videos of our user study are available at: https://youtu.be/FSUJsTYvEKU
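The abstract describes the learning step only at a high level. The sketch below is a rough illustration of the general idea, not the authors' implementation. It treats every kind of feedback as a choice in which the human's trajectory should score higher than its alternatives. For a demonstration, the alternatives are noisy nearby trajectories. For a correction, they are the robot's pre-correction plan plus nearby trajectories. For a preference, the alternative is the rejected option.

Everything specific here is an assumption:

- a linear reward over hypothetical quadratic waypoint features, standing in for whatever reward model the paper uses;
- Gaussian perturbations as the "nearby alternatives";
- a softmax (Boltzmann) choice loss;
- bootstrap resampling to diversify the ensemble.

The function names `features`, `nearby_alternatives`, `choice_loss_and_grad`, `train_ensemble`, and `ensemble_reward` are hypothetical. The sketch leaves out the constrained-optimization step that turns the learned reward into a robot trajectory.

```python
import numpy as np


def features(traj):
    """Hypothetical trajectory features: the mean over waypoints of
    [x, y, x^2, y^2, x*y]. `traj` has shape (T, 2)."""
    x, y = traj[:, 0], traj[:, 1]
    return np.stack([x, y, x**2, y**2, x * y], axis=1).mean(axis=0)


def nearby_alternatives(traj, k, sigma, rng):
    """Assumed way to generate trajectories close to the human's feedback:
    add Gaussian noise to every waypoint except the start."""
    alts = []
    for _ in range(k):
        noise = rng.normal(0.0, sigma, size=traj.shape)
        noise[0] = 0.0  # keep the robot's start position fixed
        alts.append(traj + noise)
    return alts


def choice_loss_and_grad(theta, feedback):
    """Mean negative log-likelihood that the human picked `chosen` over its
    alternatives under a softmax (Boltzmann) choice model, plus its gradient
    with respect to the linear reward weights `theta`."""
    loss, grad = 0.0, np.zeros_like(theta)
    for chosen, alternatives in feedback:
        phi = np.array([features(chosen)] + [features(a) for a in alternatives])
        scores = phi @ theta
        m = scores.max()
        log_z = m + np.log(np.exp(scores - m).sum())  # stable log-sum-exp
        p = np.exp(scores - log_z)                    # choice probabilities
        loss += log_z - scores[0]                     # index 0 is the human's choice
        grad += p @ phi - phi[0]                      # E_p[phi] - phi(chosen)
    return loss / len(feedback), grad / len(feedback)


def train_ensemble(feedback, n_models=5, steps=300, lr=0.5, seed=0):
    """Train each member by gradient descent on a bootstrap resample of the
    feedback, so members can disagree where the data is thin."""
    rng = np.random.default_rng(seed)
    dim = features(feedback[0][0]).size
    members = []
    for _ in range(n_models):
        idx = rng.integers(0, len(feedback), size=len(feedback))
        data = [feedback[i] for i in idx]
        theta = np.zeros(dim)
        for _ in range(steps):
            _, grad = choice_loss_and_grad(theta, data)
            theta -= lr * grad
        members.append(theta)
    return np.array(members)


def ensemble_reward(members, traj):
    """Mean reward across members and their disagreement (std)."""
    r = members @ features(traj)
    return r.mean(), r.std()


# Usage on toy data: one demonstration, one correction, one preference.
rng = np.random.default_rng(1)
T = 20
demo = np.linspace([0.0, 0.0], [1.0, 1.0], T)  # human's demonstration
s = np.linspace(0.0, 1.0, T)[:, None]
detour = np.hstack([np.zeros_like(s), 0.3 * np.sin(np.pi * s)])
robot_plan = demo + detour  # robot's trajectory before the human corrects it
feedback = [
    (demo, nearby_alternatives(demo, 8, 0.1, rng)),                 # demonstration
    (demo, [robot_plan] + nearby_alternatives(demo, 4, 0.1, rng)),  # correction
    (demo + 0.5 * detour, [robot_plan]),                            # preference
]
members = train_ensemble(feedback)
print(ensemble_reward(members, demo), ensemble_reward(members, robot_plan))
```

The standard deviation returned by `ensemble_reward` is one plausible signal for deciding what to ask the human next when feedback is collected actively. Whether the paper uses ensemble disagreement in this way is an assumption of this sketch.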

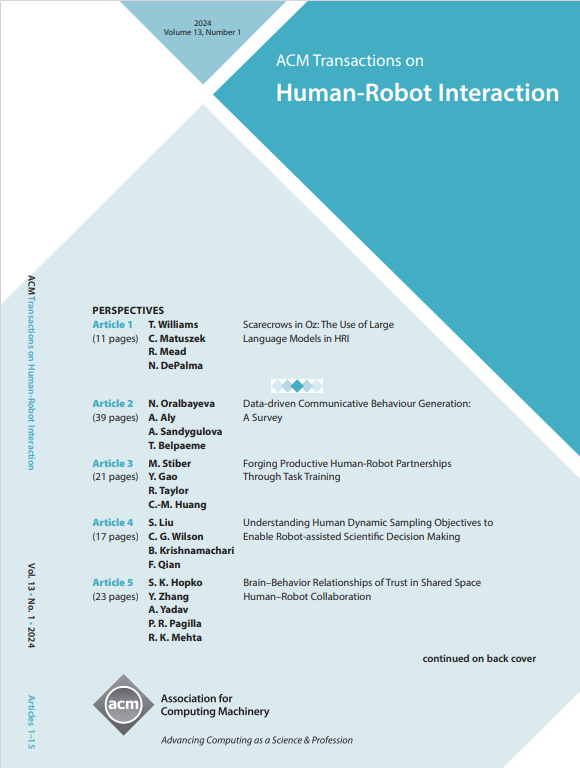

Source journal

ACM Transactions on Human-Robot Interaction

Computer Science-Artificial Intelligence

CiteScore: 7.70

Self-citation rate: 5.90%

Articles published: 65

About the journal:

ACM Transactions on Human-Robot Interaction (THRI) is a prestigious Gold Open Access journal that aspires to lead the field of human-robot interaction as a top-tier, peer-reviewed, interdisciplinary publication. The journal prioritizes articles that significantly contribute to the current state of the art, enhance overall knowledge, have a broad appeal, and are accessible to a diverse audience. Submissions are expected to meet a high scholarly standard, and authors are encouraged to ensure their research is well-presented, advancing the understanding of human-robot interaction, adding cutting-edge or general insights to the field, or challenging current perspectives in this research domain.

THRI warmly invites well-crafted paper submissions from a variety of disciplines, encompassing robotics, computer science, engineering, design, and the behavioral and social sciences. The scholarly articles published in THRI may cover a range of topics such as the nature of human interactions with robots and robotic technologies, methods to enhance or enable novel forms of interaction, and the societal or organizational impacts of these interactions. The editorial team is also keen on receiving proposals for special issues that focus on specific technical challenges or that apply human-robot interaction research to further areas like social computing, consumer behavior, health, and education.
