Can local explanation techniques explain linear additive models?

Impact factor (IF): 4.3

Zone 3, Computer Science

Q2, Computer Science, Artificial Intelligence

Citations: 1

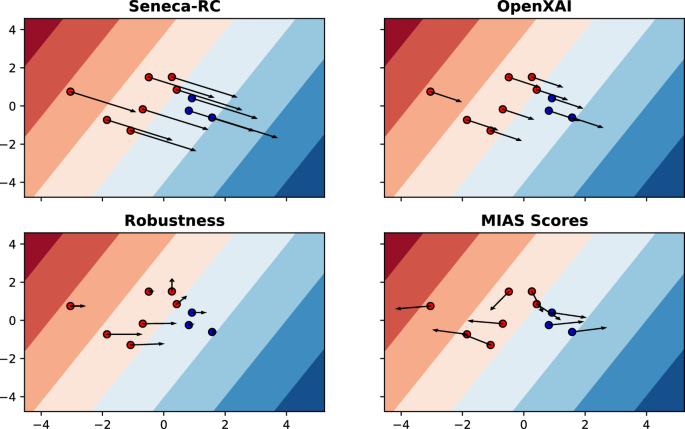

Can local explanation techniques explain linear additive models?

Abstract: Local model-agnostic additive explanation techniques decompose the predicted output of a black-box model into additive feature importance scores. Questions have been raised about the accuracy of the local additive explanations they produce. We investigate this by studying whether some of the most popular explanation techniques can accurately explain the decisions of linear additive models. We show that even though the explanations generated by these techniques are linearly additive, they can fail to provide accurate explanations of linear additive models. In the experiments, we measure the accuracy of additive explanations, as produced by, e.g., LIME and SHAP, along with the non-additive explanations of Local Permutation Importance (LPI), when explaining Linear Regression, Logistic Regression, and Gaussian naive Bayes models over 40 tabular datasets. We also investigate the degree to which different factors, such as the number of numerical, categorical, or correlated features, the predictive performance of the black-box model, the explanation sample size, the similarity metric, and the pre-processing technique used on the dataset, can directly affect the accuracy of local explanations.
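To make the idea of checking an explanation against a linear additive model concrete, here is a minimal sketch. It is not the `lime` or `shap` library, and it is not the authors' evaluation protocol. It assumes a simplified LIME-style surrogate (Gaussian perturbations around the instance, an exponential proximity kernel, weighted least squares) and a hypothetical linear model whose coefficients serve as the known ground truth. All names (`local_surrogate_weights`, `true_w`, `kernel_width`) are illustrative.

```python
import numpy as np

def local_surrogate_weights(predict, x, n_samples=2000, scale=1.0,
                            kernel_width=1.0, seed=0):
    """Fit a proximity-weighted linear surrogate around instance x.

    Simplified illustration only: real tools add steps such as
    discretization or feature selection that are omitted here.
    """
    rng = np.random.default_rng(seed)
    # Perturb the instance with Gaussian noise (an assumed sampling scheme).
    Z = x + rng.normal(0.0, scale, size=(n_samples, x.shape[0]))
    y = predict(Z)
    # Exponential kernel on Euclidean distance to the explained instance.
    d = np.linalg.norm(Z - x, axis=1)
    w = np.exp(-(d ** 2) / kernel_width ** 2)
    # Weighted least squares via row scaling by sqrt(weight).
    A = np.hstack([Z, np.ones((n_samples, 1))])
    sw = np.sqrt(w)
    coef, *_ = np.linalg.lstsq(A * sw[:, None], y * sw, rcond=None)
    return coef[:-1], coef[-1]

# Hypothetical linear additive model with known coefficients.
true_w = np.array([2.0, -1.0, 0.5])
true_b = 0.3

def linear_model(Z):
    return Z @ true_w + true_b

x = np.array([1.0, 2.0, -1.0])
est_w, est_b = local_surrogate_weights(linear_model, x)

# Cosine similarity between explanation and ground-truth coefficients.
similarity = est_w @ true_w / (np.linalg.norm(est_w) * np.linalg.norm(true_w))
print("estimated weights:", est_w)
print("cosine similarity:", similarity)
```

In this idealized setting the surrogate recovers the true coefficients, because the model is exactly linear in the same features the surrogate uses. The abstract's point is that practical techniques can still deviate from such a ground truth, and comparing explanation scores against known coefficients is one way to measure that.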

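Source journal: Data Mining and Knowledge Discovery

Engineering & Technology - Computer Science: Artificial Intelligence

CiteScore: 10.40

Self-citation rate: 4.20%

Publication volume: 68

Review time: 10 months

About the journal: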

Advances in data gathering, storage, and distribution have created a need for computational tools and techniques to aid in data analysis. Data Mining and Knowledge Discovery in Databases (KDD) is a rapidly growing area of research and application that builds on techniques and theories from many fields, including statistics, databases, pattern recognition and learning, data visualization, uncertainty modelling, data warehousing and OLAP, optimization, and high performance computing.
