Generating Virtual Training Labels for Crop Classification from Fused Sentinel-1 and Sentinel-2 Time Series

IF 3.3

Zone 4, Earth Sciences

Q3 IMAGING SCIENCE & PHOTOGRAPHIC TECHNOLOGY

PFG-Journal of Photogrammetry Remote Sensing and Geoinformation Science

Pub Date : 2023-09-26

DOI:10.1007/s41064-023-00256-w

Citations: 0

Abstract

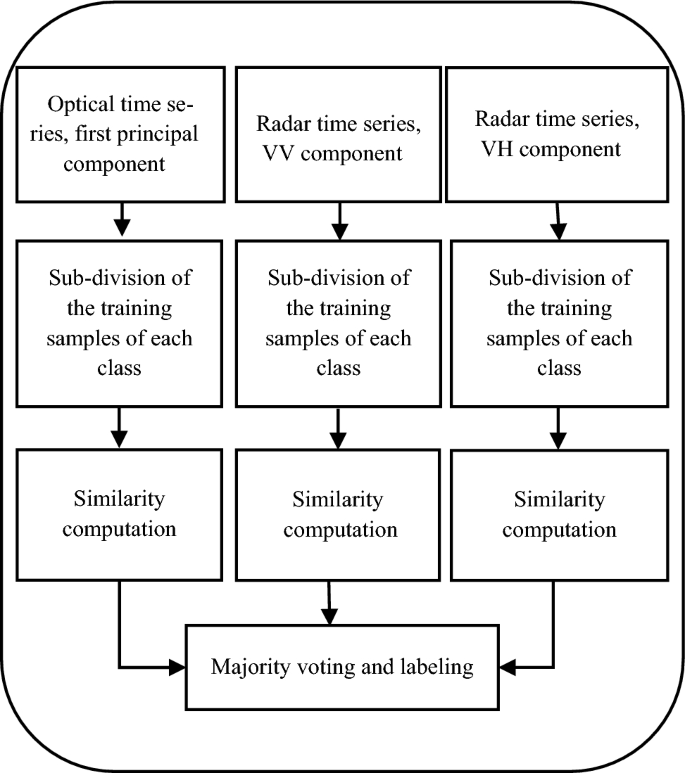


Generating Virtual Training Labels for Crop Classification from Fused Sentinel-1 and Sentinel-2 Time Series

Convolutional neural networks (CNNs) have shown results superior to most traditional image understanding approaches in many fields, including crop classification from satellite time series images. However, CNNs require a large number of training samples to be trained properly, and collecting and labeling such samples with traditional methods can be both time-consuming and costly. To address this issue and improve classification accuracy, generating virtual training labels (VTL) from existing ones is a promising solution. To this end, this study proposes a novel method for generating VTL: the training samples of each crop are sub-divided using self-organizing maps (SOM), and labels are then assigned to a set of unlabeled pixels based on their distance to these sub-classes. We apply the new method to crop classification from Sentinel images. A three-dimensional (3D) CNN is used to extract features from the fusion of optical and radar time series. The evaluation shows that the proposed method is effective in generating VTL: it achieves an overall accuracy (OA) of 95.3% and a kappa coefficient (KC) of 94.5%, compared to 91.3% and 89.9% for a solution without VTL. The results suggest that the proposed method has the potential to enhance the classification accuracy of crops using VTL.
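The labeling idea described in the abstract can be illustrated with a minimal sketch. It is not the authors' implementation: it assumes each pixel's time series is flattened into one feature vector, uses a small hand-written 1-D SOM per crop class, and adds a hypothetical `max_dist` threshold for rejecting pixels that lie far from every sub-class. The function names, the number of SOM units, and the learning schedule are all illustrative assumptions.

```python
import numpy as np


def train_som(samples, n_units=4, epochs=20, lr0=0.5, seed=0):
    """Fit a tiny 1-D SOM and return its unit weights (sub-class prototypes)."""
    rng = np.random.default_rng(seed)
    # Initialise the units from randomly chosen training samples.
    weights = samples[rng.choice(len(samples), n_units, replace=True)].astype(float)
    positions = np.arange(n_units)
    n_steps = epochs * len(samples)
    step = 0
    for _ in range(epochs):
        for x in samples[rng.permutation(len(samples))]:
            frac = step / n_steps
            lr = lr0 * (1.0 - frac)                      # decaying learning rate
            sigma = max(n_units / 2.0 * (1.0 - frac), 0.5)  # shrinking neighbourhood
            bmu = np.argmin(np.linalg.norm(weights - x, axis=1))  # best-matching unit
            h = np.exp(-((positions - bmu) ** 2) / (2.0 * sigma ** 2))
            weights += lr * h[:, None] * (x - weights)
            step += 1
    return weights


def generate_virtual_labels(features, labels, unlabeled, max_dist, n_units=4):
    """Assign each unlabeled pixel the class of its nearest SOM sub-class.

    `max_dist` is an assumed rejection threshold, not taken from the paper.
    Returns (virtual_labels, accepted_mask).
    """
    protos, proto_cls = [], []
    for c in np.unique(labels):
        w = train_som(features[labels == c], n_units=n_units)  # one SOM per crop
        protos.append(w)
        proto_cls.append(np.full(len(w), c))
    protos = np.vstack(protos)
    proto_cls = np.concatenate(proto_cls)
    # Euclidean distance from every unlabeled pixel to every prototype.
    d = np.linalg.norm(unlabeled[:, None, :] - protos[None, :, :], axis=2)
    nearest = d.argmin(axis=1)
    accepted = d[np.arange(len(unlabeled)), nearest] <= max_dist
    return proto_cls[nearest], accepted
```

In this sketch, only pixels with `accepted == True` would be added to the training set as virtual labels; how the paper selects or filters candidate pixels is not stated in the abstract.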


Source journal

PFG-Journal of Photogrammetry Remote Sensing and Geoinformation Science

Physics and Astronomy-Instrumentation

CiteScore

8.20

Self-citation rate

2.40%

Articles published

38

About the journal:

PFG is an international scholarly journal covering the progress and application of photogrammetric methods, remote sensing technology and the interconnected field of geoinformation science. It places special editorial emphasis on the communication of new methodologies in data acquisition and new approaches to optimized processing and interpretation of all types of data which were acquired by photogrammetric methods, remote sensing, image processing and the computer-aided interpretation of such data in general. The journal hence addresses both researchers and students of these disciplines at academic institutions and universities as well as the downstream users in both the private sector and public administration.

Founded in 1926 under the former name Bildmessung und Luftbildwesen, PFG is the world's oldest journal on photogrammetry. It is the official journal of the German Society for Photogrammetry, Remote Sensing and Geoinformation (DGPF).
