Assessing Inter-Annotator Agreement for Medical Image Segmentation

IF 3.4

CAS Zone 3, Computer Science

Q2 COMPUTER SCIENCE, INFORMATION SYSTEMS

Citations: 4

Abstract

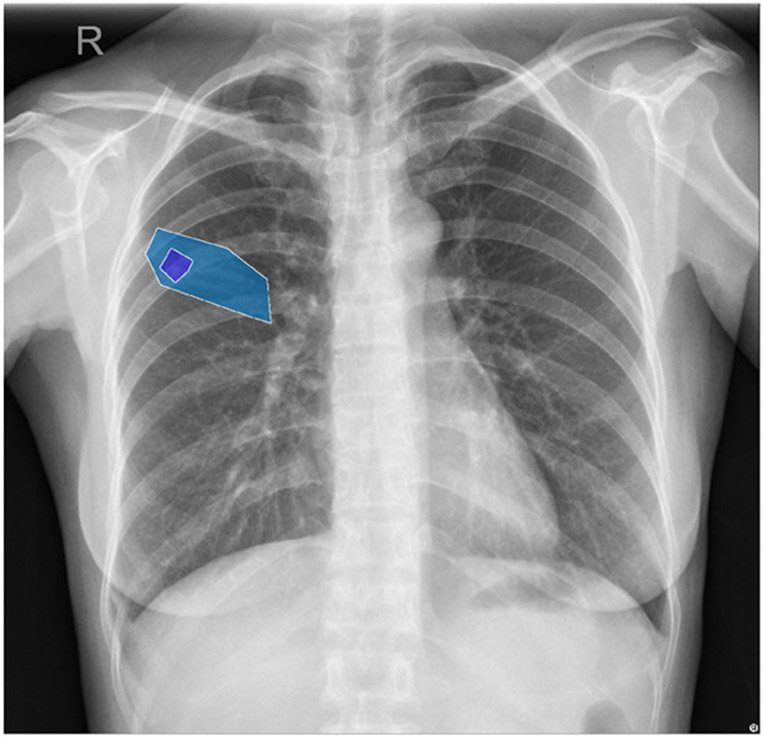

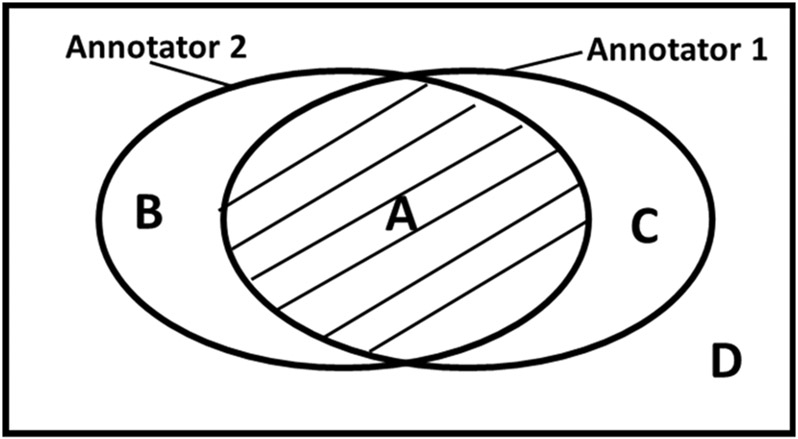

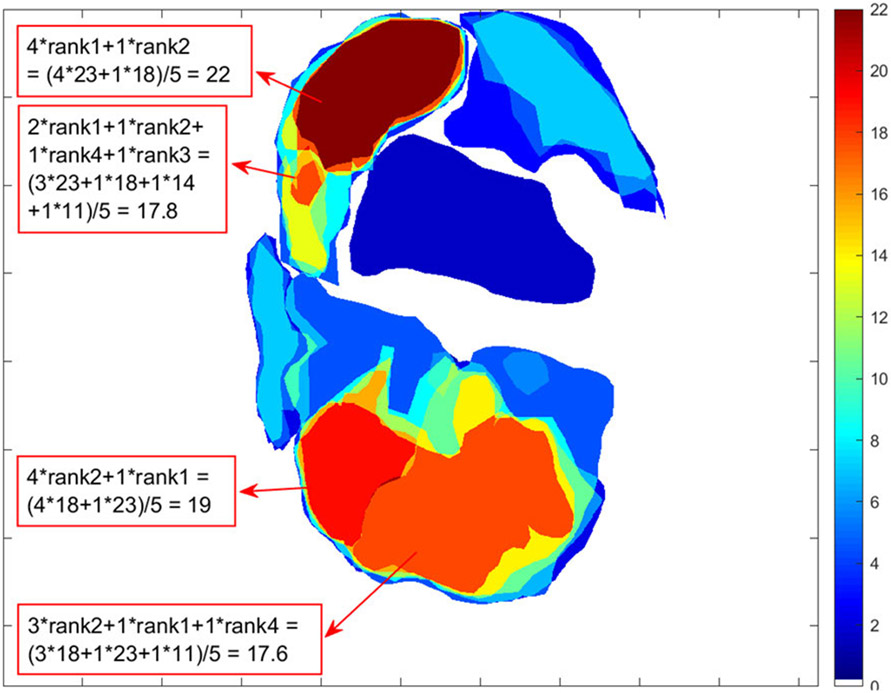

The training and evaluation of Artificial Intelligence (AI)-based medical computer vision algorithms depend on annotations and labels. However, variability between expert annotators introduces noise into the training data, which can degrade the performance of AI algorithms. This study aims to assess, illustrate, and interpret the agreement among multiple expert annotators who segment the same lesions or abnormalities on medical images. We propose three complementary approaches for the qualitative and quantitative assessment of inter-annotator agreement. The first is a common agreement heatmap and a ranking agreement heatmap. The second is the extended Cohen’s kappa and Fleiss’ kappa coefficients, used for the quantitative evaluation and interpretation of inter-annotator reliability. The third is the Simultaneous Truth and Performance Level Estimation (STAPLE) algorithm, run as a parallel step to generate ground truth for training AI models, together with Intersection over Union (IoU), sensitivity, and specificity computed to assess inter-annotator reliability and variability. Experiments are performed on two datasets: cervical colposcopy images from 30 patients and chest X-ray images from 336 tuberculosis (TB) patients. They demonstrate the consistency of the inter-annotator reliability assessment and the importance of combining different metrics to avoid a biased assessment.
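The common agreement heatmap and the kappa coefficients described above can be sketched as follows. This is a minimal illustration, not the authors' implementation. It assumes that each annotator's segmentation is a binary NumPy mask of the same shape and that every pixel is a rated item. The function names are hypothetical.

The paper's "extended" Cohen's kappa is not specified here, so the sketch substitutes the standard two-rater Cohen's kappa averaged over all annotator pairs. Fleiss' kappa is the standard multi-rater form with two categories (background, lesion). The ranking agreement heatmap is not reproduced.

```python
import itertools

import numpy as np


def common_agreement_heatmap(masks: np.ndarray) -> np.ndarray:
    """Per-pixel count of annotators who labeled the pixel as lesion.

    masks: array of shape (n_annotators, H, W) with values in {0, 1}.
    """
    return masks.astype(int).sum(axis=0)


def cohen_kappa(a: np.ndarray, b: np.ndarray) -> float:
    """Standard two-rater Cohen's kappa for binary masks, pixels as items."""
    a = a.ravel().astype(bool)
    b = b.ravel().astype(bool)
    p_o = np.mean(a == b)                    # observed agreement
    p_a, p_b = a.mean(), b.mean()            # each rater's lesion rate
    p_e = p_a * p_b + (1 - p_a) * (1 - p_b)  # agreement expected by chance
    if p_e == 1.0:                           # both masks identical and constant
        return 1.0
    return float((p_o - p_e) / (1 - p_e))


def mean_pairwise_cohen_kappa(masks: np.ndarray) -> float:
    """Average Cohen's kappa over all annotator pairs (a stand-in, see text)."""
    pairs = itertools.combinations(range(masks.shape[0]), 2)
    return float(np.mean([cohen_kappa(masks[i], masks[j]) for i, j in pairs]))


def fleiss_kappa(masks: np.ndarray) -> float:
    """Fleiss' kappa with two categories (background, lesion), pixels as items."""
    n = masks.shape[0]
    pos = masks.reshape(n, -1).sum(axis=0).astype(float)   # "lesion" votes per pixel
    counts = np.stack([n - pos, pos], axis=1)              # (n_pixels, 2) vote table
    p_i = (np.sum(counts**2, axis=1) - n) / (n * (n - 1))  # per-pixel agreement
    p_j = counts.sum(axis=0) / counts.sum()                # overall category shares
    p_bar, p_e = p_i.mean(), np.sum(p_j**2)
    if p_e == 1.0:                                         # every vote in one category
        return 1.0
    return float((p_bar - p_e) / (1 - p_e))
```

Every background pixel counts as an item, so both kappa values depend on how much of the image is lesion (prevalence). They are therefore best read alongside overlap metrics such as IoU, which ignore true-negative pixels.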
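The STAPLE step described above can be sketched as a minimal binary expectation-maximization loop. It is followed by the IoU, sensitivity, and specificity of each annotator against the thresholded consensus. This is a sketch under stated assumptions, not the authors' code. It uses a single global foreground prior with no spatial prior, initial sensitivity and specificity of 0.99, and a 0.5 threshold on the posterior. The names `staple_binary` and `overlap_metrics` are hypothetical. Toolkits such as ITK also ship a STAPLE filter that may be preferable in practice.

```python
import numpy as np


def staple_binary(masks, n_iter=100, tol=1e-8, eps=1e-6):
    """Minimal binary STAPLE via expectation-maximization.

    masks: (n_annotators, H, W) array with values in {0, 1}.
    Returns (w, p, q): per-pixel probability that the true label is lesion,
    and each annotator's estimated sensitivity p and specificity q.
    Assumes a single global foreground prior (no spatial prior).
    """
    n = masks.shape[0]
    d = masks.reshape(n, -1).astype(float)   # (n_annotators, n_pixels)
    f = np.clip(d.mean(), eps, 1 - eps)      # global prior P(lesion)
    p = np.full(n, 0.99)                     # initial sensitivities
    q = np.full(n, 0.99)                     # initial specificities
    w = d.mean(axis=0)                       # previous estimate, used for convergence
    for _ in range(n_iter):
        # E-step: log-likelihood of each pixel's votes under "lesion" / "background".
        log_a = np.log(f) + (d * np.log(p)[:, None]
                             + (1 - d) * np.log(1 - p)[:, None]).sum(axis=0)
        log_b = np.log(1 - f) + ((1 - d) * np.log(q)[:, None]
                                 + d * np.log(1 - q)[:, None]).sum(axis=0)
        w_new = np.exp(log_a - np.logaddexp(log_a, log_b))  # posterior P(lesion)
        # M-step: re-estimate sensitivity and specificity from the soft labels.
        p = np.clip((d * w_new).sum(axis=1) / max(w_new.sum(), eps), eps, 1 - eps)
        q = np.clip(((1 - d) * (1 - w_new)).sum(axis=1)
                    / max((1 - w_new).sum(), eps), eps, 1 - eps)
        done = np.max(np.abs(w_new - w)) < tol
        w = w_new
        if done:
            break
    return w.reshape(masks.shape[1:]), p, q


def overlap_metrics(pred, ref):
    """IoU, sensitivity and specificity of a binary mask against a reference."""
    pred, ref = pred.astype(bool), ref.astype(bool)
    tp = np.sum(pred & ref)
    fp = np.sum(pred & ~ref)
    fn = np.sum(~pred & ref)
    tn = np.sum(~pred & ~ref)
    iou = tp / (tp + fp + fn) if tp + fp + fn else 1.0
    sens = tp / (tp + fn) if tp + fn else 1.0
    spec = tn / (tn + fp) if tn + fp else 1.0
    return float(iou), float(sens), float(spec)
```

Thresholding `w` at 0.5 gives a binary consensus mask. The `p` and `q` returned by the loop are STAPLE's own estimates of each annotator's sensitivity and specificity. By contrast, `overlap_metrics` compares each annotator's mask with the thresholded consensus.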

Source journal

IEEE Access

COMPUTER SCIENCE, INFORMATION SYSTEMS; ENGINEERING, ELECTRICAL & ELECTRONIC

CiteScore: 9.80

Self-citation rate: 7.70%

Articles published: 6673

Review time: 6 weeks

About the journal:

IEEE Access® is a multidisciplinary, open access (OA), applications-oriented, all-electronic archival journal that continuously presents the results of original research or development across all of IEEE's fields of interest.

IEEE Access will publish articles that are of high interest to readers, original, technically correct, and clearly presented. Supported by author publication charges (APC), its hallmarks are a rapid peer review and publication process with open access to all readers. Unlike IEEE's traditional Transactions or Journals, reviews are "binary", in that reviewers will either Accept or Reject an article in the form it is submitted in order to achieve rapid turnaround. Especially encouraged are submissions on:

Multidisciplinary topics, or applications-oriented articles and negative results that do not fit within the scope of IEEE's traditional journals.

Practical articles discussing new experiments or measurement techniques, or interesting solutions to engineering problems.

Development of new or improved fabrication or manufacturing techniques.

Reviews or survey articles of new or evolving fields oriented to assist others in understanding the new area.
