Augmented Reality Visualization of Autonomous Mobile Robot Change Detection in Uninstrumented Environments

IF 5.5

Q2 ROBOTICS

Citations: 0

Abstract

Creating information-transparency solutions that enable humans to understand robot perception is a challenging but necessary step if autonomous, artificially intelligent robots are to have an impact across many domains. By taking advantage of the comprehensive, high-volume data produced by robot teammates' advanced perception and reasoning capabilities, humans will be able to make better decisions, with significant impacts ranging from safety to functionality. We present a solution to this challenge that couples augmented reality (AR) with an intelligent mobile robot that autonomously detects novel changes in an environment. We show that the human teammate can understand, and make decisions based on, information the robot shares via AR. Sharing of robot-perceived information is enabled by the robot's online calculation of the human's relative position, which makes the system robust in environments without external instrumentation such as GPS. Our robotic system performs change detection by comparing current metric sensor readings against a previous reading to identify differences. We experimentally explore how several factors affect shared situational awareness in human-robot teams: the design of change detection visualizations and the aggregation of information, the impact of instruction on communication understanding, the effects of visualization and alignment error, and the relationship between situated 3D visualization in AR and human movement in the operational environment. We demonstrate this novel capability and assess the effectiveness of human-robot teaming in crowdsourced, data-driven studies, as well as in an in-person study in which participants wear a commercial off-the-shelf AR headset and are teamed with a small ground robot that maneuvers through the environment. The mobile robot scans for changes, which are visualized to the participant via AR. The effectiveness of this communication is evaluated through accuracy and subjective assessment metrics, providing insight into participants' interpretation and experience.
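The abstract states only that change detection compares current metric sensor readings against a previous reading. As an illustration, here is a minimal Python sketch of one common way to do that, voxel-occupancy differencing, assuming both scans are 3D point clouds already registered into the same map frame. The function names (`occupied_voxels`, `detect_changes`, `voxel_center`) and parameters (`voxel_size`, `min_points`) are hypothetical and not taken from the paper; the authors' actual method may differ.

```python
from collections import Counter
from typing import Iterable, Set, Tuple

Point = Tuple[float, float, float]
Voxel = Tuple[int, int, int]


def occupied_voxels(points: Iterable[Point], voxel_size: float,
                    min_points: int = 1) -> Set[Voxel]:
    """Return indices of voxels containing at least `min_points` points.

    Requiring several points per voxel is a simple (assumed) way to
    suppress isolated noisy returns.
    """
    counts = Counter(
        (int(x // voxel_size), int(y // voxel_size), int(z // voxel_size))
        for x, y, z in points
    )
    return {voxel for voxel, n in counts.items() if n >= min_points}


def detect_changes(previous: Iterable[Point], current: Iterable[Point],
                   voxel_size: float = 0.1,
                   min_points: int = 1) -> Tuple[Set[Voxel], Set[Voxel]]:
    """Compare a prior scan with the current one (both in the same frame).

    Returns (appeared, disappeared): voxels occupied only in the current
    scan, and voxels occupied only in the previous scan.
    """
    before = occupied_voxels(previous, voxel_size, min_points)
    after = occupied_voxels(current, voxel_size, min_points)
    return after - before, before - after


def voxel_center(voxel: Voxel, voxel_size: float) -> Point:
    """Metric center of a voxel, e.g. as a position for a visual marker."""
    i, j, k = voxel
    return ((i + 0.5) * voxel_size, (j + 0.5) * voxel_size, (k + 0.5) * voxel_size)


if __name__ == "__main__":
    previous = [(0.1, 0.1, 0.1), (1.2, 0.2, 0.0)]
    current = [(0.1, 0.1, 0.1), (2.3, 0.4, 0.0)]
    appeared, disappeared = detect_changes(previous, current, voxel_size=0.5)
    print("appeared:", appeared)        # {(4, 0, 0)}
    print("disappeared:", disappeared)  # {(2, 0, 0)}
```

In an AR pipeline, the centers from `voxel_center` could serve as marker positions once transformed into the headset's frame using the robot's estimate of the human's relative pose; `min_points` trades sensitivity for robustness to sparse sensor noise.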




Source journal

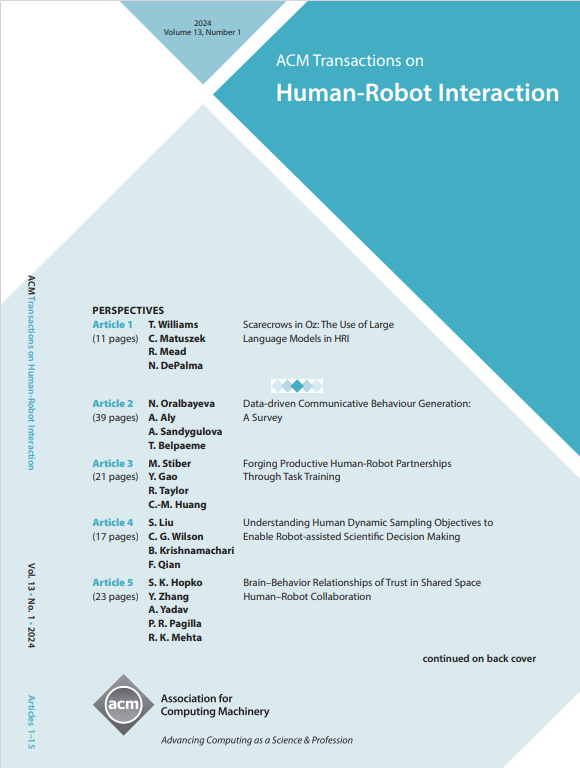

ACM Transactions on Human-Robot Interaction

Computer Science-Artificial Intelligence

CiteScore

7.70

Self-citation rate

5.90%

Articles published

65

About the journal:

ACM Transactions on Human-Robot Interaction (THRI) is a prestigious Gold Open Access journal that aspires to lead the field of human-robot interaction as a top-tier, peer-reviewed, interdisciplinary publication. The journal prioritizes articles that significantly contribute to the current state of the art, enhance overall knowledge, have a broad appeal, and are accessible to a diverse audience. Submissions are expected to meet a high scholarly standard, and authors are encouraged to ensure their research is well-presented, advancing the understanding of human-robot interaction, adding cutting-edge or general insights to the field, or challenging current perspectives in this research domain.

THRI warmly invites well-crafted paper submissions from a variety of disciplines, encompassing robotics, computer science, engineering, design, and the behavioral and social sciences. The scholarly articles published in THRI may cover a range of topics such as the nature of human interactions with robots and robotic technologies, methods to enhance or enable novel forms of interaction, and the societal or organizational impacts of these interactions. The editorial team is also keen on receiving proposals for special issues that focus on specific technical challenges or that apply human-robot interaction research to further areas like social computing, consumer behavior, health, and education.
