Transparent Value Alignment

IF 5.5

Q2 ROBOTICS

Citations: 0

Abstract

As robots become increasingly prevalent in our communities, aligning the values motivating their behavior with human values is critical. However, it is often difficult or impossible for humans, both expert and non-expert, to enumerate values comprehensively, accurately, and in forms that are readily usable for robot planning. Misspecification can lead to undesired, inefficient, or even dangerous behavior. In the value alignment problem, humans and robots work together to optimize human objectives, which are often represented as reward functions and which the robot can infer by observing human actions. In existing alignment approaches, no explicit feedback about this inference process is provided to the human. In this paper, we introduce an exploratory framework to address this problem, which we call Transparent Value Alignment (TVA). TVA suggests that techniques from explainable AI (XAI) be explicitly applied to provide humans with information about the robot's beliefs throughout learning, enabling efficient and effective human feedback.


求助全文

约1分钟内获得全文

求助全文

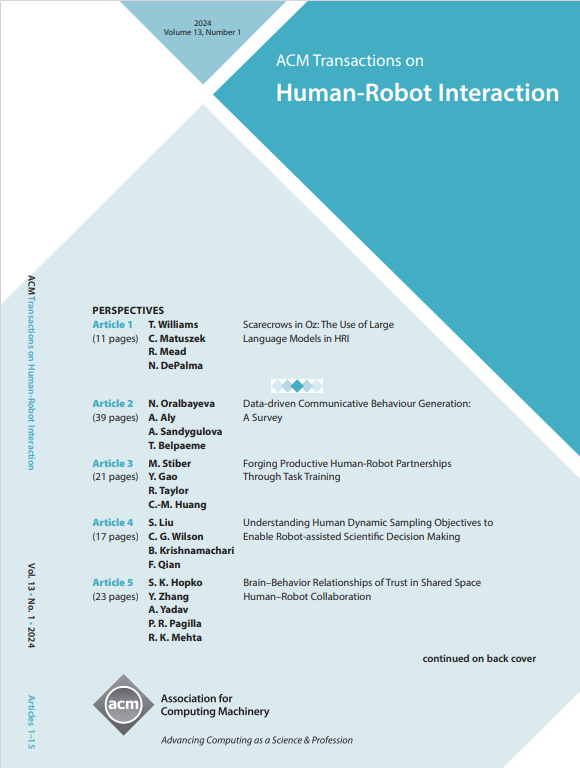

Source journal

ACM Transactions on Human-Robot Interaction

Computer Science-Artificial Intelligence

CiteScore

7.70

Self-citation rate

5.90%

Articles published

65

Journal description:

ACM Transactions on Human-Robot Interaction (THRI) is a prestigious Gold Open Access journal that aspires to lead the field of human-robot interaction as a top-tier, peer-reviewed, interdisciplinary publication. The journal prioritizes articles that significantly contribute to the current state of the art, enhance overall knowledge, have a broad appeal, and are accessible to a diverse audience. Submissions are expected to meet a high scholarly standard, and authors are encouraged to ensure their research is well-presented, advancing the understanding of human-robot interaction, adding cutting-edge or general insights to the field, or challenging current perspectives in this research domain.

THRI warmly invites well-crafted paper submissions from a variety of disciplines, encompassing robotics, computer science, engineering, design, and the behavioral and social sciences. The scholarly articles published in THRI may cover a range of topics such as the nature of human interactions with robots and robotic technologies, methods to enhance or enable novel forms of interaction, and the societal or organizational impacts of these interactions. The editorial team is also keen on receiving proposals for special issues that focus on specific technical challenges or that apply human-robot interaction research to further areas like social computing, consumer behavior, health, and education.
