MITS: A large-scale multimodal benchmark dataset for Intelligent Traffic Surveillance

IF 4.2

3区 计算机科学

Q2 COMPUTER SCIENCE, ARTIFICIAL INTELLIGENCE

引用次数: 0

Abstract

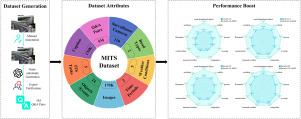

General-domain large multimodal models (LMMs) have achieved significant advances in various image-text tasks. However, their performance in the Intelligent Traffic Surveillance (ITS) domain remains limited due to the absence of dedicated multimodal datasets. To address this gap, we introduce MITS (Multimodal Intelligent Traffic Surveillance), the first large-scale multimodal benchmark dataset specifically designed for ITS. MITS includes 170,400 independently collected real-world ITS images sourced from traffic surveillance cameras, annotated with eight main categories and 24 subcategories of ITS-specific objects and events under diverse environmental conditions. Additionally, through a systematic data generation pipeline, we generate high-quality image captions and 5 million instruction-following visual question-answer pairs, addressing five critical ITS tasks: object and event recognition, object counting, object localization, background analysis, and event reasoning. To demonstrate MITS’s effectiveness, we fine-tune mainstream LMMs on this dataset, enabling the development of ITS-specific applications. Experimental results show that MITS significantly improves LMM performance in ITS applications, increasing LLaVA-1.5’s performance from 0.494 to 0.905 (+83.2%), LLaVA-1.6’s from 0.678 to 0.921 (+35.8%), Qwen2-VL’s from 0.584 to 0.926 (+58.6%), and Qwen2.5-VL’s from 0.732 to 0.930 (+27.0%). We release the dataset, code, and models as open-source, providing high-value resources to advance both ITS and LMM research.

智能交通监控的大规模多模态基准数据集

通用域大多模态模型(lmm)在各种图像-文本任务中取得了重大进展。然而,由于缺乏专用的多模式数据集,它们在智能交通监控(ITS)领域的性能仍然有限。为了解决这一差距,我们引入了MITS(多模式智能交通监控),这是第一个专门为ITS设计的大规模多模式基准数据集。MITS包括170,400张独立收集的真实世界ITS图像,这些图像来自交通监控摄像头,并标注了不同环境条件下ITS特定对象和事件的8个主要类别和24个子类别。此外,通过系统的数据生成管道,我们生成高质量的图像字幕和500万个指令跟随的视觉问答对,解决五个关键的ITS任务:物体和事件识别、物体计数、物体定位、背景分析和事件推理。为了证明MITS的有效性,我们在这个数据集上对主流lmm进行了微调,从而能够开发特定于MITS的应用程序。实验结果表明,MITS显著提高了LMM在ITS应用中的性能,llva -1.5的性能从0.494提高到0.905 (+83.2%),llva -1.6的性能从0.678提高到0.921 (+35.8%),Qwen2-VL的性能从0.584提高到0.926 (+58.6%),Qwen2.5-VL的性能从0.732提高到0.930(+27.0%)。我们以开源的方式发布数据集、代码和模型,为推进ITS和LMM研究提供高价值的资源。

本文章由计算机程序翻译,如有差异,请以英文原文为准。

求助全文

约1分钟内获得全文

求助全文

来源期刊

Image and Vision Computing

工程技术-工程:电子与电气

CiteScore

8.50

自引率

8.50%

发文量

143

审稿时长

7.8 months

期刊介绍:

Image and Vision Computing has as a primary aim the provision of an effective medium of interchange for the results of high quality theoretical and applied research fundamental to all aspects of image interpretation and computer vision. The journal publishes work that proposes new image interpretation and computer vision methodology or addresses the application of such methods to real world scenes. It seeks to strengthen a deeper understanding in the discipline by encouraging the quantitative comparison and performance evaluation of the proposed methodology. The coverage includes: image interpretation, scene modelling, object recognition and tracking, shape analysis, monitoring and surveillance, active vision and robotic systems, SLAM, biologically-inspired computer vision, motion analysis, stereo vision, document image understanding, character and handwritten text recognition, face and gesture recognition, biometrics, vision-based human-computer interaction, human activity and behavior understanding, data fusion from multiple sensor inputs, image databases.

求助内容:

求助内容: 应助结果提醒方式:

应助结果提醒方式: