Overcoming computational bottlenecks in large language models through analog in-memory computing

IF 18.3

Q1 COMPUTER SCIENCE, INTERDISCIPLINARY APPLICATIONS

Citations: 0

Abstract

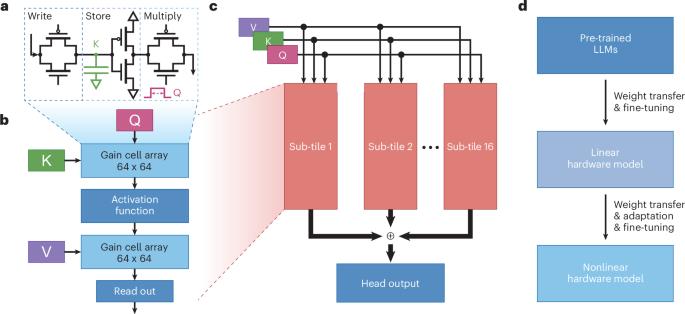

A recent study demonstrates the potential of in-memory computing architectures for implementing large language models, improving computational efficiency in both time and energy while maintaining high accuracy.
