JointDiffusion: Joint representation learning for generative, predictive, and self-explainable AI in healthcare

IF 4.9

Zone 2, Medicine

Q1 ENGINEERING, BIOMEDICAL

Computerized Medical Imaging and Graphics

Pub Date : 2025-08-14

DOI:10.1016/j.compmedimag.2025.102619

Citations: 0

Abstract

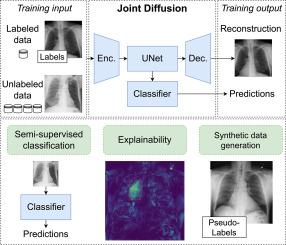

Joint machine learning models that both synthesize and classify data often perform unevenly across the two tasks or are unstable to train. In this work, we start from a set of empirical observations indicating that the internal representations built by contemporary deep diffusion-based generative models are useful not only for generation but also for prediction. We then propose extending the vanilla diffusion model with a classifier, which allows stable joint end-to-end training with parameters shared between the two objectives. The resulting joint diffusion model outperforms recent state-of-the-art hybrid methods in both classification and generation quality on all evaluated benchmarks. Building on this joint training approach, we apply it to the medical data domain and show how joint training can help with problems that are crucial there. We show that Joint Diffusion achieves superior performance in the semi-supervised setup, where human annotation is scarce, while also explaining its decisions by generating counterfactual examples.
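
The shared-parameterization idea in the abstract can be illustrated with a toy sketch. This is not the authors' implementation: the one-layer `shared_encoder`, the two linear heads, the linear noise schedule, the weighting factor `alpha`, and the choice to classify the clean input are all assumptions made only for illustration. It shows a single joint loss, a noise-prediction error plus a weighted cross-entropy, in which both terms depend on the same encoder weights.

```python
import math
import random

def shared_encoder(x, w_enc):
    """Hypothetical shared feature extractor: a single tanh layer."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in w_enc]

def linear(h, w):
    """Plain linear head (no bias) applied to the shared features."""
    return [sum(wi * hi for wi, hi in zip(row, h)) for row in w]

def joint_loss(x, label, t_frac, params, alpha=1.0, rng=random):
    """Return (total, diffusion_loss, classification_loss) for one sample.

    t_frac in [0, 1] is a toy noise level; alpha weights the classifier term.
    Both names are assumptions, not taken from the paper.
    """
    # Forward diffusion step: mix the input with Gaussian noise.
    a = 1.0 - t_frac
    eps = [rng.gauss(0.0, 1.0) for _ in x]
    x_t = [math.sqrt(a) * xi + math.sqrt(1.0 - a) * ei for xi, ei in zip(x, eps)]

    # Generative objective: predict the added noise from the noisy input.
    eps_hat = linear(shared_encoder(x_t, params["enc"]), params["denoise"])
    l_diff = sum((e - eh) ** 2 for e, eh in zip(eps, eps_hat)) / len(x)

    # Predictive objective: classify from the same encoder's features.
    logits = linear(shared_encoder(x, params["enc"]), params["cls"])
    m = max(logits)
    log_z = m + math.log(sum(math.exp(l - m) for l in logits))
    l_cls = log_z - logits[label]  # cross-entropy for the true label

    return l_diff + alpha * l_cls, l_diff, l_cls

# Example call with made-up weights: 2 input features, 2 hidden units, 3 classes.
params = {
    "enc": [[0.1, -0.2], [0.3, 0.4]],
    "denoise": [[0.5, 0.0], [0.0, 0.5]],
    "cls": [[0.2, -0.1], [0.0, 0.3], [-0.4, 0.1]],
}
total, l_diff, l_cls = joint_loss([1.0, -1.0], label=2, t_frac=0.5, params=params)
```

Because `params["enc"]` enters both loss terms, a gradient step on `total` would update the encoder for generation and classification at once, which is the sense in which the two objectives share parameters in this sketch.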

Source journal

CiteScore: 10.70

Self-citation rate: 3.50%

Articles published: 71

Review time: 26 days

About the journal:

The purpose of the journal Computerized Medical Imaging and Graphics is to act as a source for the exchange of research results concerning algorithmic advances, development, and application of digital imaging in disease detection, diagnosis, intervention, prevention, precision medicine, and population health. Included in the journal will be articles on novel computerized imaging or visualization techniques, including artificial intelligence and machine learning, augmented reality for surgical planning and guidance, big biomedical data visualization, computer-aided diagnosis, computerized-robotic surgery, image-guided therapy, imaging scanning and reconstruction, mobile and tele-imaging, radiomics, and imaging integration and modeling with other information relevant to digital health. The types of biomedical imaging include: magnetic resonance, computed tomography, ultrasound, nuclear medicine, X-ray, microwave, optical and multi-photon microscopy, video and sensory imaging, and the convergence of biomedical images with other non-imaging datasets.
