Conceptual framework for prediction models of patient deterioration based on nursing documentation patterns: reproducibility and generalizability with a large number of hospitals across the United States

IF 4.5

Zone 2, Medicine

Q2 COMPUTER SCIENCE, INTERDISCIPLINARY APPLICATIONS

Citations: 0

Abstract

Objective

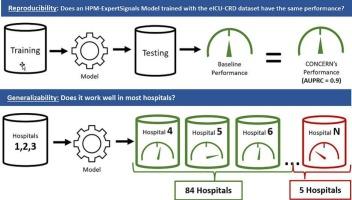

The Health Process Model (HPM)-ExpertSignals Conceptual Framework posits that healthcare professionals’ patient care behaviors can be used to predict in-hospital deterioration. Prediction models based on this framework have been validated using data from four hospitals within two healthcare systems. Because clinician-system interactions may differ across organizations, this study aimed to evaluate the reproducibility and generalizability of the underlying conceptual framework using data from over 200 hospitals across the US.

Methods

This study used eICU-CRD, a publicly accessible dataset with data from 208 US hospitals. A logistic regression model was developed to predict in-hospital deterioration following the HPM-ExpertSignals conceptual framework. To test reproducibility, patients were randomly split into training and testing datasets. After bootstrap testing of the model, the mean area under the precision-recall curve (AUPRC) was compared with results from previously published studies. To test generalizability, the hospitals in the dataset were randomly assigned to either a model training set or a testing set. After the model was trained on the training hospitals’ data, generalizability was measured as the percentage of testing hospitals with an AUPRC at or above the baseline performance obtained in the reproducibility experiment.
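The bootstrap evaluation of AUPRC described in the Methods can be illustrated with a minimal sketch. It is not the authors' code: the abstract does not specify the resampling scheme, so this assumes test-set patients are resampled with replacement and a percentile interval is taken. The function names `average_precision` and `bootstrap_auprc`, the number of resamples, and the toy data are all hypothetical, and AUPRC is approximated by step-wise average precision.

```python
import random


def average_precision(labels, scores):
    """Step-wise estimate of the area under the precision-recall curve.

    labels: list of 0/1 outcomes (1 = deterioration).
    scores: list of predicted risks, same length as labels.
    Ties in scores are ordered by their position in the input (stable sort).
    """
    n_pos = sum(labels)
    if n_pos == 0:
        raise ValueError("AUPRC is undefined without positive cases")
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits = 0
    total = 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            total += hits / rank  # precision at this positive case
    return total / n_pos


def bootstrap_auprc(labels, scores, n_boot=1000, seed=0):
    """Mean AUPRC and 95% percentile interval over bootstrap resamples.

    Assumption: resampling is done over test-set patients with replacement.
    """
    if sum(labels) == 0:
        raise ValueError("need at least one positive case")
    rng = random.Random(seed)
    n = len(labels)
    stats = []
    while len(stats) < n_boot:
        idx = [rng.randrange(n) for _ in range(n)]
        ys = [labels[i] for i in idx]
        if sum(ys) == 0:
            continue  # resample has no positives; draw again
        stats.append(average_precision(ys, [scores[i] for i in idx]))
    stats.sort()
    mean = sum(stats) / n_boot
    lower = stats[int(0.025 * n_boot)]
    upper = stats[min(n_boot - 1, int(0.975 * n_boot))]
    return mean, lower, upper


if __name__ == "__main__":
    # Toy data only; not drawn from eICU-CRD.
    toy_labels = [1, 0, 0, 1, 0, 0, 0, 1, 0, 0]
    toy_scores = [0.8, 0.3, 0.6, 0.7, 0.2, 0.1, 0.4, 0.5, 0.9, 0.05]
    print(bootstrap_auprc(toy_labels, toy_scores, n_boot=200))
```

Because the expected AUPRC of an uninformative model is roughly the outcome prevalence, a value such as 0.10 should be read against how rare deterioration is in the evaluated cohort.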

Results

The AUPRC in the reproducibility experiment was 0.10 (95% CI: 0.09, 0.11), equivalent to the AUPRC of 0.093 (95% CI: 0.09, 0.096) reported in a previous study. In the generalizability experiment, 94% of the testing hospitals had an AUPRC at or above the baseline AUPRC of 0.10.
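The generalizability measure reported above (the share of testing hospitals at or above a baseline AUPRC) can be sketched as follows. This is an illustration under stated assumptions rather than the study's implementation: the helper names, the 50/50 hospital split, and the choice to skip hospitals with no positive cases are hypothetical, since the abstract does not describe these details. AUPRC is again approximated by step-wise average precision.

```python
import random
from collections import defaultdict


def average_precision(labels, scores):
    """Step-wise estimate of the area under the precision-recall curve."""
    n_pos = sum(labels)
    if n_pos == 0:
        raise ValueError("AUPRC is undefined without positive cases")
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits, total = 0, 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            total += hits / rank
    return total / n_pos


def split_hospitals(hospital_ids, train_fraction=0.5, seed=0):
    """Randomly assign whole hospitals to training or testing.

    Assumption: train_fraction is illustrative; the abstract gives no ratio.
    """
    ids = sorted(set(hospital_ids))
    random.Random(seed).shuffle(ids)
    cut = int(len(ids) * train_fraction)
    return set(ids[:cut]), set(ids[cut:])


def share_at_or_above_baseline(records, baseline):
    """Fraction of hospitals whose AUPRC is at or above the baseline.

    records: iterable of (hospital_id, label, score) for testing hospitals.
    Assumption: hospitals without any positive case are skipped, because
    AUPRC cannot be computed for them.
    """
    by_hospital = defaultdict(lambda: ([], []))
    for hospital_id, label, score in records:
        labels, scores = by_hospital[hospital_id]
        labels.append(label)
        scores.append(score)
    per_hospital = {}
    for hospital_id, (labels, scores) in by_hospital.items():
        if sum(labels) == 0:
            continue
        per_hospital[hospital_id] = average_precision(labels, scores)
    if not per_hospital:
        raise ValueError("no hospital had a computable AUPRC")
    n_ok = sum(1 for value in per_hospital.values() if value >= baseline)
    return n_ok / len(per_hospital), per_hospital


if __name__ == "__main__":
    # Toy records only; hospital IDs and scores are made up.
    toy = [("A", 1, 0.9), ("A", 0, 0.1), ("B", 0, 0.9), ("B", 1, 0.1), ("C", 0, 0.5)]
    print(share_at_or_above_baseline(toy, baseline=0.6))
```

Splitting by hospital rather than by patient keeps every testing hospital entirely unseen during training, which is what lets the per-hospital comparison speak to transfer across sites.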

Conclusion

The study provides evidence supporting the reproducibility of a predictive model following the HPM-ExpertSignals framework. This model also generalized to most hospitals without additional training. Nevertheless, some hospitals still obtained lower-than-expected performance, highlighting the need for model evaluation and potential fine-tuning before local adoption. Similar studies are needed to investigate the reproducibility and generalizability of other classes of machine learning models in healthcare.

Source journal

Journal of Biomedical Informatics

Medicine - Computer Science, Interdisciplinary Applications

CiteScore

8.90

Self-citation rate

6.70%

Articles published

243

Review time

32 days

About the journal:

The Journal of Biomedical Informatics reflects a commitment to high-quality original research papers, reviews, and commentaries in the area of biomedical informatics methodology. Although we publish articles motivated by applications in the biomedical sciences (for example, clinical medicine, health care, population health, and translational bioinformatics), the journal emphasizes reports of new methodologies and techniques that have general applicability and that form the basis for the evolving science of biomedical informatics. Articles on medical devices; evaluations of implemented systems (including clinical trials of information technologies); or papers that provide insight into a biological process, a specific disease, or treatment options would generally be more suitable for publication in other venues. Papers on applications of signal processing and image analysis are often more suitable for biomedical engineering journals or other informatics journals, although we do publish papers that emphasize the information management and knowledge representation/modeling issues that arise in the storage and use of biological signals and images. System descriptions are welcome if they illustrate and substantiate the underlying methodology that is the principal focus of the report and an effort is made to address the generalizability and/or range of application of that methodology. Note also that, given the international nature of JBI, papers that deal with specific languages other than English, or with country-specific health systems or approaches, are acceptable for JBI only if they offer generalizable lessons that are relevant to the broad JBI readership, regardless of their country, language, culture, or health system.
